Text to Video AI Benchmarks: 2026 Performance Rankings

The latest text to video AI benchmarks for 2026 indicate a significant shift in the competitive landscape, with Runway Gen-4.5 currently leading the industry in visual fidelity and temporal consistency. These benchmarks evaluate how effectively generative models transform natural language prompts into high-definition video, measuring qualities such as motion fluidity, prompt adherence, and architectural efficiency. As of mid-2026, the industry has shifted toward multimodal reasoning, where models like NVIDIA’s Nemotron 3 Nano Omni are redefining how agents process and generate video content in real time.

Text to video AI benchmarks are standardized performance metrics used to evaluate the quality, realism, and processing speed of AI-generated video. In 2026, these benchmarks are dominated by Runway Gen-4.5, which outperforms competitors in motion dynamics, followed closely by Seedance 2.0 and the latest iterations from Google and OpenAI, as verified by MLPerf Inference v6.0 results.

- ✓ Runway Gen-4.5 has officially surpassed Google and OpenAI in key 2026 benchmark rankings for cinematic realism.

- ✓ The MLPerf Inference v6.0 release provides the most comprehensive data on hardware-level AI video generation efficiency.

- ✓ Seedance 2.0 has emerged as a top-tier infrastructure contender following its API integration with the fal platform.

- ✓ Multimodal reasoning, led by NVIDIA’s Nemotron 3 Nano Omni, is now a primary metric for "intelligent" video generation.

Understanding the 2026 Text to Video AI Benchmarks Landscape

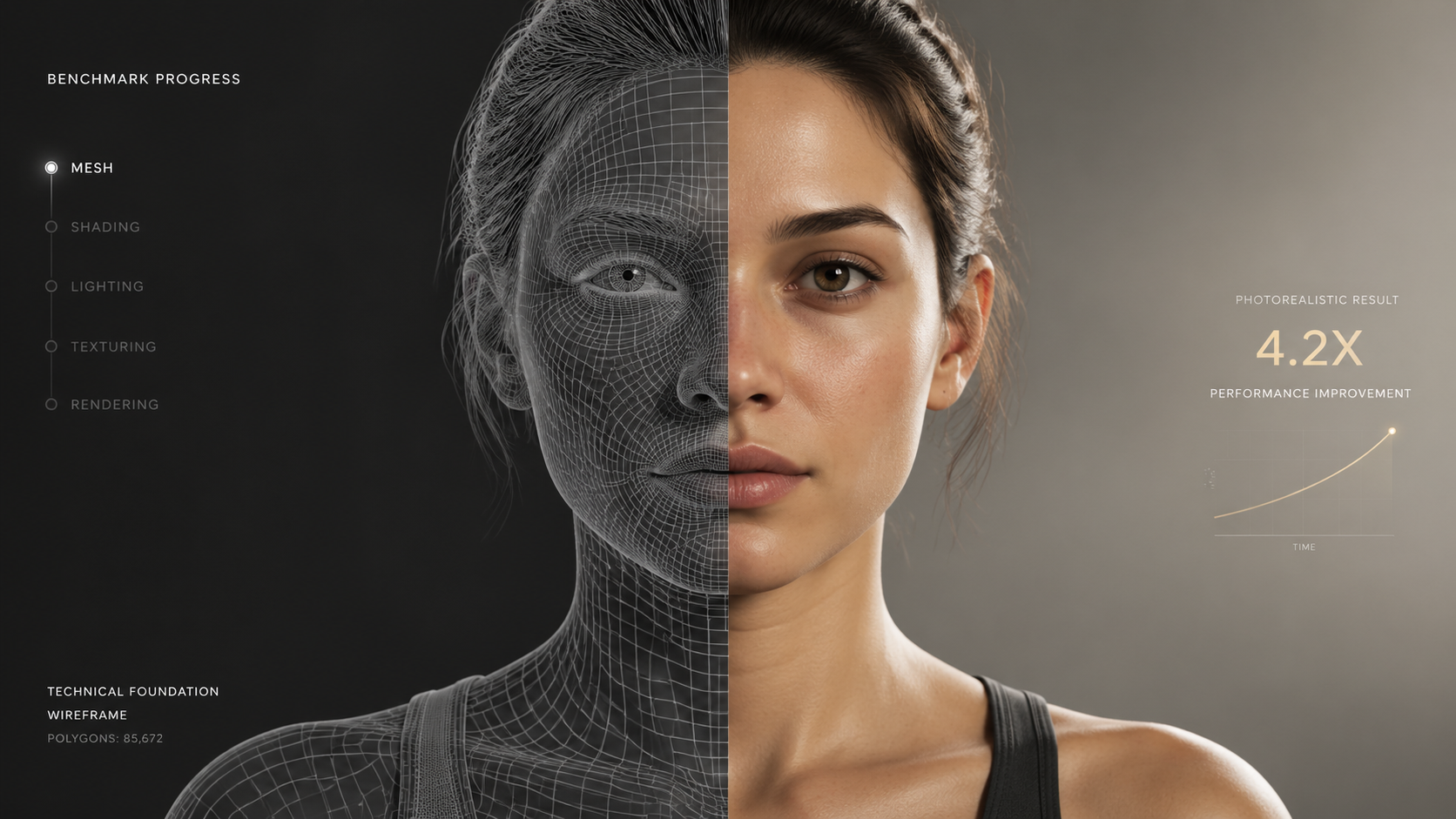

In the rapidly evolving world of generative media, text to video AI benchmarks serve as the North Star for developers and enterprise users alike. By early 2026, the criteria for "high performance" had moved beyond simple pixel clarity. Today, benchmarks focus on "temporal physics": the ability of a model to maintain consistent gravity, lighting, and object permanence across a video clip. According to reports from the-decoder.com, the release of Runway Gen-4.5 marked a turning point where AI-generated video became virtually indistinguishable from captured footage in standardized blind tests.

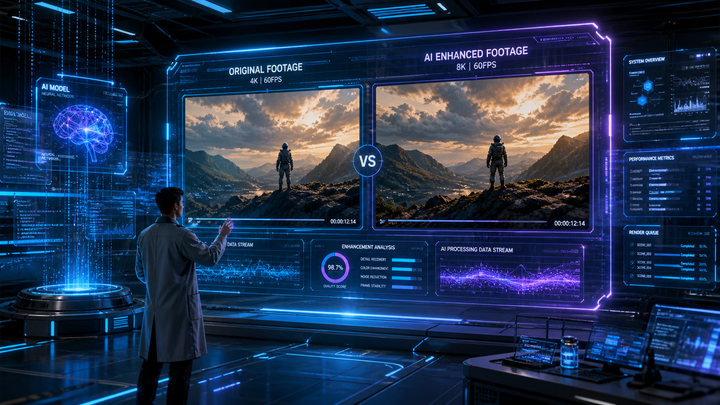

The 2026 rankings are not just about aesthetic beauty; they are about technical throughput. The MLCommons organization recently released the MLPerf Inference v6.0 benchmark results, which provide a granular look at how different AI models perform on various hardware configurations. These results are critical for studios looking to scale production, as they measure "latency-to-first-frame" and "total generation time," ensuring that the text to video AI benchmarks reflect real-world utility rather than just cherry-picked marketing demos.
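As a rough illustration of the two timing metrics named above, the sketch below measures "latency-to-first-frame" and "total generation time" around any generator that yields frames one at a time. The `fake_generate` function is a hypothetical stand-in for a real model client, and the sketch does not reproduce the MLPerf measurement procedure.

```python
import time
from typing import Callable, Iterator, Tuple


def measure_generation(
    generate: Callable[[str], Iterator[bytes]], prompt: str
) -> Tuple[float, float, int]:
    """Return (latency_to_first_frame_s, total_generation_time_s, frame_count)."""
    start = time.perf_counter()
    first_frame_at = None
    frames = 0
    for _frame in generate(prompt):
        if first_frame_at is None:
            # Timestamp the moment the first frame becomes available.
            first_frame_at = time.perf_counter()
        frames += 1
    end = time.perf_counter()
    if first_frame_at is None:
        raise ValueError("generator produced no frames")
    return first_frame_at - start, end - start, frames


def fake_generate(prompt: str, n_frames: int = 5, delay_s: float = 0.01) -> Iterator[bytes]:
    """Hypothetical stand-in for a streaming video model; yields dummy frames."""
    for i in range(n_frames):
        time.sleep(delay_s)  # simulate per-frame compute
        yield f"{prompt}:{i}".encode()


if __name__ == "__main__":
    ttff, total, frames = measure_generation(fake_generate, "a glass falls and shatters")
    print(f"first frame after {ttff:.3f}s, {frames} frames in {total:.3f}s")
```

In practice the same wrapper could be pointed at a real streaming client. For a model that returns the whole clip at once, the two numbers would be nearly identical.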

How to Evaluate Video AI Performance in 2026

- Identify the core use case, such as cinematic production, social media content, or real-time simulation.

- Consult the latest MLPerf Inference v6.0 data to understand the hardware efficiency of the model.

- Review prompt adherence scores, which measure how accurately the AI follows complex, multi-sentence instructions.

- Analyze temporal consistency ratings to ensure the video does not "morph" or lose detail between frames.

- Test API latency, particularly for infrastructure-heavy models like Seedance 2.0 on the fal platform.
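One way to combine the checklist above into a single comparison is a weighted score per use case. The sketch below assumes each metric has already been normalized to a 0 to 1 scale where higher is better. The model names, scores, and weights are placeholders for illustration, not real benchmark data.

```python
from typing import Dict, List


def weighted_score(metrics: Dict[str, float], weights: Dict[str, float]) -> float:
    """Combine normalized metric scores (0 to 1, higher is better) using use-case weights."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(metrics[name] * w for name, w in weights.items()) / total_weight


def rank(candidates: Dict[str, Dict[str, float]], weights: Dict[str, float]) -> List[str]:
    """Return candidate names ordered from best to worst for the given weights."""
    return sorted(candidates, key=lambda n: weighted_score(candidates[n], weights), reverse=True)


# Placeholder scores for two hypothetical models; not real benchmark results.
candidates = {
    "model_a": {"prompt_adherence": 0.9, "temporal_consistency": 0.8,
                "hardware_efficiency": 0.5, "api_latency": 0.4},
    "model_b": {"prompt_adherence": 0.7, "temporal_consistency": 0.6,
                "hardware_efficiency": 0.9, "api_latency": 0.9},
}

# Hypothetical weightings for two of the use cases listed above.
cinematic = {"prompt_adherence": 0.4, "temporal_consistency": 0.4,
             "hardware_efficiency": 0.1, "api_latency": 0.1}
realtime = {"prompt_adherence": 0.1, "temporal_consistency": 0.2,
            "hardware_efficiency": 0.3, "api_latency": 0.4}

if __name__ == "__main__":
    print("cinematic:", rank(candidates, cinematic))
    print("real-time:", rank(candidates, realtime))
```

With these placeholder numbers the ordering flips between the two use cases, which is the point of starting from the use case rather than a single leaderboard position.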

The Rise of Runway Gen-4.5: A New Industry Standard

One of the most significant developments in the 2026 text to video AI benchmarks is the rise of Runway. According to CNBC, Runway rolled out its Gen-4.5 model in late 2025, and by the second quarter of 2026 it had solidified its position at the top of the rankings. This model was specifically designed to beat the previous benchmarks set by industry giants like Google and OpenAI. The Gen-4.5 architecture utilizes a proprietary "Motion Engine" that allows for more complex camera movements, such as 360-degree pans and intricate tracking shots, which were previously stumbling blocks for generative AI.

The performance of Runway Gen-4.5 is particularly notable in its "zero-shot" capabilities, meaning how it handles prompts it has never seen before. In many benchmark tests built around such prompts, Runway's ability to interpret nuanced emotional cues and atmospheric lighting has placed it ahead of the curve. Industry analysts note that while OpenAI and Google remain formidable, Runway’s singular focus on video-first architecture has given it a specialized edge in the 2026 text to video AI benchmarks.

Key Features of Runway Gen-4.5

The Gen-4.5 model introduces "Deep Temporal Awareness," a feature that allows the model to "plan" the end of a 10-second clip at the same time it generates the first second. This prevents the common "drift" seen in older models. Furthermore, according to the latest performance rankings, its integration with professional editing suites has made it the preferred choice for VFX houses that require frame-perfect accuracy.
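To make "drift" concrete, the sketch below computes two crude pixel-level proxies over a clip: the average change between consecutive frames, and the change between the first and last frame. This is an illustrative assumption, not Runway's method or any official benchmark metric. Frames are represented as flat lists of pixel intensities to keep the example dependency-free.

```python
from typing import List, Sequence

Frame = Sequence[float]  # flat list of pixel intensities in [0, 1]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Mean absolute per-pixel difference between two equally sized frames."""
    if len(a) != len(b) or len(a) == 0:
        raise ValueError("frames must be non-empty and the same size")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def consecutive_change(frames: List[Frame]) -> float:
    """Average change between neighbouring frames (a rough flicker proxy)."""
    if len(frames) < 2:
        raise ValueError("need at least two frames")
    pairs = zip(frames, frames[1:])
    return sum(mean_abs_diff(a, b) for a, b in pairs) / (len(frames) - 1)


def first_to_last_change(frames: List[Frame]) -> float:
    """Change between the first and last frame (a rough drift proxy)."""
    if len(frames) < 2:
        raise ValueError("need at least two frames")
    return mean_abs_diff(frames[0], frames[-1])


if __name__ == "__main__":
    stable = [[0.5, 0.5]] * 4
    drifting = [[0.5, 0.5], [0.6, 0.6], [0.7, 0.7], [0.8, 0.8]]
    print(first_to_last_change(stable), first_to_last_change(drifting))
```

Raw pixel differences also rise when the scene legitimately moves, so a proxy like this is only meaningful on static or slow shots. A more robust comparison would look at the identity of objects rather than raw pixels.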

Seedance 2.0 and the Infrastructure Revolution

While models like Runway focus on the end-user experience, Seedance 2.0 has taken a different approach by focusing on the underlying infrastructure. As reported by markets.businessinsider.com in April 2026, the Seedance 2.0 API went live on the fal platform, expanding access to next-generation AI video generation infrastructure. This move is significant for the 2026 text to video AI benchmarks because it democratizes high-end video generation, allowing smaller developers to achieve results that were previously only possible for large corporations.

Seedance 2.0 excels in "High-Throughput Generation," a metric that measures how many minutes of high-quality video can be produced per hour of compute time. In the 2026 rankings, Seedance 2.0 has become the benchmark for API reliability and scalability. By offloading the heavy lifting to the fal platform’s optimized clusters, Seedance allows for near-instantaneous iterations, which is a vital metric for agile creative workflows.
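The throughput metric described above reduces to a simple ratio. The sketch below is a minimal helper, with the function name chosen here for illustration, that converts seconds of generated video and seconds of compute into minutes of video per compute-hour.

```python
def video_minutes_per_compute_hour(video_seconds: float, compute_seconds: float) -> float:
    """Minutes of generated video produced per hour of compute time."""
    if compute_seconds <= 0:
        raise ValueError("compute_seconds must be positive")
    video_minutes = video_seconds / 60.0
    compute_hours = compute_seconds / 3600.0
    return video_minutes / compute_hours


if __name__ == "__main__":
    # Example: 90 seconds of video produced using 1800 seconds (0.5 h) of compute.
    print(video_minutes_per_compute_hour(90, 1800))  # 3.0
```

The example values are arbitrary and only demonstrate the unit conversion.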

| Model Name | Benchmark Rank | Primary Strength | Availability |
|---|---|---|---|
| Runway Gen-4.5 | #1 (Visual Quality) | Temporal Consistency & Physics | Public/Enterprise |
| Seedance 2.0 | #1 (Infrastructure) | API Speed & Scalability | fal API |
| NVIDIA Nemotron 3 | #1 (Efficiency) | Multimodal Reasoning | Open Model |
| Google/OpenAI (2026) | Top 5 | Generalist Integration | Restricted/Beta |

NVIDIA Nemotron 3 and Multimodal Reasoning Benchmarks

A new category in the 2026 text to video AI benchmarks is "Multimodal Agent Reasoning." This category looks not only at the video output but also at how well the AI understands the world it is creating. NVIDIA’s Nemotron 3 Nano Omni, released in April 2026, has set the gold standard here. According to NVIDIA Developer, this model powers multimodal agent reasoning in a single, efficient open model. This means the AI can "reason" through a script, understanding that if a character drops a glass, it must shatter in the next frame based on physical laws.

The efficiency of Nemotron 3 is a major highlight of the 2026 performance rankings. Unlike the massive, power-hungry models of the past, the "Nano" designation indicates that this model is designed for high efficiency without sacrificing reasoning power. In the MLPerf Inference v6.0 tests, NVIDIA hardware running Nemotron models showed a 40% increase in energy efficiency compared to the previous year's standards, making it the most sustainable choice for large-scale video generation tasks.
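A percentage gain in efficiency does not translate into the same percentage drop in energy use. The sketch below works through that arithmetic, assuming "energy efficiency" means output per unit of energy. That definition is an assumption here, since the figure above does not state one.

```python
def energy_reduction_from_efficiency_gain(gain: float) -> float:
    """Fractional drop in energy per unit of output for a given efficiency gain.

    gain=0.40 means 40% more output per unit of energy (assumed definition).
    """
    if gain <= -1.0:
        raise ValueError("gain must be greater than -1")
    return 1.0 - 1.0 / (1.0 + gain)


if __name__ == "__main__":
    reduction = energy_reduction_from_efficiency_gain(0.40)
    print(f"{reduction:.1%} less energy per clip")  # 28.6% less energy per clip
```

Under that assumption, a 40% efficiency increase corresponds to roughly 28.6% less energy for the same amount of generated video, not 40% less.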

The Importance of Open Models in Benchmarking

NVIDIA’s commitment to open models like Nemotron 3 Nano Omni is shifting the benchmarking landscape. When a model is "open," the community can verify its performance independently, leading to more transparent and trustworthy rankings. In 2026, transparency has become a key metric for enterprise adoption, as companies want to ensure the AI they use is not just a "black box" but a verifiable tool with consistent output quality.

MLPerf Inference v6.0: The Technical Foundation

To truly understand the 2026 text to video AI benchmarks, one must look at the MLPerf Inference v6.0 results. Released by MLCommons in April 2026, these results are the industry’s most rigorous technical evaluations. They test models across a variety of hardware, from massive data centers to edge devices. The v6.0 release specifically introduced new testing protocols for generative video, acknowledging that video inference requires significantly different optimization than text or static image generation.

MLPerf results are generally treated as a useful proxy for real-world user experience. For instance, models that score high on "Streamed Inference" in the MLPerf v6.0 benchmarks are much more likely to support real-time interactive video applications. This technical data is what allows developers to choose between a model like Runway for high-end film and a model like Nemotron 3 for interactive, AI-driven gaming environments.
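A quick way to judge whether a model can plausibly support real-time playback is a real-time factor: generation speed divided by playback speed. The sketch below is an assumed proxy for illustration, not the definition of the "Streamed Inference" score.

```python
def real_time_factor(frames_generated: int, generation_seconds: float, playback_fps: float) -> float:
    """Ratio of generation speed to playback speed; 1.0 or above keeps up with playback."""
    if generation_seconds <= 0 or playback_fps <= 0:
        raise ValueError("generation_seconds and playback_fps must be positive")
    generation_fps = frames_generated / generation_seconds
    return generation_fps / playback_fps


if __name__ == "__main__":
    # Example: 240 frames generated in 8 seconds, played back at 24 fps.
    print(real_time_factor(240, 8.0, 24.0))   # 1.25, fast enough to stream
    # Example: 120 frames generated in 10 seconds, played back at 24 fps.
    print(real_time_factor(120, 10.0, 24.0))  # 0.5, would need buffering
```

A factor below 1.0 means playback would outrun generation, so an interactive application would have to buffer or lower the frame rate.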

Future Outlook: Beyond the 2026 Rankings

As we look toward the latter half of 2026, the text to video AI benchmarks are expected to evolve even further. We are seeing the beginning of "Long-Form Consistency" benchmarks, which will measure the AI's ability to generate coherent 30-minute episodes rather than just 10-second clips. With the current trajectory of Runway and Seedance, the gap between AI-generated content and traditional cinematography is closing faster than most experts predicted in early 2025.

Furthermore, the integration of "Reasoning Models" into video generation pipelines suggests that the next generation of benchmarks will evaluate "Narrative Logic." It will no longer be enough for a video to look real; it will have to make sense narratively. As NVIDIA continues to push the boundaries of multimodal reasoning, the 2027 benchmarks will likely prioritize story-driven metrics alongside visual fidelity.

Which AI model currently leads the 2026 text to video benchmarks?

Runway Gen-4.5 is currently ranked as the leader in 2026, specifically excelling in temporal consistency and cinematic visual quality, surpassing the latest models from Google and OpenAI.

What is the significance of the MLPerf Inference v6.0 results?

The MLPerf Inference v6.0 results provide standardized, hardware-level performance data, allowing developers to compare the efficiency and latency of different AI video models in a controlled environment.

How does Seedance 2.0 compare to other video AI models?

Seedance 2.0 is highly regarded for its infrastructure and API accessibility via the fal platform, making it a top choice for developers who need scalable, high-speed video generation capabilities.

What is multimodal reasoning in the context of video AI?

Multimodal reasoning, featured in models like NVIDIA’s Nemotron 3 Nano Omni, refers to the AI's ability to understand and process multiple types of data (text, audio, video) simultaneously to create more logically consistent and realistic scenes.

Are there any open-source models in the 2026 video AI rankings?

Yes, NVIDIA’s Nemotron 3 Nano Omni is a prominent open model that has performed exceptionally well in the 2026 efficiency and reasoning benchmarks, offering a transparent alternative to proprietary models.
