Mango AI Text to Video Tool: 2026 Review & Full Guide

The Mango AI text-to-video tool is a generative media platform, released in early 2026, that turns written prompts into high-definition video. Built on Meta's proprietary "Mango" model, it streamlines the production of marketing videos, educational content, and social media clips through a simple text interface.

Beyond text prompts, Mango AI also converts static images into video. Its "talking photo" capability animates still portraits, and its high-fidelity motion synthesis adds realistic movement to generated scenes. Developed as part of a broader push into visual AI, the platform is aimed squarely at rapid content creation in 2026.

- ✓ Seamlessly converts text prompts into HD video clips using the 2026 Mango architecture.

- ✓ Features a specialized "Talking AI" tool to animate static images with realistic lip-syncing.

- ✓ Powered by Meta's latest AI models, specifically designed for high temporal consistency (stable detail from frame to frame).

- ✓ Offers a user-friendly interface suitable for both professional creators and beginners.

- ✓ Integrated with real-time rendering capabilities for instant visual feedback.

Understanding the Evolution of the Mango AI Text to Video Tool

In 2026, the Mango AI text-to-video tool has noticeably changed the landscape of digital content. Meta first teased the underlying model in late 2025 under the codename "Mango", and the tool has since launched as a versatile suite for visual storytelling. According to a December 2025 report in the WSJ, the model was developed to bridge the gap between static image generation and fluid, cinematic video production.

The core technology behind Mango AI is not just about moving images; it is about understanding context. Unlike earlier video generators, the 2026 Mango AI model accounts for physics and lighting so that generated videos look natural. The timing is favorable: according to data from openPR, the broader text analytics market is projected to reach US$35.9 billion by 2033. Mango AI aims to lead this growth by turning written information into visual communication.

How to Use the Mango AI Text to Video Tool: Step-by-Step

- Access the Dashboard: Log in to the Mango AI portal and select the "Text to Video" module from the primary navigation menu.

- Enter Your Prompt: Type a detailed description of the scene you wish to create in the text input field. For better results, include details about lighting, camera movement, and subject actions.

- Select Aspect Ratio and Style: Choose between cinematic (16:9), vertical (9:16), or square formats, and select a visual style such as "Hyper-realistic," "3D Animation," or "Sketch."

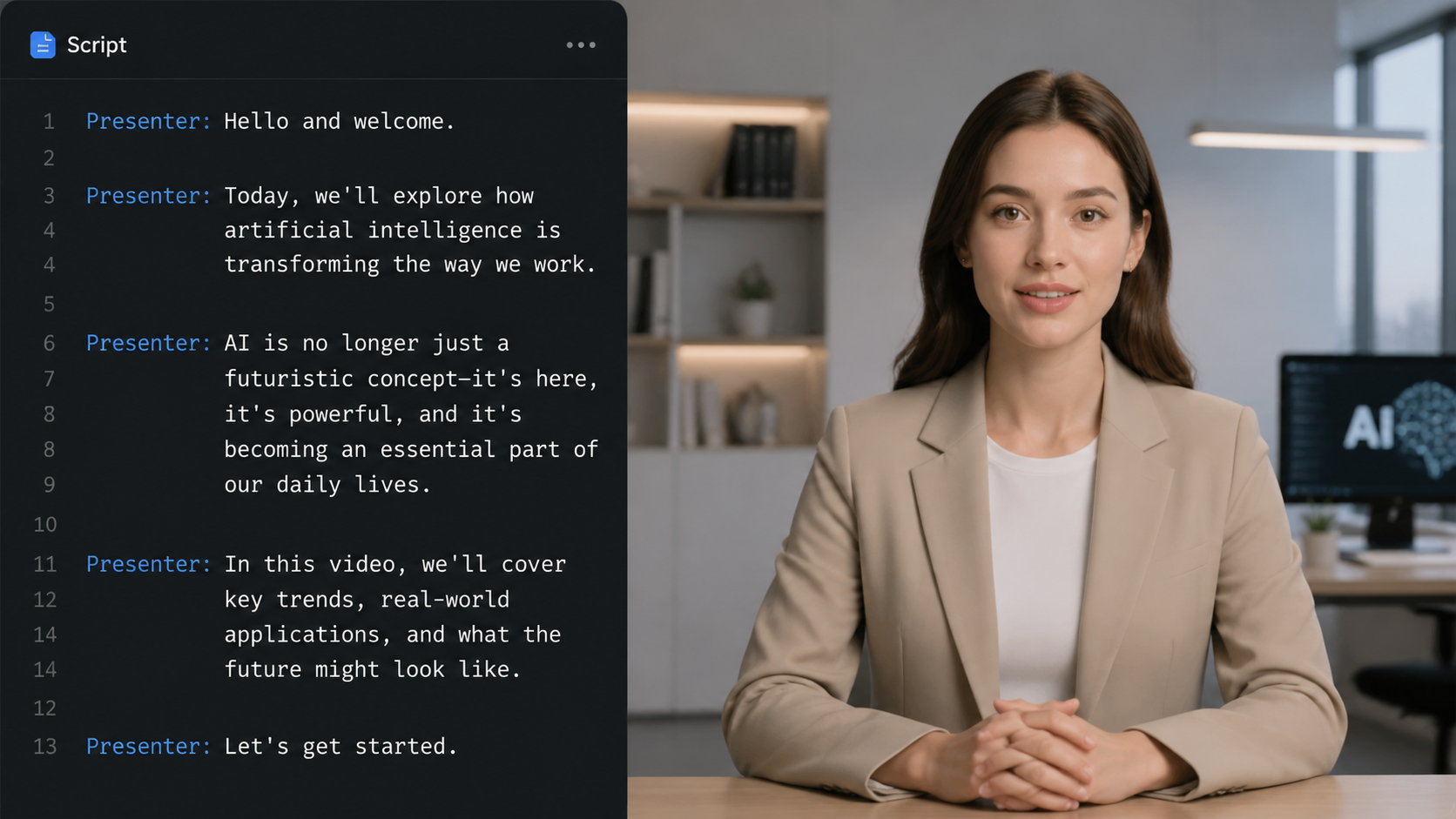

- Configure AI Avatars (Optional): If your video requires a narrator, use the "Talking AI" feature to upload a static image or select a pre-set avatar to deliver your script.

- Generate and Refine: Click the "Generate" button. Once the preview is ready, use the timeline editor to make minor adjustments to pacing or text overlays, then export (in up to 4K resolution on the Pro tier).
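The guide does not document any programmatic interface, so the following is only a hypothetical Python sketch of how one might assemble a detailed prompt (subject action, lighting, camera movement) plus the aspect-ratio and style choices before pasting the result into the text field. The `VideoPrompt` class, its field names, and the output wording are illustrative assumptions, not part of Mango AI; only the option names come from the steps above.

```python
from dataclasses import dataclass

# Options named in the steps above; "square" is mapped to the usual 1:1 ratio.
ASPECT_RATIOS = {"cinematic": "16:9", "vertical": "9:16", "square": "1:1"}
STYLES = {"Hyper-realistic", "3D Animation", "Sketch"}


@dataclass
class VideoPrompt:
    """Hypothetical container for the prompt details the guide recommends."""
    subject_action: str
    lighting: str
    camera_movement: str
    aspect: str = "cinematic"
    style: str = "Hyper-realistic"

    def render(self) -> str:
        # Reject options the guide does not list before building any text.
        if self.aspect not in ASPECT_RATIOS:
            raise ValueError(f"unknown aspect: {self.aspect}")
        if self.style not in STYLES:
            raise ValueError(f"unknown style: {self.style}")
        # Subject first, then lighting and camera, as the guide suggests.
        return (
            f"{self.subject_action}. Lighting: {self.lighting}. "
            f"Camera: {self.camera_movement}. "
            f"Style: {self.style}. Aspect ratio: {ASPECT_RATIOS[self.aspect]}."
        )


prompt = VideoPrompt(
    subject_action="A barista pours latte art into a ceramic cup",
    lighting="warm morning light through a window",
    camera_movement="slow push-in from a low angle",
    aspect="vertical",
)
print(prompt.render())
```

Keeping each element in its own field makes it easy to change one detail, such as the camera move, while holding the rest constant, which suits testing several variations of the same scene.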

Core Features of the 2026 Mango AI Suite

The Mango AI text-to-video tool is more than a simple converter; it works as a full AI-driven production suite. One of its most popular features, first rolled out in mid-2025 and refined in 2026, is the Talking AI tool. It brings static images to life, making the pictured subject speak any text input with natural facial expressions and synchronized lip movements. This has proven especially valuable for corporate training and personalized marketing.

Furthermore, the integration of Meta's "Mango" model keeps the video output highly consistent over time. In the past, AI-generated videos often suffered from "morphing" or inconsistent textures between frames. The 2026 Mango AI engine addresses this with a reference-based video synthesis approach. As noted by WebWire in January 2026, this approach makes it practical to visualize complex ideas that were previously too expensive or time-consuming to film.
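The article does not say how Mango measures temporal consistency. As a rough, hypothetical illustration of what "morphing" looks like in numbers, the Python sketch below computes the mean absolute pixel change between consecutive frames and flags unusually large jumps. Frames are assumed to be flattened grayscale lists with values in [0, 1], and the 0.2 threshold is an arbitrary choice; this is a generic heuristic, not Mango AI's method.

```python
from typing import List, Sequence

Frame = Sequence[float]  # assumed: flattened grayscale pixels in [0, 1]


def frame_deltas(frames: List[Frame]) -> List[float]:
    """Mean absolute per-pixel change between each pair of consecutive frames."""
    deltas = []
    for prev, curr in zip(frames, frames[1:]):
        if len(prev) != len(curr):
            raise ValueError("frames must have the same number of pixels")
        deltas.append(sum(abs(a - b) for a, b in zip(prev, curr)) / len(curr))
    return deltas


def flag_flicker(frames: List[Frame], threshold: float = 0.2) -> List[int]:
    """Indices i where the change from frame i to frame i+1 exceeds the threshold."""
    return [i for i, d in enumerate(frame_deltas(frames)) if d > threshold]


frames = [
    [0.0, 0.0, 0.0, 0.0],
    [0.05, 0.05, 0.05, 0.05],  # gentle, consistent change
    [0.9, 0.9, 0.1, 0.1],      # abrupt texture jump ("morphing")
    [0.92, 0.9, 0.1, 0.1],
]
print(flag_flicker(frames))  # [1]
```

A temporally consistent clip shows small, smooth deltas; a single spike like the one at index 1 is the kind of frame-to-frame jump viewers perceive as flicker or morphing.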

Advanced Capabilities and Technical Specifications

| Feature | Mango AI Standard | Mango AI Pro (2026) |
|---|---|---|
| Maximum Resolution | 1080p HD | 4K Ultra HD |
| Max Video Length | 60 Seconds | 10 Minutes |
| Talking Photo Support | Basic (3 Avatars) | Unlimited + Custom Uploads |
| Processing Speed | Standard Queue | Priority Turbo Rendering |
| Commercial Rights | Limited | Full Commercial Usage |
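To plan which tier a project needs, the two numeric rows of the table can be encoded as data. This is a minimal, hypothetical Python sketch: the `Tier` type and `lowest_fitting_tier` function are illustrative names, resolution is expressed as vertical pixels (1080 for 1080p, 2160 for 4K UHD), and the avatar, speed, and licensing rows are deliberately left out.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tier:
    name: str
    max_height_px: int  # vertical resolution: 1080 for 1080p HD, 2160 for 4K UHD
    max_seconds: int    # maximum video length


# Limits transcribed from the comparison table, listed from Standard to Pro.
TIERS = (
    Tier("Mango AI Standard", max_height_px=1080, max_seconds=60),
    Tier("Mango AI Pro (2026)", max_height_px=2160, max_seconds=10 * 60),
)


def lowest_fitting_tier(height_px: int, seconds: int) -> Optional[str]:
    """Name of the first tier whose limits cover the planned export, else None."""
    for tier in TIERS:
        if height_px <= tier.max_height_px and seconds <= tier.max_seconds:
            return tier.name
    return None  # the plan exceeds even the Pro limits


print(lowest_fitting_tier(1080, 45))   # Mango AI Standard
print(lowest_fitting_tier(2160, 45))   # Mango AI Pro (2026)
print(lowest_fitting_tier(1080, 900))  # None (15 minutes is over the 10-minute cap)
```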

The Impact of Mango AI on Content Strategy

Incorporating the Mango AI text-to-video tool into a content strategy lets brands scale their output without a proportional increase in budget. According to the Blockchain Council, AI models like Mango are also beginning to be integrated into decentralized platforms, which could give creators more control over their intellectual property and the metadata associated with their videos. For many brands, AI-assisted creation is becoming a necessity rather than a luxury for staying competitive in 2026.

The tool's ability to interpret nuanced text prompts means that creative directors can prototype entire ad campaigns in minutes. Instead of waiting weeks for storyboards and test shoots, teams can use the tool's "Reference to Video" capability. As reported by openPR in January 2026, users upload a single reference image or style guide, which the AI then uses as a visual anchor for the generated video, keeping branding consistent across all frames.

Key Benefits for Digital Marketers

- Cost Reduction: Eliminates the need for expensive sets, lighting crews, and actors for basic informational content.

- Rapid Iteration: Test multiple visual hooks for social media ads by simply changing a few words in the prompt.

- Global Reach: Automatically translate and lip-sync videos into over 40 languages using the built-in localization engine.

Technical Breakdown: How the Mango Model Works

The Mango AI text-to-video tool runs on a diffusion-based transformer architecture. The model was designed to handle high-dimensional data, which lets it render complex textures such as water, hair, and fabric with a high degree of realism. In early 2026, Meta's development team emphasized that the "Mango" model was trained on a diverse dataset that prioritizes ethical sourcing and high-quality cinematography, distinguishing it from earlier open-source models.
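The article names the architecture but not its details. For readers new to diffusion models, the sketch below shows the generic forward (noising) step from the standard DDPM formulation in plain Python: a clean sample is blended with Gaussian noise according to a schedule. During training, a denoising network (in a diffusion transformer, a transformer) learns to predict that noise so generation can run the process in reverse. The linear schedule (1e-4 to 0.02 over 1000 steps) is the common textbook default, assumed here; nothing in this sketch is taken from Meta's Mango model.

```python
import math
import random
from typing import List


def linear_alpha_bars(steps: int, beta_start: float = 1e-4, beta_end: float = 0.02) -> List[float]:
    """Cumulative products a_bar_t = prod over s <= t of (1 - beta_s), linear beta schedule."""
    if steps < 2:
        raise ValueError("need at least two diffusion steps")
    alpha_bars, running = [], 1.0
    for t in range(steps):
        beta = beta_start + (beta_end - beta_start) * t / (steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(x0: List[float], t: int, alpha_bars: List[float], rng: random.Random) -> List[float]:
    """Sample x_t from q(x_t | x_0) = N(sqrt(a_bar_t) * x_0, (1 - a_bar_t) * I)."""
    a_bar = alpha_bars[t]
    return [math.sqrt(a_bar) * x + math.sqrt(1.0 - a_bar) * rng.gauss(0.0, 1.0) for x in x0]


alpha_bars = linear_alpha_bars(1000)
rng = random.Random(0)
clean = [0.5, -0.25, 1.0]  # stand-in for a tiny patch of video latent values
slightly_noisy = add_noise(clean, 0, alpha_bars, rng)  # t=0: almost unchanged
mostly_noise = add_noise(clean, 999, alpha_bars, rng)  # t=999: close to pure noise
```

Because a_bar_t shrinks toward zero as t grows, early steps barely perturb the sample while the final steps leave almost nothing of the original signal; the learned denoiser undoes this one step at a time.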

One of the standout technical achievements of this tool is its "Motion Brush" feature. Users can highlight specific areas of a static image and describe the desired motion, such as "clouds drifting" or "steam rising from a coffee cup." This granular control is what sets Mango AI apart from "one-click" generators that offer little creative influence. Combined with its reference-to-video workflow, it puts the user in the role of a director rather than a spectator.

User Experience and Interface Design

The 2026 version of the Mango AI interface has been redesigned for "flow-state" editing. The workspace is divided into a prompt zone, a real-time preview window, and an asset library. Because the tool is cloud-based, it leverages massive server-side GPU clusters, meaning users do not need a high-end computer to generate 4K video. This democratization of high-end video production is a core pillar of the Mango AI philosophy.

Future Outlook: Mango AI and the 2033 Market Projections

Looking ahead, the role of the Mango AI text-to-video tool is set to expand further. With the text analytics market expected to grow at a CAGR of 16.8% from 2026 to 2033 (Source: openPR), demand for tools that turn complex data and text into digestible video is likely to rise sharply. Mango AI is already positioning itself not just as a creative tool but as a data visualization platform.
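To put the openPR figures in perspective, a constant 16.8% annual rate compounded over the seven years from 2026 to 2033 roughly triples a market. The short Python sketch below computes that growth multiple and, assuming the US$35.9 billion 2033 projection sits on the same 2026 base, the implied 2026 market size. The implied starting value is derived arithmetic under that assumption, not a figure reported by openPR.

```python
def growth_multiple(cagr: float, years: int) -> float:
    """Factor by which a value grows at a constant annual rate over `years` years."""
    return (1.0 + cagr) ** years


def implied_start_value(end_value: float, cagr: float, years: int) -> float:
    """Work backward from a projected end value to the implied starting value."""
    return end_value / growth_multiple(cagr, years)


years = 2033 - 2026                               # 7 compounding periods
multiple = growth_multiple(0.168, years)
start = implied_start_value(35.9, 0.168, years)   # US$ billions, assumed 2026 base
print(f"{multiple:.2f}x, implied 2026 size ~US${start:.1f}B")  # 2.97x, implied 2026 size ~US$12.1B
```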

We expect to see deeper integrations with VR and AR environments later this year. Imagine a text prompt that generates not just a 2D video but a 360-degree immersive environment. Given the "Mango" model's origins within Meta's AI research labs, metaverse-ready video content seems like the logical next step for this technology. For now, the tool remains one of the most accessible and powerful text-to-video solutions available for professional use.

Frequently Asked Questions about Mango AI

Is the Mango AI text to video tool free to use?

Mango AI offers a tiered pricing model. There is a free version with limited generation credits and watermarked exports, while the Pro and Enterprise tiers provide 4K resolution, unlimited "Talking Photo" credits, and full commercial rights.

Who developed the Mango AI video model?

The core "Mango" AI model was developed by Meta, as revealed in late 2025. It has since been integrated into various creative platforms to provide high-fidelity text-to-video and talking avatar services.

Can I use my own voice for the Talking AI feature?

Yes, the 2026 update to the Mango AI tool allows users to upload their own voice recordings or use "voice cloning" technology to create a digital version of their voice for use with AI avatars.

How long does it take to generate a video?

Generating a standard 15-second clip typically takes between 1 and 3 minutes, depending on server load. The "Turbo" mode available to Pro users can reduce this to under 30 seconds.
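For planning a batch, the per-clip figures above can be turned into a quick estimate. This hypothetical Python helper assumes clips are rendered one after another (the answer does not say whether jobs run in parallel) and treats "under 30 seconds" as an upper bound with no stated lower bound; `batch_estimate` is an illustrative name.

```python
from typing import Tuple

# Per-clip figures quoted above for a standard 15-second clip.
STANDARD_RANGE_S = (60, 180)  # 1 to 3 minutes, depending on server load
TURBO_MAX_S = 30              # "under 30 seconds", used as an upper bound


def batch_estimate(clips: int, turbo: bool = False) -> Tuple[int, int]:
    """(min, max) seconds to render `clips` clips, assuming sequential rendering."""
    if turbo:
        return (0, clips * TURBO_MAX_S)  # no lower bound is given for Turbo
    low, high = STANDARD_RANGE_S
    return (clips * low, clips * high)


print(batch_estimate(10))              # (600, 1800): 10 to 30 minutes
print(batch_estimate(10, turbo=True))  # (0, 300): at most 5 minutes
```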

Is the content generated by Mango AI copyright-free?

Users on the Pro and Enterprise plans hold the commercial rights to the videos they generate. However, it is always recommended to check the latest terms of service as AI copyright laws continue to evolve in 2026.
