New AI Video Generation Models: 2026 Trends & Tools

The landscape of digital content creation has been fundamentally reshaped this year as new AI video generation models reach a level of cinematic realism previously reserved for high-budget Hollywood studios. In 2026, the industry has moved from experimental short clips to high-definition, physics-compliant video production that fits into professional workflows. These advances, led by major players such as OpenAI, Alibaba, and ByteDance, let users generate complex visual narratives from simple text prompts or static images with far better temporal consistency than earlier systems offered.

New AI video generation models are advanced machine learning systems designed to convert text, image, or video inputs into high-fidelity motion pictures. In 2026, these models use diffusion-transformer architectures to simulate real-world physics, enabling the creation of hyper-realistic clips of up to roughly two minutes for marketing, entertainment, and personal use.

- ✓ OpenAI’s Sora and Alibaba’s latest models now lead global rankings for visual fidelity and physics simulation.

- ✓ ByteDance’s Dreamina Seedance 2.0 has democratized professional video editing through direct CapCut integration.

- ✓ The 2026 market is shifting toward "Video-to-Video" refinement and real-time collaborative AI editing suites.

- ✓ Strategic acquisitions, such as Reka’s purchase of a video-generation startup, are consolidating the AI video market.

To use these new AI video generation models effectively in 2026, follow these steps to produce high-quality cinematic content:

- Select Your Model: Choose a platform based on your needs: OpenAI Sora for narrative depth, Alibaba’s model for top-ranked visual fidelity, or Dreamina for social media optimization.

- Engineer the Prompt: Define the subject, camera movement (e.g., "dolly zoom"), lighting conditions, and specific artistic style in a detailed text description.

- Set Temporal Parameters: Adjust the frame rate and duration settings; most 2026 models now support native 60fps output for smoother motion.

- Iterate with Seed Control: Use specific "seed" numbers to maintain character consistency across multiple generated clips.

- Post-Production Integration: Export your AI-generated footage into tools like CapCut or Premiere Pro for final color grading and audio syncing.
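The prompt, temporal, and seed steps above can be sketched as a small data structure. This is a minimal illustration only: `ShotRequest`, its field names, and the payload keys are hypothetical and do not correspond to the API of Sora, Dreamina, or any other platform mentioned here. It shows one way to keep the seed and subject fixed while varying camera movement between clips.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ShotRequest:
    """Hypothetical request shape; not any vendor's real API."""
    subject: str
    camera: str
    lighting: str
    style: str
    fps: int = 60          # frame rate from the "Set Temporal Parameters" step
    duration_s: int = 10   # clip length in seconds (illustrative default)
    seed: int = 1234       # fixed seed from the "Iterate with Seed Control" step

    def prompt(self) -> str:
        # Combine the four prompt elements into one text description.
        return f"{self.subject}, {self.camera}, {self.lighting}, {self.style}"

    def frame_count(self) -> int:
        # Total frames the clip would contain at the chosen settings.
        return self.fps * self.duration_s

    def payload(self) -> dict:
        # Plain dictionary that a client could serialize; keys are assumptions.
        return {
            "prompt": self.prompt(),
            "fps": self.fps,
            "duration_s": self.duration_s,
            "seed": self.seed,
        }


def storyboard(base: ShotRequest, cameras: list[str]) -> list[dict]:
    # Vary only the camera movement; subject, style, and seed stay constant.
    return [replace(base, camera=c).payload() for c in cameras]


base = ShotRequest(
    subject="a lighthouse keeper climbing a spiral staircase",
    camera="dolly zoom",
    lighting="golden hour",
    style="35mm film",
)
shots = storyboard(base, ["dolly zoom", "slow pan", "overhead crane shot"])
```

Keeping the request immutable and deriving each shot with `replace` makes it harder to change the seed by accident between clips. Whether a given platform actually exposes a seed, frame rate, or duration setting depends on that platform.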

The Global Race: Leading New AI Video Generation Models of 2026

The first half of 2026 has witnessed a dramatic shift in the global hierarchy of artificial intelligence. According to the Wall Street Journal, Alibaba’s new AI video-generation model has officially topped global rankings as of April 2026, surpassing previous Western benchmarks in terms of prompt adherence and texture realism. This model is particularly noted for its ability to handle complex fluid dynamics and human skin textures, which were historically difficult for generative systems to replicate accurately.

While Alibaba claims the top spot in technical rankings, OpenAI’s Sora remains the industry standard for narrative storytelling. Since its widespread release in February 2026, Sora has introduced features that allow users to generate video from text with a deep understanding of physical properties. According to OpenAI, the model does not just "predict pixels" but simulates a physical world, allowing for realistic interactions between objects, such as a brush leaving actual paint on a canvas or a character’s hair reacting to wind speed.

The competitive landscape is further complicated by the rapid rise of ByteDance’s ecosystem. In February 2026, Reuters reported that ByteDance’s new AI video model went viral globally, marking a "second DeepSeek moment" for the Chinese tech sector. This surge in popularity is driven by the model’s accessibility and its ability to generate culturally nuanced content that resonates with a global audience, proving that the race for AI video supremacy is no longer a one-company show.

Comparison of Top 2026 AI Video Platforms

To help creators choose the right tool for their specific projects, the following table compares the current market leaders based on the latest 2026 technical reviews and releases.

| Model Name | Developer | Key Strength | Primary Integration |
|---|---|---|---|
| Sora | OpenAI | Narrative Consistency & Physics | OpenAI API / Web |
| Dreamina Seedance 2.0 | ByteDance | Social Media & Mobile Editing | CapCut |
| Alibaba Video Gen | Alibaba Cloud | Global Ranking Leader (Fidelity) | Enterprise Cloud Tools |
| Reka Video | Reka AI | Multi-modal Reasoning | Reka Playground |

Integration and Accessibility: From High-End Labs to Your Pocket

One of the most significant trends in 2026 is the movement of new AI video generation models from specialized research environments into everyday consumer applications. ByteDance has been at the forefront of this movement. In March 2026, TechCrunch reported that ByteDance’s latest model, Dreamina Seedance 2.0, was officially integrated into CapCut. This move allows millions of creators to generate and edit AI video directly within their mobile editing workflow, lowering the barrier to entry for professional-grade visual effects.

This integration signifies a shift from "stand-alone generation" to "assisted creation." Instead of just generating a random clip, users can now use AI to extend existing footage, change the weather in a scene, or swap outfits on a character with a single tap. The Seedance 2.0 architecture is specifically optimized for mobile processors, ensuring that high-resolution video can be rendered without requiring massive server-side compute power for every minor adjustment.

Furthermore, the accessibility of these tools has sparked a new era of "prosumer" content. According to CNET’s April 2026 review of the best AI video generators, the focus has shifted from "can it make a video?" to "how well does it integrate with my existing tools?" The 2026 winners are those that offer plugins for traditional software, allowing for a hybrid workflow where AI handles the heavy lifting of visual synthesis while humans retain creative control over pacing and emotion.

Market Consolidation and Strategic Shifts

The 2026 AI market is also characterized by rapid consolidation as larger firms acquire specialized startups to bolster their video capabilities. A notable example occurred in May 2026 when The Information reported that Reka, an AI video-app developer, acquired a video-generating startup to enhance its multi-modal capabilities. Acquisitions like this suggest that the "foundational" layer of AI video is becoming a winner-takes-most market, where only a few companies possess the compute and data resources to train the most advanced models.

The industry is also seeing unusual cross-company collaborations. In early May 2026, news broke that Elon Musk’s xAI was providing server resources to Anthropic. While xAI has focused heavily on real-time data integration via X (formerly Twitter), this move suggests a strategic realignment in which compute power is traded as a primary currency to accelerate the development of next-generation video and reasoning models.

These strategic shifts are not just about corporate power; they directly affect the features available to users. When companies like Reka acquire startups, they often integrate niche technologies, such as 3D spatial awareness or advanced lip-syncing algorithms, into their broader AI video generation models. For the end user, this means more robust tools that can handle increasingly specific and difficult creative prompts.

Technical Breakthroughs in 2026 Video Synthesis

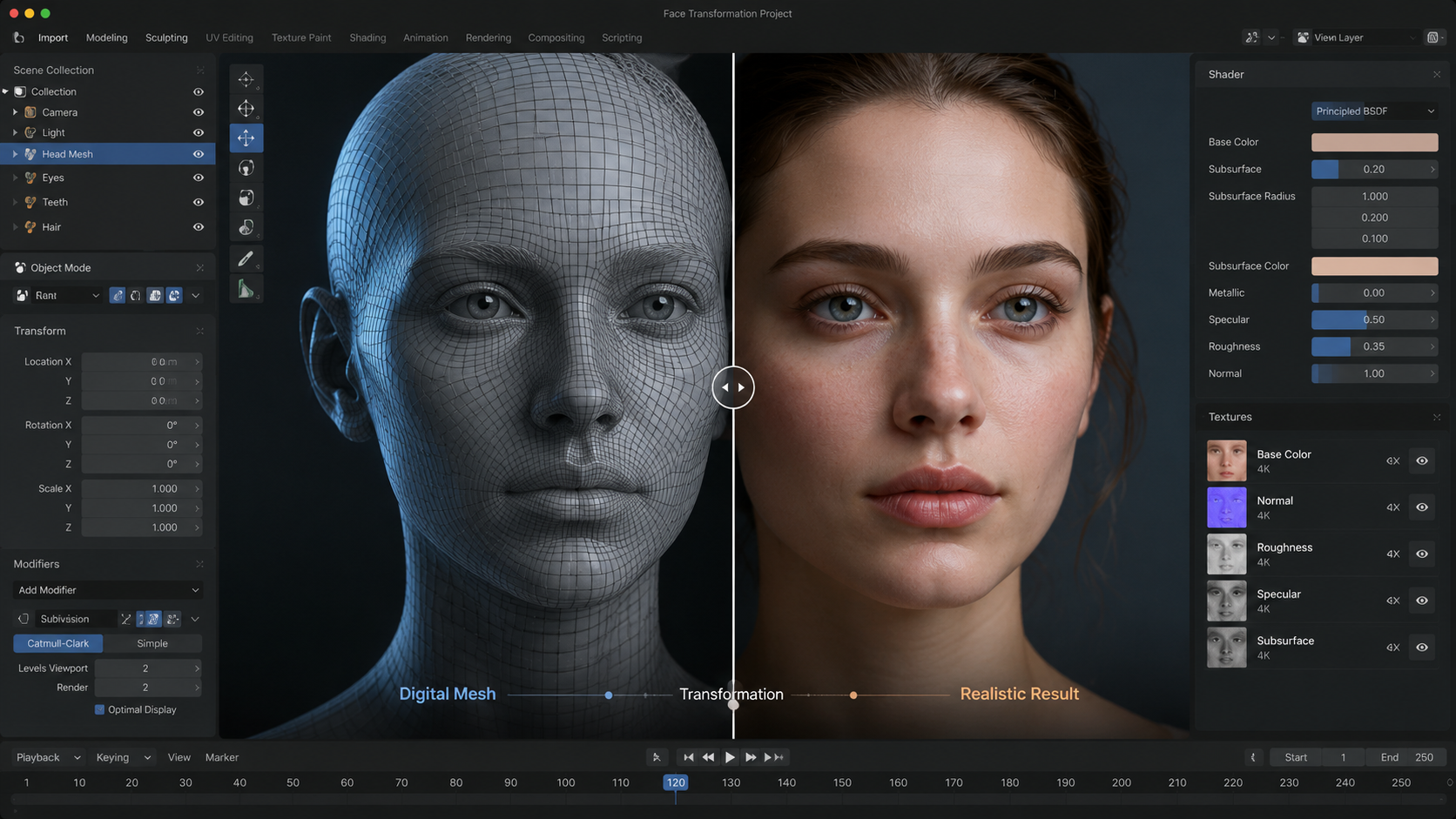

The new AI video generation models of 2026 have solved many of the "hallucination" issues that plagued earlier versions. Two years ago, AI videos often featured warping limbs or inconsistent backgrounds. Today, the introduction of "Temporal Anchor Points" and "Spatial Transformer Blocks" has allowed models to maintain a "memory" of every object in a scene. If a character walks behind a tree in a Sora-generated video, the model remembers exactly what the character looked like when they reappear on the other side.

Another breakthrough is the implementation of native 4K generative output. In previous years, AI video was often generated at low resolutions and then "upscaled," which led to a loss of detail. The 2026 models, particularly the high-ranking Alibaba model, generate at high resolutions natively. This results in sharper edges, more realistic lighting reflections, and a level of detail in textures—such as the weave of a fabric or the pores on a face—that is indistinguishable from traditional cinematography.
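A short calculation illustrates why upscaling loses detail compared with native generation. It rests on two assumptions not stated in the article: that "4K" means UHD (3840×2160) and that the earlier low-resolution source was 1080p (1920×1080). The function names are illustrative.

```python
UHD_4K = (3840, 2160)   # assumed meaning of "4K"
FULL_HD = (1920, 1080)  # assumed source resolution for older, upscaled output


def pixels(resolution: tuple[int, int]) -> int:
    # Number of pixels in a single frame.
    width, height = resolution
    return width * height


def interpolated_fraction(source: tuple[int, int], target: tuple[int, int]) -> float:
    # Share of target pixels that have no directly generated source pixel
    # and must therefore be estimated by the upscaler.
    return 1 - pixels(source) / pixels(target)


native = pixels(UHD_4K)                              # 8,294,400 pixels per frame
upscaled_source = pixels(FULL_HD)                    # 2,073,600 pixels per frame
estimated = interpolated_fraction(FULL_HD, UHD_4K)   # 0.75
```

Under these assumptions, three out of every four pixels in an upscaled 4K frame are estimated from neighbors rather than generated directly, which is where fine texture tends to be lost.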

Finally, the "Video-to-Video" (V2V) revolution has reached its peak. This technology allows users to take a low-quality video filmed on a smartphone and use a generative model to "re-skin" it into cinematic footage. You can film a person walking in a backyard and, using new AI video generation models, transform the setting into a futuristic Martian colony while keeping the person's exact movements and expressions intact. This has major implications for indie filmmakers, who can now achieve "blockbuster" visuals on a shoestring budget.

The Future of Video Content: What’s Next for 2027?

As we look toward the end of 2026, the trajectory of new AI video generation models suggests a move toward full-length feature film generation. While current models excel at clips ranging from 60 to 120 seconds, recent research papers indicate that "Long-Context Video Transformers" are on the horizon. These would allow for the generation of 10-20 minute segments that maintain consistent plot points and character arcs without human intervention.

We are also seeing the beginning of "Interactive AI Video." Imagine a streaming service where the viewer can influence the direction of the scene in real-time. Because the video is being generated on the fly by powerful AI models, the story can branch in infinite directions based on user input. This convergence of gaming and traditional film is expected to be the "next big thing" as we transition into 2027.

In conclusion, 2026 is the year AI video became "real." Whether it is Alibaba’s top-ranked technical marvels, Sora’s physical simulations, or the viral accessibility of ByteDance’s Dreamina, the tools available today have permanently lowered the barrier to high-end visual storytelling. For creators, the challenge is no longer "how" to make a video, but "what" story is worth telling with these limitless digital tools.

What are the best new AI video generation models in 2026?

As of 2026, the leading models include OpenAI’s Sora for narrative physics, Alibaba’s top-ranked generation model for visual fidelity, and ByteDance’s Dreamina Seedance 2.0 for social media integration. Each offers unique strengths depending on whether you need cinematic realism or ease of use.

Is OpenAI Sora available to the public in 2026?

Yes, Sora was widely released in February 2026. It has since been updated to support longer durations and better physics simulation, making it a primary tool for professional creators and marketing agencies.

How does Dreamina Seedance 2.0 work with CapCut?

Dreamina Seedance 2.0 is natively integrated into the CapCut interface. Users can generate video clips from text prompts directly on the timeline or use AI to enhance and extend existing footage without leaving the app.

Which AI video model is currently ranked #1?

According to reports from April 2026, Alibaba’s new AI video-generation model has taken the top spot in global rankings for its superior ability to render complex textures and adhere to difficult technical prompts.

Can AI video models in 2026 create consistent characters?

Yes, 2026 models utilize advanced seed control and temporal anchoring. This allows creators to maintain the same character appearance, clothing, and features across multiple different video clips, which is essential for storytelling.
