AI Video Generation Model Landscape: 2026 Guide

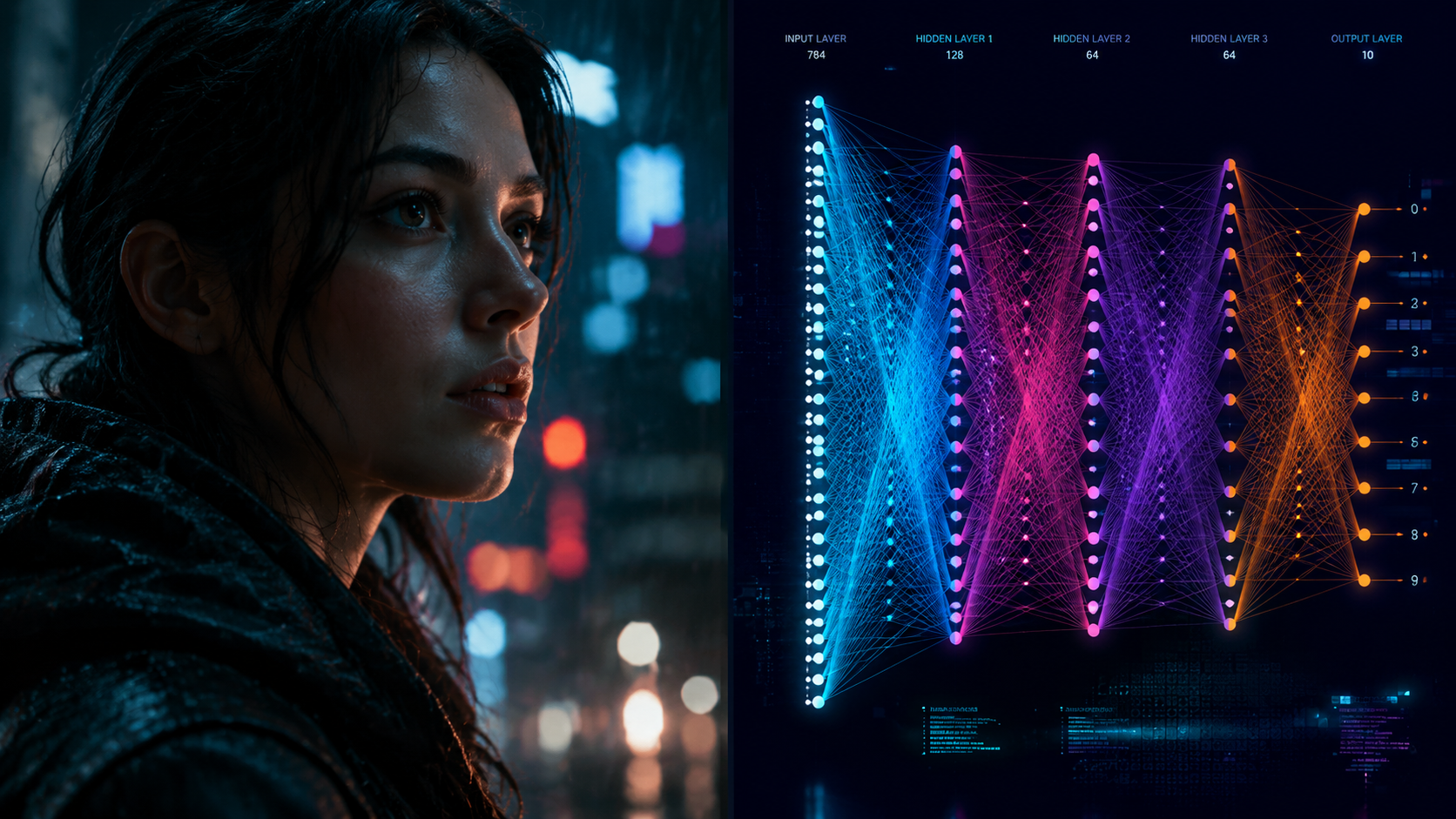

The AI video generation model landscape in 2026 is defined by a shift toward cinematic consistency, long-form coherence, and the integration of video synthesis into enterprise SaaS workflows. As of early 2026, the market has matured beyond simple prompt-to-video clips into a phase where models like Google’s Veo 3.1 and ByteDance’s Seedance 2.0 offer granular character control and professional-grade resolution. Understanding this landscape requires looking at the convergence of big tech infrastructure and the specialized needs of creative industries.

The AI video generation model landscape is the ecosystem of generative AI technologies designed to produce high-fidelity video from text, image, or video inputs. In 2026, it is characterized by "cinematic consistency" models that maintain character and environment stability across multiple scenes, led primarily by advancements from Google, ByteDance, and OpenAI.

- ✓ Google’s Veo 3.1 has established a new benchmark for character consistency and cinematic-quality output as of January 2026.

- ✓ ByteDance’s Seedance 2.0 has emerged as a powerful developer-centric model, though it faces ongoing copyright scrutiny.

- ✓ The industry is shifting from standalone "novelty" generators to integrated AI tools within existing SaaS ecosystems.

- ✓ "Model fatigue" is being addressed by OpenAI and others through a pivot toward more powerful, reasoning-capable video architectures.

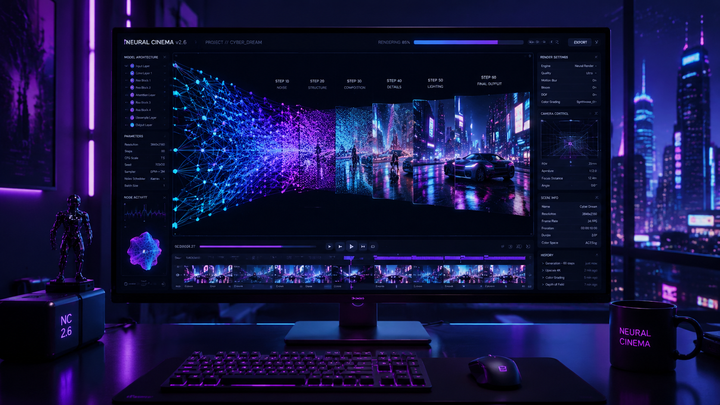

To navigate the current AI video generation model landscape effectively, follow these steps to select and implement the right model for your project:

- Define Consistency Requirements: Determine if your project requires "Temporal Consistency" (smooth motion) or "Character Consistency" (the same face across different shots).

- Select a Model Tier: Choose between high-end cinematic models (Google Veo 3.1), developer-focused APIs (Seedance 2.0), or enterprise-integrated tools within your existing SaaS stack.

- Verify Copyright Compliance: Review the training data transparency of the model, especially following the 2026 legal scrutiny surrounding ByteDance’s latest releases.

- Execute Multi-Modal Prompting: Use a combination of text descriptions and reference images to guide the model’s spatial reasoning.

- Iterate via Seed Control: Use specific seed numbers to maintain the same visual style while adjusting camera angles or lighting.
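The last two steps can be sketched in code. The example below is a minimal illustration, not any provider's actual API: the `VideoRequest` class, its field names, and the sample values are all hypothetical. It shows the idea of holding the prompt, reference image, and seed fixed while varying only the camera angle. Whether a fixed seed actually preserves visual style depends on the model and provider.

```python
from dataclasses import dataclass, asdict, replace
from typing import Optional


@dataclass(frozen=True)
class VideoRequest:
    """Hypothetical request payload; field names are illustrative only."""
    prompt: str
    reference_image: Optional[str]  # path or URL of a reference image (assumed field)
    seed: int
    camera: str = "eye-level medium shot"
    lighting: str = "soft daylight"


def variations(base: VideoRequest, cameras: list[str]) -> list[dict]:
    """Keep prompt, reference image, and seed fixed; vary only the camera."""
    return [asdict(replace(base, camera=c)) for c in cameras]


base = VideoRequest(
    prompt="A courier cycles through a rainy neon-lit street",
    reference_image="courier_front.png",
    seed=1234,
)
payloads = variations(base, ["low-angle tracking shot", "overhead drone shot"])
for p in payloads:
    print(p["seed"], p["camera"])
```

Each payload would then be submitted to whichever model tier was selected in the earlier steps; the submission call is omitted here because it differs by provider.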

The Evolution of the AI Video Generation Model Landscape

The trajectory of video synthesis has moved at a breakneck pace over the last twelve months. In the early months of 2026, we have seen a pivot away from the short, "dreamlike" clips of previous years toward structured, narrative-driven content. According to Big Technology, the initial appeal of simple video AI is beginning to wane among general consumers, prompting a strategic shift among major players like OpenAI. These companies are now focusing on "Reasoning Models" that understand the physics of the world rather than just predicting pixel movements.

This evolution is most evident in how models handle human movement and environmental persistence. In 2025, Google’s Veo 3 pushed the boundaries of what was possible in AI technology, but the January 2026 release of Veo 3.1 represented what many experts call a "paradigm shift." By solving the problem of character consistency, the landscape has moved from a tool for social media memes to a legitimate asset for pre-visualization in Hollywood and high-end advertising.

The Rise of Cinematic Consistency

The core challenge of the AI video generation model landscape has always been "jitter": the loss of detail or identity between frames. The 2026 generation of models uses sophisticated latent space anchoring to ensure that a character’s features do not morph during a 60-second clip. This breakthrough has allowed for the creation of short films that look as though they were shot on a physical set with a consistent cast.
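One way to make identity drift concrete is to measure it. The sketch below is not the latent space anchoring technique itself, which the models implement internally. It is a simple check that assumes you already have one embedding vector per frame for the character (for example, from a face-embedding model of your choice). The function names and the 0.9 threshold are illustrative assumptions.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def identity_drift(embeddings: list[list[float]], threshold: float = 0.9) -> list[int]:
    """Return indices of frames whose similarity to the first frame falls below threshold."""
    anchor = embeddings[0]
    return [
        i
        for i, emb in enumerate(embeddings[1:], start=1)
        if cosine(anchor, emb) < threshold
    ]


# Toy 3-dimensional embeddings; real embeddings would have hundreds of dimensions.
frames = [
    [1.0, 0.0, 0.0],
    [0.98, 0.1, 0.0],  # close to the anchor frame
    [0.5, 0.8, 0.2],   # character has visibly drifted
]
drifted = identity_drift(frames)
print(drifted)
```

Frames flagged this way are candidates for regeneration or manual review.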

Integration into SaaS Ecosystems

Another major trend in 2026 is the "invisible" nature of AI video. Rather than visiting a specific website to generate a video, users are finding these capabilities embedded directly into their project management and design tools. SaaS companies are embracing AI integration to streamline workflows, allowing marketing teams to generate promotional videos directly from a product brief without leaving their primary workspace.

Major Players and Model Comparisons in 2026

The competitive field in 2026 is dominated by three distinct archetypes: the Tech Giants (Google, ByteDance), the Specialized Innovators (OpenAI), and the Open-Source/Developer-centric platforms. Each brings a different philosophy to the AI video generation model landscape. For instance, ByteDance’s Seedance 2.0, released in March 2026, focuses on developer flexibility and high-speed rendering, making it a favorite for app developers looking to integrate video features.

However, this capability brings significant legal challenges. ByteDance’s debut of Seedance 2.0 was met with immediate copyright scrutiny. Reports from ecns.cn indicate that the model's ability to replicate specific artistic styles, along with its potential use of copyrighted training data, has sparked a new wave of debate over intellectual property in the generative era. This highlights a critical divide in the landscape: models that prioritize "creative freedom" versus those that prioritize "legal safety."

| Model Name | Developer | Release Date | Key Strength | Primary Use Case |
|---|---|---|---|---|
| Veo 3.1 | Google | January 2026 | Character Consistency | Cinematic & Narrative Film |
| Seedance 2.0 | ByteDance | March 2026 | Developer API/Speed | App Integration & Viral Content |
| Sora (2026 Update) | OpenAI | April 2026 | Physical Reasoning | Complex Action Sequences |
| Veo 3 | Google | September 2025 | High Fidelity | General Content Creation |

Technological Breakthroughs: Veo 3.1 and Seedance 2.0

The launch of Google’s Veo 3.1 in January 2026 marked a significant milestone in the AI video generation model landscape. Unlike its predecessors, Veo 3.1 utilizes a proprietary "Cinematic Anchor" technology. According to a report by FinancialContent, this model represents a paradigm shift in how AI handles character consistency, allowing creators to keep the same protagonist across different environments and lighting conditions without the "hallucinations" common in 2024 or 2025 models.

On the other side of the spectrum, ByteDance’s Seedance 2.0 has become the gold standard for developers. As detailed in the SitePoint Developer Guide, Seedance 2.0 offers a more robust API that allows for real-time video manipulation. This model is particularly effective at "Style Transfer," where a user can take a low-quality mobile video and transform it into a high-end animation or a hyper-realistic cinematic scene in seconds. However, the viral nature of these videos has led to the aforementioned copyright concerns, as the model is exceptionally good at mimicking the "look and feel" of existing cinematic franchises.

Hardware Requirements and Cloud Rendering

The computational demands of these 2026 models have necessitated a shift in how they are accessed. While 2025 saw some local execution of smaller models, the 2026 landscape is almost entirely cloud-based because of the very large parameter counts required for temporal consistency. High-end rendering now requires specialized H200 or B200 GPU clusters, which are typically managed by the model providers themselves under a "Rendering-as-a-Service" (RaaS) model.

The Role of Prompt Engineering in 2026

Prompting has evolved from simple sentences to "Director’s Notes." In the current AI video generation model landscape, professional users supply structured data, including camera focal lengths, lighting temperatures in Kelvin, and specific movement vectors, to guide the AI. This level of control is what separates the "prosumer" tools from the enterprise-grade models like Veo 3.1.
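A structured "Director’s Notes" prompt might look like the sketch below. This is an assumption-laden illustration rather than a documented schema: the `DirectorsNotes` class, its field names, and the validation ranges are all hypothetical, and a real provider would define its own format.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class DirectorsNotes:
    """Hypothetical structured prompt; no real model is known to use this exact schema."""
    subject: str
    focal_length_mm: float
    color_temperature_k: int                    # lighting temperature in Kelvin
    camera_motion: tuple[float, float, float]   # assumed (x, y, z) movement vector per second
    duration_s: float

    def validate(self) -> None:
        # Illustrative sanity ranges, not limits imposed by any provider.
        if not 8 <= self.focal_length_mm <= 800:
            raise ValueError("focal_length_mm outside the assumed 8-800 mm range")
        if not 1000 <= self.color_temperature_k <= 12000:
            raise ValueError("color_temperature_k outside the assumed 1000-12000 K range")
        if self.duration_s <= 0:
            raise ValueError("duration_s must be positive")

    def to_json(self) -> str:
        """Validate, then serialize to JSON for submission alongside a text prompt."""
        self.validate()
        return json.dumps(asdict(self), indent=2)


notes = DirectorsNotes(
    subject="A chef plating dessert in a dim restaurant kitchen",
    focal_length_mm=35,
    color_temperature_k=3200,          # warm tungsten-style lighting
    camera_motion=(0.0, 0.0, -0.5),    # slow push-in toward the subject
    duration_s=8,
)
print(notes.to_json())
```

Validating the values before submission catches typos such as a Kelvin value entered in the focal-length field, which is easier than diagnosing an odd-looking render afterward.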

Ethical Considerations and Copyright Scrutiny

As we move through 2026, the ethical dimensions of the AI video generation model landscape have become as important as the technical ones. The Washington City Paper recently highlighted the proliferation of "NSFW" AI generators, noting that while mainstream models from Google and OpenAI have strict safety layers, a secondary market of unregulated models is flourishing. This has forced the industry to consider more rigorous watermarking and provenance tracking.

Copyright remains the most contentious issue. According to ecns.cn, ByteDance’s new model faced immediate backlash from artist guilds who claim the model’s "viral debut" was only possible through the unauthorized ingestion of modern cinematic works. This has led to the development of "Clean-Room Models," which are trained exclusively on licensed or public-domain footage. For enterprise users, choosing a model from the 2026 landscape often comes down to the indemnity clauses provided by the developer.

The Impact of "Watermark 2.0"

In response to these concerns, most major models in the 2026 landscape now include "invisible" metadata. This technology, often referred to as Watermark 2.0, survives video compression and re-encoding, allowing platforms to identify AI-generated content instantly. This is a critical feature for news organizations and social media platforms looking to combat deepfakes and misinformation.

The Shift Toward "Ethical Data" Sourcing

Many companies are now advertising their "Ethical Score" alongside their model performance. This score reflects the transparency of their training sets. In 2026, a model's position in the AI video generation model landscape is increasingly tied to its reputation for respecting intellectual property, with some companies even offering royalty-sharing programs for artists whose work is used in training fine-tuned versions of the models.

Future Outlook: The Road Beyond 2026

Looking toward the end of 2026 and into 2027, the AI video generation model landscape is expected to merge with interactive media. We are already seeing the first "Infinite Video" models that allow users to navigate through a generated scene in real time, effectively blurring the line between a video and a video game. OpenAI’s shift toward powerful reasoning models suggests that the next generation of video AI will not just "draw" a scene but "simulate" it, modeling the weight of objects and the fluidity of liquids with far greater physical accuracy.

Furthermore, the "waning appeal" of standalone video AI mentioned by Ranjan Roy suggests that the future lies in personalization. Instead of a one-size-fits-all model, we are moving toward "Personal Video Models" (PVMs) that are fine-tuned on a specific user's brand identity, color palette, and past successful content. This level of hyper-customization will likely be the defining feature of the 2027 landscape.

The Convergence of VR and AI Video

As VR and AR hardware becomes more mainstream in 2026, video generation models are being optimized for stereoscopic 3D output. This allows for the instant generation of 360-degree environments, which is revolutionizing training, education, and immersive storytelling. The landscape is no longer flat; it is becoming a three-dimensional space that users can inhabit.

Conclusion on the 2026 Landscape

The AI video generation model landscape of 2026 is a testament to the power of rapid technological iteration. From the cinematic heights of Google Veo 3.1 to the developer-friendly flexibility of Seedance 2.0, the tools available today can produce content at a level of realism that was out of reach just a few years ago. As the industry grapples with copyright and the shift toward integrated SaaS solutions, the focus remains on creating tools that are not just powerful, but consistent, ethical, and deeply integrated into the creative workflow.

What is the best AI video generator for character consistency in 2026?

Google's Veo 3.1 is currently considered the industry leader for character consistency. Released in January 2026, it introduced a paradigm shift in cinematic AI by allowing characters to maintain their visual identity across multiple scenes and lighting conditions.

How has ByteDance's Seedance 2.0 changed the landscape?

Seedance 2.0 has provided a high-speed, developer-friendly API that makes it easier to integrate AI video into third-party applications. However, it has also brought copyright scrutiny to the forefront of the AI video generation model landscape due to its ability to mimic viral styles.

Are AI video models being integrated into SaaS tools?

Yes, in 2026, most major SaaS companies have embraced AI integration. This allows users to generate and edit video content directly within their existing marketing, design, and project management platforms rather than using standalone AI tools.

Why is OpenAI shifting its focus in the video space?

According to industry analysts, OpenAI is shifting focus toward more powerful reasoning models as the initial novelty of simple video generation fades. These models aim to understand physical laws and complex narratives rather than just generating short, visual clips.

What are the legal risks of using AI video models in 2026?

The primary legal risks involve copyright infringement and intellectual property rights. Many newer models, such as ByteDance's 2026 releases, have faced scrutiny over their training data, making it essential for users to check the "clean-room" status of a model before commercial use.
