AI Image Test 2026: How to Spot Fakes and Grade Generators

As we reach the midpoint of 2026, the boundary between synthetic media and reality has become almost imperceptible. Conducting a rigorous AI image test is no longer just a hobby for tech enthusiasts; it is a critical skill for consumers, journalists, and digital platforms alike. With generative tools now built into phones such as the Honor 600 series and AI fashion models quietly appearing on major e-commerce platforms like eBay, the sheer volume of AI-generated content has reached an all-time high. Understanding how these images are created, tested, and detected is essential for maintaining digital literacy in an era where seeing is no longer necessarily believing.

An AI image test is a standardized evaluation used to determine the quality, realism, and safety of synthetic visuals. In 2026, these tests focus on identifying anatomical errors, checking for hidden toxic text in memes, and using specialized detection software to differentiate between human-captured photography and generative outputs from models like Midjourney or DALL-E.

- ✓ Modern AI image tests now include safety benchmarks for hidden toxic text and harmful embedded metadata.

- ✓ Physical artifacts like "floating" hair and mismatched earrings remain key indicators of AI generation.

- ✓ Major retailers are now testing AI-generated fashion models to replace traditional photography.

- ✓ New mobile processors are capable of instant Image-to-Video 2.0 transformations, blurring the lines of traditional media.

The Evolution of the AI Image Test in 2026

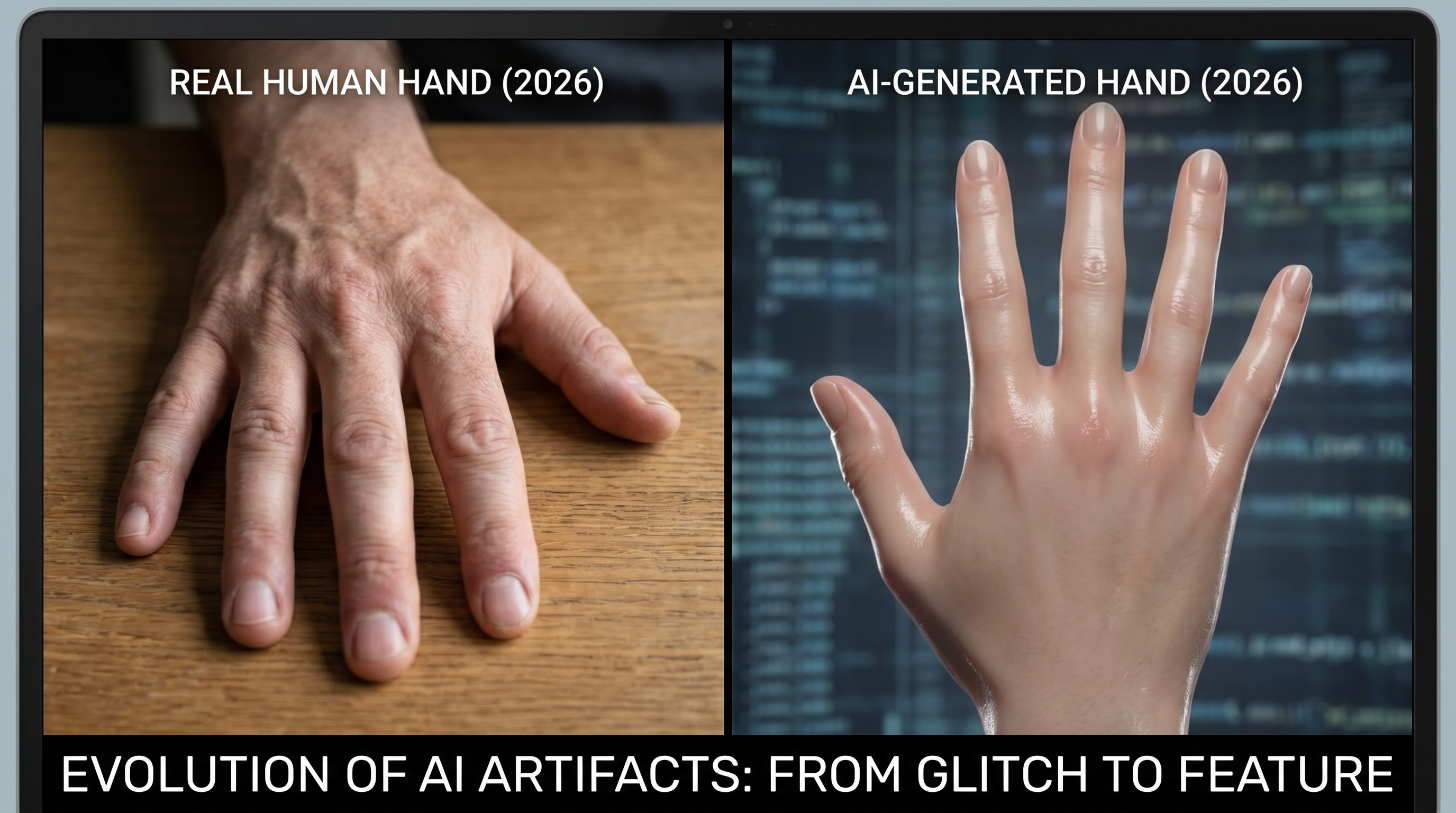

The criteria for a successful AI image test have shifted dramatically over the last twelve months. In early 2025, we were still looking for basic errors like merged fingers or nonsensical backgrounds. However, as we move through 2026, the focus has pivoted toward "vibe checks" and microscopic consistency. According to a recent 2026 review by The Washington Post, today’s top-tier AIs are now capable of complex tasks such as giving a bald celebrity a full head of realistic hair or seamlessly deleting an ex from a photo while reconstructing the background with perfect lighting. Despite these leaps, even the most advanced models occasionally fail when asked to render precise text or specific anatomical details under high-stress prompts.

Furthermore, the industry has introduced new safety-centric testing protocols. As reported by MSN in April 2026, AI image generators are now subjected to a new safety test specifically designed to detect hidden toxic text within memes. This is a response to the rise of "stealth prompts," in which harmful messages are embedded in an image in a form that is invisible to the naked eye but readable by other algorithms. This new layer of testing is meant to ensure that generative tools are not just creative, but also compliant with global safety standards.
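The report does not describe how the safety test works internally, so the following is only a minimal sketch of one well-known way faint text can be surfaced: contrast stretching. It assumes the hidden text differs from its background by only a few brightness levels. The function name `stretch_contrast` and the sample `patch` are hypothetical, chosen for illustration.

```python
def stretch_contrast(gray):
    """Linearly rescale a grayscale grid so the darkest pixel maps to 0
    and the brightest maps to 255."""
    flat = [p for row in gray for p in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        # A perfectly flat patch has nothing to reveal.
        return [[0 for _ in row] for row in gray]
    scale = 255 / (hi - lo)
    return [[round((p - lo) * scale) for p in row] for row in gray]


# Hypothetical 3x5 patch: background at 200, faint "H" shape at 202.
# A two-level difference is effectively invisible to a human viewer.
patch = [
    [200, 202, 200, 202, 200],
    [200, 202, 202, 202, 200],
    [200, 202, 200, 202, 200],
]

revealed = stretch_contrast(patch)
for row in revealed:
    print("".join("#" if p > 127 else "." for p in row))
```

On a real image, sensor noise and compression artifacts would be amplified along with any hidden text, so a stretched image still needs OCR or a human look before drawing conclusions.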

The Rise of AI Fashion Models and Commercial Testing

One of the most significant shifts in the commercial application of AI has been seen in the retail sector. According to Value Added Resource, eBay has been quietly testing AI fashion models, inserting altered images into seller listings. This move has sparked a debate over consent and transparency, as many sellers found their products displayed on synthetic humans without prior notification. This real-world AI image test highlights the tension between cost-cutting automation and the need for authentic representation in e-commerce.

7 Clues to Pass Your Own AI Image Test

Even as technology improves, human intuition remains a powerful tool. In March 2026, PCMag identified seven specific clues that help users spot fake images immediately. While the "six-finger" trope is becoming a thing of the past, newer models still struggle with the physics of light and shadow. When performing your own AI image test, look closely at the reflection in a subject's eyes. AI often fails to create a consistent light source across both pupils, resulting in mismatched catchlights that look uncanny upon close inspection.

Another major tell is the "texture smoothing" effect. Many AI models tend to over-process skin, leading to a porcelain-like finish that lacks natural pores, fine hairs, or minor blemishes. According to PCMag, checking the edges where a subject meets the background is also vital. In AI-generated images, there is often a slight "halo" or a blurring effect where the algorithm struggled to define the boundary between the foreground and the environment. These subtle imperfections are the breadcrumbs that lead to the truth in 2026.

Testing for Consistency in Complex Scenes

Advanced testing now involves checking for logical consistency in complex environments. For example, if an image features a person wearing glasses, check the reflection in the lenses. Does it match the scene behind the viewer? Often, an AI will generate a beautiful landscape in the background but fail to reflect that same landscape accurately in metallic or glass surfaces within the frame. This lack of global environmental awareness is a primary failure point in current generative models.

The Role of Detection Tools and Their Efficacy

As the complexity of generative art grows, so does the market for AI detection tools. However, their reliability is a subject of intense scrutiny. In February 2026, The New York Times evaluated several of these tools, asking the question: "Do they really work?" The results were mixed. While some detectors are excellent at identifying patterns in the noise distribution of an image (patterns invisible to humans), they often return false positives for heavily edited traditional photographs. This makes the AI image test a collaborative effort between software and human oversight.
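One way to picture that collaboration is a simple triage rule that sends ambiguous cases to a person. This is a sketch under stated assumptions, not how any particular detector works: the score is assumed to be a probability-like value between 0 and 1, and the thresholds `low` and `high`, the `heavily_edited` flag, and the `Verdict` type are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Verdict:
    label: str
    needs_human_review: bool


def triage(detector_score: float, heavily_edited: bool,
           low: float = 0.2, high: float = 0.8) -> Verdict:
    """Route a detector score to a label, deferring to a human when unsure.

    detector_score: assumed to be in [0, 1], higher means "more likely AI".
    heavily_edited: True if the photo is known to be heavily retouched,
                    a case where detectors are prone to false positives.
    """
    if not 0.0 <= detector_score <= 1.0:
        raise ValueError("detector_score must be between 0 and 1")
    if detector_score >= high:
        if heavily_edited:
            # High scores on retouched photos are not trustworthy alone.
            return Verdict("uncertain", True)
        return Verdict("likely synthetic", False)
    if detector_score <= low:
        return Verdict("likely authentic", False)
    return Verdict("uncertain", True)


print(triage(0.93, heavily_edited=False))
print(triage(0.93, heavily_edited=True))
print(triage(0.50, heavily_edited=False))
```

The design choice here is that software alone only settles the clear cases; everything in the middle band, and every high score on a retouched photo, goes to a human reviewer.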

| Test Category | Manual Detection Clue | AI Software Metric | Detection Success Rate (2026) |
|---|---|---|---|
| Anatomy | Check for mismatched earrings/teeth | Geometric consistency check | High |
| Lighting | Inconsistent shadows/reflections | Ray-tracing analysis | Moderate |
| Text/Memes | Garbled or hidden characters | OCR and sentiment analysis | High |
| Skin Texture | Lack of pores or micro-blemishes | Frequency domain analysis | Low (AI is improving) |

The table above illustrates that while software is highly effective at catching anatomical errors, it still struggles with the high-level artistry of skin texture, which has become a hallmark of 2026's "Hyper-Realism" update in major generators. This is why a multi-factor AI image test is necessary for any high-stakes verification process.
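To make the "frequency domain analysis" entry more concrete, here is a minimal sketch of the underlying idea: fine detail such as pores shows up as high-frequency energy, while over-smoothed skin concentrates its energy in low frequencies. It runs a naive discrete Fourier transform on a single row of grayscale values. The function names, the `cutoff_fraction` parameter, and the sample rows are illustrative assumptions, and real detectors are far more elaborate than this.

```python
import cmath
import math


def dft_magnitudes(signal):
    """Naive O(n^2) discrete Fourier transform.
    Returns magnitudes for frequency bins 0 through n // 2."""
    n = len(signal)
    mags = []
    for k in range(n // 2 + 1):
        acc = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                  for t, x in enumerate(signal))
        mags.append(abs(acc))
    return mags


def high_frequency_ratio(signal, cutoff_fraction=0.5):
    """Share of non-DC spectral energy that lies above the cutoff bin."""
    mean = sum(signal) / len(signal)
    centered = [x - mean for x in signal]
    energies = [m * m for m in dft_magnitudes(centered)[1:]]  # skip the DC bin
    total = sum(energies)
    if total == 0:
        return 0.0  # perfectly flat row: no texture at all
    cutoff = int(len(energies) * cutoff_fraction)
    return sum(energies[cutoff:]) / total


# Hypothetical 32-pixel rows of grayscale values.
smooth_row = [100 + 10 * math.sin(2 * math.pi * t / 32) for t in range(32)]
textured_row = [100 if t % 2 == 0 else 110 for t in range(32)]

print(high_frequency_ratio(smooth_row))    # close to 0
print(high_frequency_ratio(textured_row))  # close to 1
```

A low ratio on a close-up of skin is only a hint, not proof: heavy retouching, beauty filters, and aggressive compression also remove high-frequency detail from genuine photographs.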

Mobile Integration: The Honor 600 and AI Image to Video 2.0

The 2026 landscape is not just about static images; it is about the transition from stills to motion. A recent test by Tom's Guide on the Honor 600 series revealed the power of "AI Image to Video 2.0." This technology allows users to take a standard photo and, through on-device AI processing, transform it into a cinematic sequence. The reviewer noted that it felt like having "Toy Story in your pocket," as the AI accurately predicted how a still object should move in 3D space.

This advancement poses new challenges for verification. If a photo can be turned into a video instantly, the criteria for what constitutes a "photo" are being rewritten. The Honor 600's ability to generate these videos in real time shows that the processing power for high-end AI is moving away from the cloud and directly into our pockets. This decentralization makes it harder to track the provenance of an image, making independent testing tools more important than ever.

How to Conduct a Professional AI Image Test

If you are a professional looking to verify content, your AI image test should follow a structured protocol. First, use a reverse image search to see if the image has a history or if it appears to be a unique, synthesized creation. Second, inspect the file with a metadata viewer. According to research from The New York Times, many AI generators now embed "invisible watermarks" or specific metadata tags that identify them as synthetic, though these can be stripped by sophisticated actors.

Third, apply a "stress test" to the image's logic. Ask yourself: Does the clothing fabric react naturally to the wind direction shown in the background? Does the jewelry align with the anatomy of the ear or neck? If the image is a meme, use one of the new safety tools mentioned by MSN to ensure there is no hidden toxic text. By combining these steps, you can achieve a high degree of confidence in your results.
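The metadata step can be sketched in a few lines for one common format. The example below walks the chunk structure of a PNG file and collects its uncompressed `tEXt` entries, which is one place a generator may leave a tag. The helper names and the sample tag value `ExampleGenerator 1.0` are hypothetical, the sample bytes are a synthetic chunk layout rather than a displayable image, and this sketch does not read compressed text chunks, EXIF data, or invisible watermarks.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def read_png_text_chunks(data: bytes) -> dict:
    """Return {keyword: text} for every uncompressed tEXt chunk in PNG bytes."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    found = {}
    pos = len(PNG_SIGNATURE)
    # Each chunk is: 4-byte length, 4-byte type, body, 4-byte CRC.
    while pos + 8 <= len(data):
        length, ctype = struct.unpack(">I4s", data[pos:pos + 8])
        body = data[pos + 8:pos + 8 + length]
        if ctype == b"tEXt" and b"\x00" in body:
            key, _, value = body.partition(b"\x00")
            found[key.decode("latin-1")] = value.decode("latin-1")
        if ctype == b"IEND":
            break
        pos += 12 + length
    return found


def make_chunk(ctype: bytes, body: bytes) -> bytes:
    """Build one PNG chunk (used here only to create sample data)."""
    crc = zlib.crc32(ctype + body) & 0xFFFFFFFF
    return struct.pack(">I", len(body)) + ctype + body + struct.pack(">I", crc)


# Synthetic sample: header chunk, one text tag, end marker (no pixel data).
sample = (
    PNG_SIGNATURE
    + make_chunk(b"IHDR", struct.pack(">IIBBBBB", 1, 1, 8, 0, 0, 0, 0))
    + make_chunk(b"tEXt", b"Software\x00ExampleGenerator 1.0")
    + make_chunk(b"IEND", b"")
)

print(read_png_text_chunks(sample))
```

For a real file, the same function could be called on `open(path, "rb").read()`. An empty result proves nothing, since tags are easy to strip, which is why this step sits alongside reverse image search and the logic stress test rather than replacing them.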

Can AI image detectors be 100% accurate?

No, as of 2026, no detector is 100% accurate. They often struggle with "hybrid" images that combine real photography with AI-generated elements or heavily filtered photos.

What is the most common mistake in AI images in 2026?

The most common mistake currently involves "environmental logic," such as reflections in water or glass that do not match the surrounding objects or lighting sources.

How does the eBay AI fashion model test affect buyers?

It can make it difficult for buyers to judge how a garment actually fits a human body, as the AI models are often perfectly proportioned and do not show how fabric drapes in reality.

What is the new safety test for memes?

It is an algorithmic check that scans a meme's pixels for text that is hidden from the human eye but intended to slip past AI-driven content moderation or spread harmful messages.

Is Image-to-Video 2.0 available on all phones?

No, it currently requires high-end NPU (Neural Processing Unit) hardware, such as that found in the 2026 Honor 600 series and other flagship devices.

Conclusion: The Future of Visual Trust

The year 2026 has proven that the AI image test is an evolving target. As generators become more adept at mimicking the nuances of reality, our methods for testing them must become more sophisticated. Whether it is a major publication like The Washington Post finding a "clear winner" among top AIs or a consumer checking a listing on eBay, the need for transparency is paramount. By staying informed about the latest clues—from mismatched catchlights to hidden toxic text—we can navigate this synthetic landscape with confidence and clarity.
