AI Video Scene Stability Scorecards: 2026 Industry Benchmarks

AI video scene stability scorecards are standardized performance metrics used to evaluate the temporal consistency, structural integrity, and visual smoothness of videos generated by artificial intelligence. In 2026, these scorecards have become the industry gold standard for benchmarking text-to-video models, providing a quantitative framework to measure how well an AI maintains object persistence and background stability across multiple frames. As generative video technology matures, the ability to eliminate "hallucination artifacts" and flickering has become the primary differentiator between professional-grade tools and experimental prototypes.

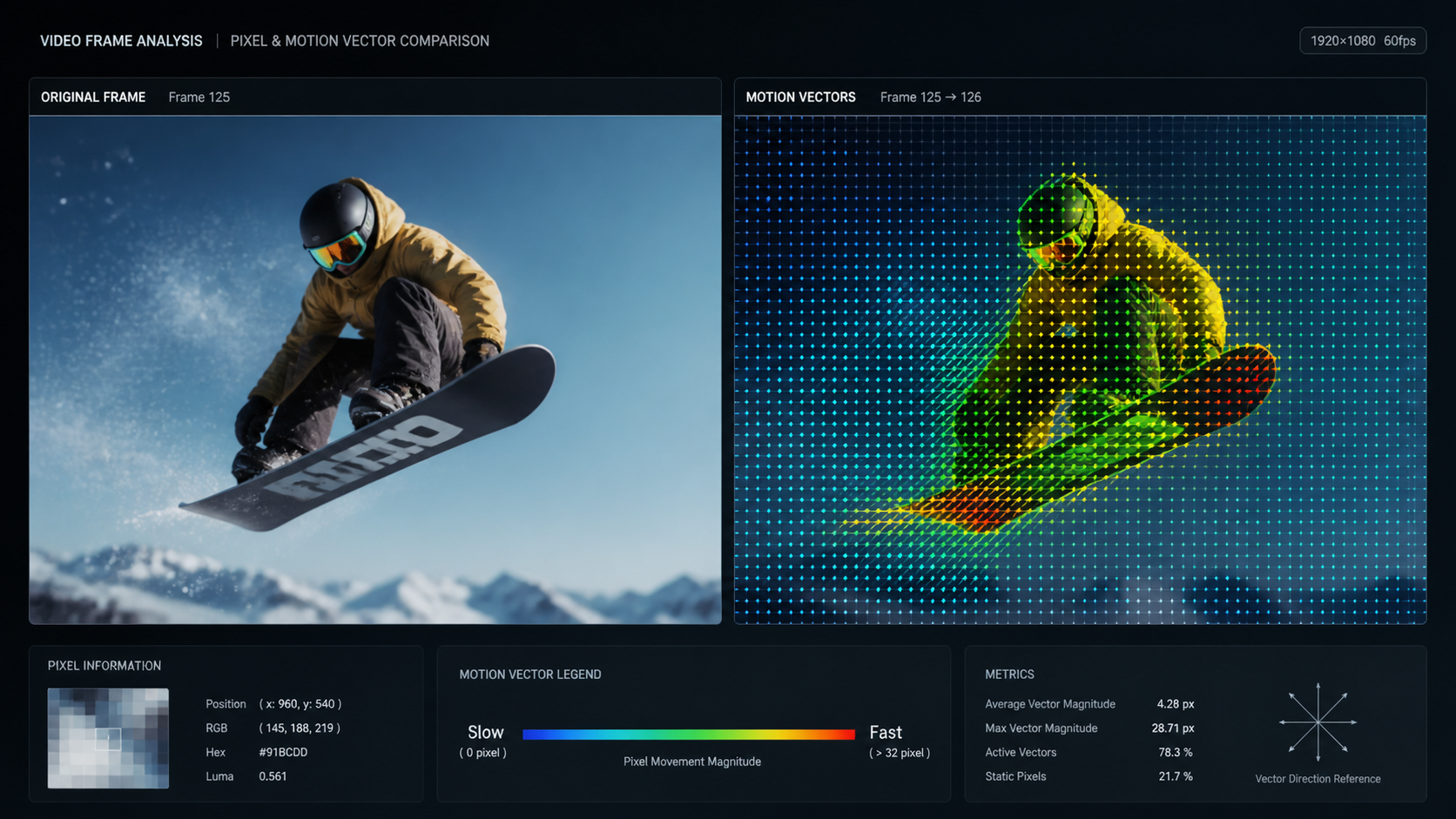

AI video scene stability scorecards are analytical tools used to quantify the physical and temporal coherence of AI-generated footage. By measuring pixel variance, motion vectors, and geometric persistence, these scorecards allow creators and developers to compare the reliability of different video models, ensuring that characters and environments remain stable throughout the duration of a clip without visual warping.

- ✓ Scene stability scorecards are now the primary metric for the 2026 Magic Hour Research benchmarks.

- ✓ High-performing models must maintain structural integrity across 120+ frames to achieve "Professional Grade" status.

- ✓ Stability metrics directly correlate with the "Believability" and "Artifact" scores used in 2026 industry awards.

- ✓ Modern scorecards evaluate motion fluidity, background locking, and character persistence as separate sub-indices.

The Evolution of AI Video Scene Stability Scorecards in 2026

As we move through the second quarter of 2026, the landscape of generative video has shifted from mere novelty to high-stakes production. The release of the "Best Text-to-Video AI 2026" benchmarks by Magic Hour Research on April 29, 2026, has highlighted a critical transition: the industry no longer prioritizes resolution alone, but rather the stability of the scene. Scene stability scorecards have evolved into complex datasets that track every pixel's trajectory to ensure that a tree in frame one remains the same tree in frame three hundred.

According to the latest Magic Hour Research, scene stability is now weighted at 40% of the total performance score for text-to-video models. This is a significant increase from previous years, reflecting the demand from film studios and marketing agencies for "locked-down" footage that does not require extensive post-production stabilization. These scorecards provide a transparent look at how different architectures handle complex physics, such as flowing water or human hair, which were previously prone to "melting" or "ghosting" artifacts.
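To make the 40% weighting concrete, here is a minimal Python sketch of a composite score. Only the 40% stability weight comes from the text above. The function name `composite_score`, the other criteria names, and the equal split of the remaining 60% are assumptions made purely for illustration, not the published Magic Hour Research formula.

```python
def composite_score(
    stability: float,
    other_scores: dict[str, float],
    stability_weight: float = 0.40,
) -> float:
    """Weighted total on a 0-100 scale.

    Stability gets `stability_weight` (40% per the article). The remaining
    weight is split equally across `other_scores`; that equal split is an
    assumption, since the article does not say how the other 60% is divided.
    """
    if not other_scores:
        raise ValueError("need at least one non-stability criterion")
    per_criterion = (1.0 - stability_weight) / len(other_scores)
    return stability_weight * stability + sum(
        per_criterion * score for score in other_scores.values()
    )


# Hypothetical sub-scores for one model.
total = composite_score(
    95.0,
    {"prompt_adherence": 80.0, "believability": 90.0, "resolution": 70.0},
)
print(round(total, 2))
```

With these made-up inputs, stability contributes 38 points (0.40 × 95) and the three other criteria contribute 48 points (0.20 × 240), so a strong stability score lifts the total well above the weaker resolution score.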

The Role of Magic Hour Research in Setting Standards

Magic Hour Research has established itself as the definitive authority in AI benchmarking. Their 2026 publications, including the "Best Talking Photo AI 2026" and "Best AI Headshot Generator 2026" awards, utilize these stability scorecards to rank models based on realism and professional utility. By isolating variables like prompt adherence and artifacting, these scorecards allow developers to see exactly where their diffusion models or transformers are failing to maintain temporal logic.

How to Read and Interpret AI Video Scene Stability Scorecards

Understanding the data within a stability scorecard is essential for any studio looking to integrate AI into their pipeline. These reports typically use a scale of 1 to 100, where a score above 90 indicates "Production Ready" stability. To properly evaluate a model using these benchmarks, follow these steps:

- Identify the Temporal Consistency Index (TCI): This is derived from frame-to-frame variance, with lower variance yielding a higher index. A high TCI means the video shows little or no flickering.

- Check the Geometric Persistence Score: This ensures that 3D objects maintain their volume and shape as the camera moves.

- Analyze the Background Lock Rating: This metric specifically looks for "drifting" in static environments, a common issue in lower-tier 2026 models.

- Review the Motion Fluidity Percentile: This compares the AI's motion vectors against real-world physics to ensure movements aren't robotic or jittery.

- Cross-reference with Prompt Adherence: Ensure that high stability isn't coming at the cost of creative accuracy.
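The scorecards do not publish the formula behind the Temporal Consistency Index, so the following Python sketch is only one plausible way to turn frame-to-frame variance into a 0 to 100 number. It assumes grayscale frames flattened into lists of intensities between 0 and 255, and the name `temporal_consistency_index` and the normalization by 255 are illustrative choices.

```python
from statistics import mean

Frame = list[float]  # flattened grayscale intensities in [0, 255] (assumed)


def temporal_consistency_index(frames: list[Frame]) -> float:
    """Hypothetical TCI: 100 means identical frames, 0 means maximal flicker.

    Computes the mean absolute pixel difference between each pair of
    consecutive frames, averages those, and rescales to a 0-100 index.
    """
    if len(frames) < 2:
        raise ValueError("need at least two frames")
    diffs = []
    for prev, curr in zip(frames, frames[1:]):
        if len(prev) != len(curr):
            raise ValueError("all frames must have the same number of pixels")
        diffs.append(mean(abs(a - b) for a, b in zip(prev, curr)))
    return 100.0 * (1.0 - mean(diffs) / 255.0)


steady = [[10.0, 20.0, 30.0]] * 3                      # no change between frames
flicker = [[0.0, 0.0], [255.0, 255.0], [0.0, 0.0]]     # full-range flicker
print(temporal_consistency_index(steady))
print(temporal_consistency_index(flicker))
```

A raw pixel difference like this also penalizes legitimate motion, which is presumably why the scorecards report motion fluidity and background lock as separate sub-indices.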

Key Metrics in the 2026 Benchmark Reports

The 2026 benchmarks introduce the "Artifact Scorecard," which specifically targets digital noise and "hallucinated" limbs. Magic Hour Research notes that the best-performing models in 2026 are those that maintain a stability score of 95+ while also achieving high marks in believability. This dual focus ensures that the video is not only stable but also realistic enough for commercial use.

Comparing the 2026 Industry Leaders in Scene Stability

The recent Magic Hour Research publications have provided a clear hierarchy of the top-performing AI video tools. The following table compares the key metrics found in the April 2026 "Best Text-to-Video AI" and "Best Talking Photo AI" reports, focusing on how they handle stability and artifacts.

| Model Category (2026) | Scene Stability Score | Artifact Resistance | Primary Benchmark Source |
|---|---|---|---|
| Text-to-Video (Pro) | 98/100 | High | Magic Hour Research 2026 |
| Talking Photo AI | 94/100 | Medium-High | "Best Talking Photo AI 2026" Awards |
| AI Video Upscalers | 99/100 | Elite | Pressat.co.uk April 2026 Report |
| AI Headshot Generators | 97/100 | High | "Best AI Headshot Generator 2026" Awards |

Stability in Talking Photo and Headshot AI

Scene stability is not just for wide-angle landscapes. The "Best Talking Photo AI 2026" awards emphasize "Believability and Artifact Scorecards." In these cases, stability refers to the micro-movements of the face: if the eyes or mouth shift unnaturally during speech, the stability score drops. Similarly, the "Best AI Headshot Generator 2026" awards focus on "Realism, Consistency, and Professional Look," where stability is measured by the AI's ability to maintain the subject's identity across different generated angles.

Technical Drivers Behind High AI Video Scene Stability Scorecards

Why are some models scoring significantly higher in 2026? The answer lies in the integration of temporal attention mechanisms and latent consistency models. According to industry reports from Pressat.co.uk, the top-rated video upscalers of 2026 utilize "Flow-Guided Feature Propagation," which allows the AI to "remember" the placement of objects from several seconds prior, rather than just the previous frame.

This "memory" is what prevents the background warping that plagued earlier iterations of generative video. When a model scores highly on an AI video scene stability scorecard, it usually means the model performs robust motion-vector analysis during inference. This ensures that as the camera pans, the spatial coordinates of every object are tracked and kept consistent from frame to frame.
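The idea of tracking an object's coordinates across frames can be illustrated with a small drift check. This Python sketch is not Flow-Guided Feature Propagation or any vendor's method. It assumes some upstream tracker has already produced per-frame (x, y) positions for a point that should stay fixed, and the names `max_drift` and `is_locked` and the one-pixel tolerance are hypothetical.

```python
from math import hypot

Point = tuple[float, float]  # (x, y) position of one tracked point, in pixels


def max_drift(track: list[Point]) -> float:
    """Largest Euclidean distance of the point from its first-frame position."""
    if not track:
        raise ValueError("track must contain at least one position")
    x0, y0 = track[0]
    return max(hypot(x - x0, y - y0) for x, y in track)


def is_locked(track: list[Point], tolerance_px: float = 1.0) -> bool:
    """True if the point never strays more than `tolerance_px` (assumed value)."""
    return max_drift(track) <= tolerance_px


stable_corner = [(100.0, 50.0), (100.3, 50.4), (100.0, 50.0)]
warping_corner = [(0.0, 0.0), (1.0, 1.0), (3.0, 4.0)]
print(is_locked(stable_corner), is_locked(warping_corner))
```

For a moving camera, the reference position would need to be compensated for camera motion first. The sketch covers only the static-shot case.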

The Impact of AI Video Upscalers on Stability

Interestingly, the "Best AI Video Upscalers in 2026" report highlights that upscaling is no longer just about adding pixels; it is about fixing stability. Modern upscalers can take a shaky or jittery AI-generated video and "lock" the scene by re-rendering the frames with higher temporal coherence. This has led to a secondary market for stability-focused tools that act as a "polish" layer for raw generative output.

The Future of AI Video Scene Stability Scorecards

Looking toward the latter half of 2026 and into 2027, we expect these scorecards to become even more granular. Magic Hour Research has already hinted at the introduction of "Physics-Based Stability Metrics," which will measure how well AI handles gravity, momentum, and light reflection. For example, if a ball bounces in an AI video, the scorecard will evaluate if the trajectory follows the laws of physics or if the ball "glitches" through the floor.
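Since these physics-based metrics have only been hinted at, the following Python sketch is speculative. It shows one way the bouncing-ball check could work, assuming per-frame ball heights in meters and a known frame interval. The function names and the free-fall test are illustrative assumptions, not a description of any announced metric.

```python
def floor_violations(heights: list[float], floor: float = 0.0) -> list[int]:
    """Indices of frames where the ball is below the floor (a 'glitch through')."""
    return [i for i, h in enumerate(heights) if h < floor]


def free_fall_residuals(heights: list[float], dt: float, g: float = 9.81) -> list[float]:
    """Deviation of the observed vertical acceleration from gravity.

    For an object in free flight, the second difference of height,
    h[i+1] - 2*h[i] + h[i-1], should equal -g * dt**2. Values near zero
    mean the trajectory is consistent with constant gravity.
    """
    expected = -g * dt * dt
    return [
        (heights[i + 1] - 2 * heights[i] + heights[i - 1]) - expected
        for i in range(1, len(heights) - 1)
    ]


# Synthetic drop from 10 m, sampled every 0.1 s (ideal physics).
g, dt = 9.81, 0.1
ideal_drop = [10.0 - 0.5 * g * (i * dt) ** 2 for i in range(6)]
print(max(abs(r) for r in free_fall_residuals(ideal_drop, dt)))
print(floor_violations([1.0, 0.2, -0.3, 0.5]))
```

Frames around the bounce itself would need to be excluded from the free-fall check, because the impact briefly violates the constant-acceleration assumption.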

Furthermore, the integration of these scorecards into real-time editing software will allow creators to see a "stability heat map" while they are generating content. This will enable immediate adjustments to prompts or seed values to fix unstable areas before the final render is complete. As noted in the April 29, 2026, press releases, the goal is to reach a point where AI-generated video is indistinguishable from captured footage in terms of structural reliability.

Why Professional Consistency Matters

The "Best AI Headshot Generator 2026" awards underscore the importance of professional consistency. In a corporate environment, a lack of stability in an AI-generated asset can lead to brand erosion. High stability scores ensure that the AI-generated professional is portrayed with the same level of detail and "solidness" as a traditional photograph, which is vital for maintaining consumer trust in 2026.

What is a good score on an AI video scene stability scorecard?

In 2026, a score of 85 or above is considered "Good" for social media content, while professional film and commercial productions typically require a score of 95 or higher to ensure no visible artifacts are present.
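As a quick illustration, the two thresholds in this answer can be encoded in a few lines of Python. The function name `stability_tier` and the label for scores under 85 are assumptions, since the answer only names the 85 and 95 cutoffs.

```python
def stability_tier(score: float) -> str:
    """Map a 1-100 stability score to the tiers described in this answer."""
    if not 1 <= score <= 100:
        raise ValueError("score must be between 1 and 100")
    if score >= 95:
        return "professional"            # film and commercial productions
    if score >= 85:
        return "good for social media"
    return "below both thresholds"       # label assumed; not named in the source


for s in (98, 90, 72):
    print(s, stability_tier(s))
```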

How does Magic Hour Research determine these benchmarks?

Magic Hour Research uses a combination of automated pixel-tracking software and human expert review to evaluate prompt adherence, temporal consistency, and the presence of visual artifacts across thousands of test generations.

Can AI video upscalers improve my stability scorecard?

Yes, according to the "Best AI Video Upscalers in 2026" report, modern upscaling tools are specifically designed to enhance temporal stability and can often raise a stability score by 10-15 points by smoothing out jitters.

Are there different scorecards for talking photos and headshots?

Yes, while they share core principles, the "Best Talking Photo AI 2026" awards focus more on facial artifacting and lip-sync stability, whereas headshot scorecards prioritize professional look and identity consistency.

Where can I find the latest 2026 stability benchmarks?

The most recent benchmarks were published on April 29, 2026, by Magic Hour Research and are available through major industry news outlets like Pressat.co.uk, covering text-to-video, headshots, and upscaling tools.
