AI Video Prompt Adherence Guide: 2026 Mastering Techniques

An AI video prompt adherence guide is a framework that digital creators use to make generative AI models follow textual instructions about cinematography, character consistency, and physics. To master prompt adherence in 2026, creators must balance descriptive language with technical parameters such as seed control and motion buckets. This guide lays out a methodology for reducing hallucinations and bringing the output closer to your creative vision on the latest 2026 model architectures.

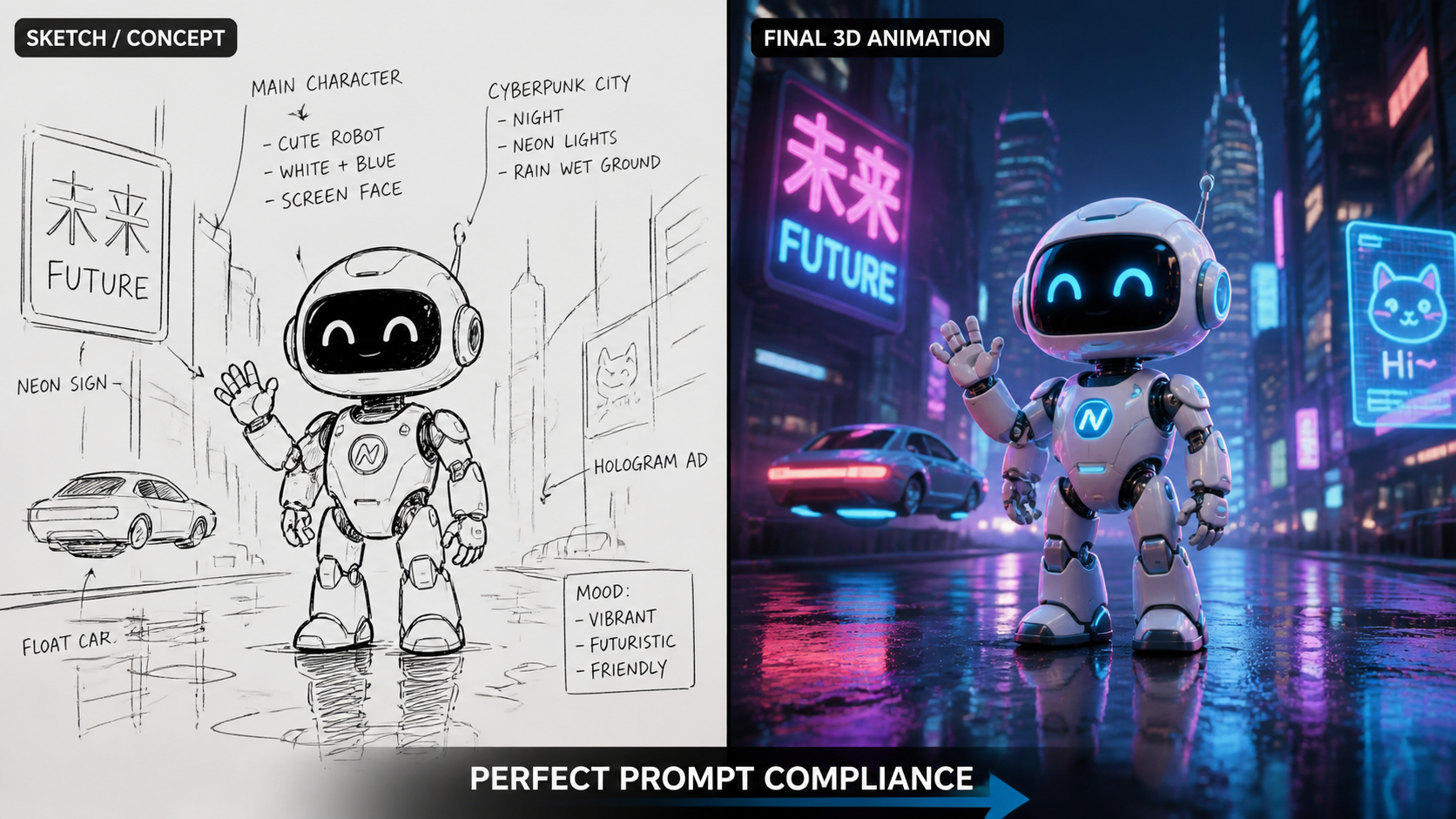

AI video prompt adherence is the measure of how accurately a generative video model translates specific text instructions into visual output. In 2026, achieving high adherence requires using "Spatial-Temporal Prompting," where creators define the environment, movement, and lighting in structured sequences to minimize the AI's creative deviations from the intended script.

- ✓ Utilize "weighted sequencing" to prioritize character details over background elements.

- ✓ Leverage Seedance 2.0’s new developer hooks for frame-by-frame consistency.

- ✓ Implement compliance-safe prompting to avoid redlining in professional LinkedIn workflows.

- ✓ Apply the "Sora 2026 Physics Check" to ensure realistic text-to-video gravity and fluid dynamics.

The Fundamentals of an AI Video Prompt Adherence Guide

In 2026, prompt adherence has moved beyond simple keyword matching. It now encompasses the model's ability to understand complex causal relationships within a scene. Whether you are using OpenAI’s Sora or ByteDance’s Seedance 2.0, the primary challenge remains the same: keeping the model from filling gaps in the prompt with unwanted artifacts. An effective approach eliminates ambiguity by giving the model a clear hierarchical structure of information.

According to OpenAI's 2026 documentation for Sora, the model now processes "temporal tokens" more efficiently, allowing for longer video durations without losing the original prompt's context. Even so, a prompt written for a 60-second clip should reinforce its key details through specific "anchor terms" to maintain adherence over the full duration. Creators who fail to use these anchors often see "style drift," where the lighting or character appearance shifts mid-render. Mastering adherence is about building a linguistic cage that keeps the AI’s creativity focused exactly where you want it.
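One way to make the anchor-term idea concrete is to check your own prompt text before submitting it. The sketch below is a plain-text lint, not part of any model's API: the function names, the sentence splitter, and the default window of three sentences are all assumptions chosen for illustration.

```python
import re


def sentences(prompt: str) -> list[str]:
    """Split a prompt into sentences on '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", prompt.strip())
    return [p for p in parts if p]


def anchor_gaps(prompt: str, anchors: list[str], window: int = 3) -> dict[str, list[int]]:
    """For each anchor term, list the starting sentence index of every
    window-sized chunk in which the term never appears.

    `window` is a hypothetical tuning knob, not a documented model setting.
    """
    sents = [s.lower() for s in sentences(prompt)]
    gaps: dict[str, list[int]] = {}
    for anchor in anchors:
        needle = anchor.lower()
        missing = [
            start
            for start in range(0, len(sents), window)
            if not any(needle in s for s in sents[start:start + window])
        ]
        if missing:
            gaps[anchor] = missing
    return gaps
```

An empty result means every anchor shows up at least once per chunk; a non-empty result points at the stretch of the prompt where a trait is never restated.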

Step-by-Step Methodology for Maximum Adherence

- Define the Core Entity: Start with a precise description of the subject (e.g., "A 35-year-old female architect with a sharp bob cut").

- Set the Environmental Parameters: Describe the 3D space, including depth of field and specific lighting sources (e.g., "Golden hour sunlight streaming through floor-to-ceiling glass windows").

- Specify the Action Vector: Use active verbs to define movement (e.g., "Walking slowly toward the camera while holding a holographic blueprint").

- Apply Technical Constraints: Include camera settings and frame rates (e.g., "Shot on 35mm lens, f/1.8, 24fps cinematic aesthetic").

- Review and Iterate via Seed Control: If the output deviates, lock the seed number and adjust only the specific words causing the adherence failure.
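The five steps above can be sketched as a small data structure plus a diff helper. This is a minimal illustration under stated assumptions: `ShotSpec`, `build_prompt`, and `changed_words` are hypothetical names, and the seed is simply carried alongside the prompt for whichever generator you use, since no specific model API is assumed here.

```python
from dataclasses import dataclass, replace
import difflib


@dataclass(frozen=True)
class ShotSpec:
    """One field per step: entity, environment, action, technical constraints, seed."""
    entity: str
    environment: str
    action: str
    technical: str
    seed: int


def build_prompt(spec: ShotSpec) -> str:
    """Join the four descriptive layers in a fixed order, one sentence each."""
    parts = [spec.entity, spec.environment, spec.action, spec.technical]
    return ". ".join(p.strip().rstrip(".") for p in parts) + "."


def changed_words(old: str, new: str) -> list[str]:
    """Word-level additions ('+ ') and removals ('- ') between two prompt versions."""
    diff = difflib.ndiff(old.split(), new.split())
    return [d for d in diff if d.startswith(("+ ", "- "))]


base = ShotSpec(
    entity="A 35-year-old female architect with a sharp bob cut",
    environment="Golden hour sunlight streaming through floor-to-ceiling glass windows",
    action="Walking slowly toward the camera while holding a holographic blueprint",
    technical="Shot on 35mm lens, f/1.8, 24fps cinematic aesthetic",
    seed=1234,  # arbitrary example value; keep it fixed while iterating
)

# Step 5: keep the seed, change only the wording suspected of causing the failure.
revised = replace(
    base,
    action="Walking briskly toward the camera while holding a holographic blueprint",
)
```

Calling `changed_words(build_prompt(base), build_prompt(revised))` then lists only the edited words, which makes it easier to attribute a change in the output to a specific change in the prompt.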

Comparison of 2026 Leading Video Generation Models

Choosing the right tool is the first step toward better prompt adherence. Different models have different "semantic sensitivities." For instance, Seedance 2.0, released in March 2026, excels at developer-level control and technical comparisons, whereas Sora remains the gold standard for cinematic realism and fluid physics. Understanding these nuances allows creators to tailor their prompts to the specific strengths of the underlying neural network.

As noted by SitePoint in their March 2026 Developer Guide, Seedance 2.0 introduced a "Comparison Layer" that allows creators to see in real time how slight variations in prompt adjectives affect the final render. This level of granularity is essential for high-stakes projects where brand guidelines are non-negotiable. Meanwhile, tools highlighted by Simplilearn emphasize the pricing-to-performance ratio, noting that adherence often improves with higher-tier compute allocations, which allow for more "diffusion steps" during the generation process.

| AI Model (2026) | Core Strength | Adherence Level | Primary Use Case |
|---|---|---|---|
| OpenAI Sora | Physics & Realism | Very High | Cinematic Storytelling |
| Seedance 2.0 | Developer Control | Extreme | Technical & Instructional Video |
| LinkedIn Compliance AI | Safety & Brand Logic | High | Professional B2B Content |
| Vocal.media Top 3 Picks | Creative Versatility | Moderate/High | Social Media & Vlogging |

Advanced Techniques for 2026 Prompt Engineering

To master prompt adherence, you need to understand two concepts: "Prompt Weighting" and "Negative Constraints." In 2026, some advanced models allow you to assign numerical values to specific words. For example, if the model keeps ignoring a specific color, you might write "(crimson red:1.5)" to push the transformer's attention mechanism to prioritize that token. This keeps the AI from defaulting to the more common, generic colors found in its training data.
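The `(term:weight)` notation in the paragraph above is easy to generate and audit with a few lines of code. The helper names below are hypothetical, and whether a given video model honors this syntax is an assumption to check against that model's own documentation; the sketch only handles the text side.

```python
import re


def weighted(term: str, weight: float) -> str:
    """Wrap a term in (term:weight) notation, e.g. for emphasis above 1.0."""
    if weight <= 0:
        raise ValueError("weight must be positive")
    return f"({term}:{weight:g})"


# Matches "(some words:1.5)"; nested parentheses are deliberately not supported.
_WEIGHTED = re.compile(r"\(([^():]+):([0-9]*\.?[0-9]+)\)")


def parse_weights(prompt: str) -> dict[str, float]:
    """Extract every weighted term from a prompt so the weights can be reviewed."""
    return {m.group(1).strip(): float(m.group(2)) for m in _WEIGHTED.finditer(prompt)}
```

For instance, `weighted("crimson red", 1.5)` returns `(crimson red:1.5)`, and running `parse_weights` over a finished prompt gives a quick overview of which terms are being emphasized and by how much.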

Furthermore, the use of "Negative Prompts" has become more sophisticated. Instead of just saying "no blur," creators now use "exclusionary zones" to tell the AI which parts of the frame should remain static. According to Nerdbot’s 2026 Practical Comparison for Creators, the most successful AI videographers are those who treat their prompts like a director’s script combined with a coder’s logic. This hybrid approach is meant to keep the "hallucination rate" (the frequency at which the AI adds unrequested elements) below 5%.
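To track a hallucination rate on your own renders, you need a working definition. The sketch below assumes one possible reading: the share of clips that contain at least one element you did not ask for, based on labels you assign by hand while reviewing each clip. The function name and the per-clip definition are assumptions, not a published metric.

```python
def hallucination_rate(requested: set[str], observed_per_clip: list[set[str]]) -> float:
    """Fraction of clips containing at least one element that was not requested.

    `observed_per_clip` holds one set of manually assigned labels per reviewed clip.
    """
    if not observed_per_clip:
        raise ValueError("need at least one annotated clip")
    flagged = sum(1 for observed in observed_per_clip if observed - requested)
    return flagged / len(observed_per_clip)
```

With four reviewed clips of which one shows an unrequested element, the function returns 0.25, so a 5% target would mean at most one flagged clip in twenty.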

The Role of Compliance in Professional Prompting

A significant development in 2026 is the integration of compliance-focused prompting. As Investopedia reported in February 2026, financial institutions and legal firms are now using specific AI prompts to create LinkedIn posts that their compliance teams won't redline. This calls for a specialized approach to prompt adherence that focuses on "safe-space parameters." By including specific "legal-guardrail" tokens in the prompt, creators steer the AI away from generating imagery or text that could be interpreted as financial advice or a regulatory violation.

Optimizing Spatial-Temporal Consistency

One of the hardest parts of video generation is maintaining consistency over time. If a character is wearing a blue hat in the first second, they must still have that hat in the tenth second. In 2026, the industry has moved toward "Reference-Based Adherence." This involves feeding the AI a static image of the character or setting alongside the text prompt. The model then uses the image as a "spatial anchor" while the text prompt dictates the "temporal action."

Research from Simplilearn suggests that using reference images increases prompt adherence by up to 40% compared to text-only prompts. This is particularly useful for commercial work where product accuracy is paramount. As a rule of thumb, use the "Image+Text" workflow for any project requiring more than five seconds of footage. This hybrid method leverages the model's visual recognition capabilities to reinforce its linguistic understanding, resulting in a much more stable final video.
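The five-second rule of thumb can be enforced as a pre-flight check before a job is submitted. Everything in this sketch is hypothetical: `GenerationJob` is not any vendor's request format, and the threshold is just the figure quoted above turned into a default argument.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class GenerationJob:
    """A generic description of a render request; not tied to any real API."""
    prompt: str
    duration_s: float
    reference_image: Optional[str] = None  # path or URL of the spatial anchor


def validate(job: GenerationJob, threshold_s: float = 5.0) -> list[str]:
    """Return a list of problems; an empty list means the job passes the check."""
    problems = []
    if job.duration_s > threshold_s and job.reference_image is None:
        problems.append(
            f"clip is {job.duration_s:g}s (> {threshold_s:g}s) but has no reference image"
        )
    return problems
```

Returning a list rather than raising keeps the check composable: other rules can append their own messages before anything is sent to a generator.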

Mastering Motion Buckets and Camera Control

Modern 2026 models like Seedance 2.0 use "Motion Buckets," a setting that scales the intensity of movement from 1 to 10. If your prompt describes a "gentle breeze" but your motion bucket is set to 10, the AI will likely ignore the "gentle" part of your prompt and create a hurricane. Adherence is not just about the words; it is about aligning the technical metadata with the descriptive language. Keeping these two layers synchronized prevents visual contradictions.
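A mismatch between wording and motion setting can be caught with a simple keyword check before rendering. The word lists and the cut-off values below are assumptions picked for illustration, and the 1 to 10 range follows the description above rather than any verified model specification.

```python
import re

# Hypothetical vocabularies; extend them to match your own prompting style.
CALM_WORDS = {"gentle", "gently", "slow", "slowly", "subtle", "still", "calm"}
INTENSE_WORDS = {"violent", "rapid", "rapidly", "fast", "explosive", "frantic"}


def motion_conflicts(
    prompt: str, motion_bucket: int, calm_max: int = 4, intense_min: int = 7
) -> list[str]:
    """Flag prompts whose motion vocabulary contradicts the motion bucket value."""
    if not 1 <= motion_bucket <= 10:
        raise ValueError("motion_bucket must be between 1 and 10")
    words = set(re.findall(r"[a-z]+", prompt.lower()))
    conflicts = []
    calm_hits = sorted(words & CALM_WORDS)
    intense_hits = sorted(words & INTENSE_WORDS)
    if calm_hits and motion_bucket > calm_max:
        conflicts.append(f"calm wording {calm_hits} with motion bucket {motion_bucket}")
    if intense_hits and motion_bucket < intense_min:
        conflicts.append(f"intense wording {intense_hits} with motion bucket {motion_bucket}")
    return conflicts
```

Running it on "A gentle breeze moves the curtains" with a bucket of 10 reports one conflict, while the same prompt with a bucket of 2 passes cleanly.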

Future-Proofing Your AI Video Workflow

As we look toward the latter half of 2026 and into 2027, the concept of "Interactive Prompting" is beginning to take hold. This allows creators to pause a generation mid-way and provide "correction prompts" to steer the adherence back on track. This real-time feedback loop is revolutionary, as it moves away from the "lottery style" of generation where you hope the result is good, toward a "sculpting style" where you mold the video as it emerges from the latent space.

According to the 2026 Field Guide published by Vocal.media, the top three AI video generators are now prioritizing "User Intent Analysis." This means the AI is getting better at guessing what you mean even if your prompt is slightly flawed. However, relying on the AI's "best guess" is a recipe for mediocrity. To stand out as a professional creator, you must hold your prompts to rigorous adherence standards, ensuring that every frame is a deliberate choice rather than a statistical accident.

What is the best way to improve AI video prompt adherence?

The most effective way to improve adherence is to use a hierarchical prompting structure, starting with the broad scene and narrowing down to specific technical details and motion scales. Additionally, using reference images (Image-to-Video) provides a visual anchor that significantly reduces model hallucinations.

How does Seedance 2.0 differ from Sora in terms of adherence?

Seedance 2.0 offers more granular developer hooks and comparison tools, making it ideal for technical accuracy and iterative testing. Sora, while highly adherent to physical laws and cinematic beauty, is often viewed as a more "creative" partner that excels in fluid, realistic storytelling.

Can I use AI video prompts for professional LinkedIn content?

Yes, as of 2026, there are specific prompt structures designed to pass corporate compliance. By using "guardrail tokens" and following professional prompt adherence practices, you can create engaging video content that remains within legal and brand guidelines.

What are "Motion Buckets" in 2026 AI video tools?

Motion Buckets are numerical settings that determine the intensity of movement within a generated video. Proper adherence requires matching your descriptive language (e.g., "slowly") with a low motion bucket value to ensure the AI doesn't over-animate the scene.

Why does my AI video lose consistency after a few seconds?

This is usually due to "style drift" or a lack of "temporal anchors" in the prompt. To fix this, use models that support longer context windows like Sora 2026, and reinforce key character traits every few sentences within your prompt to keep the AI focused.
