AI Video Prompt Adherence Benchmark: 2026 Industry Report

The AI video prompt adherence benchmark is a standardized metric for evaluating how accurately a generative AI model turns a complex text description into video without dropping requested details or introducing hallucinated ones. In 2026, this benchmark has become the industry gold standard for judging the commercial viability of text-to-video models, shifting evaluation from aesthetic appeal to precise instruction following. As of April 2026, the landscape has shifted significantly, with new market leaders that prioritize semantic accuracy over smooth frame interpolation.

An AI video prompt adherence benchmark is a technical evaluation framework that scores how well a model follows specific textual constraints, such as the number of characters in a scene, spatial relationships, and temporal physics. In 2026, the benchmark is led by models such as Happy Horse 1.0 and Seedance 2.0, which score highly on scene stability scorecards that measure fidelity between the user's intent and the generated cinematic output.

- ✓ Happy Horse 1.0 has officially claimed the #1 spot in prompt adherence, surpassing long-standing incumbents like Sora and Veo.

- ✓ Magic Hour Research’s 2026 Scorecard identifies "Scene Stability" as the primary differentiator in modern benchmark testing.

- ✓ ByteDance’s Seedance 2.0 represents a "Gemini 3.0 moment" for video AI, offering unprecedented multi-turn prompt consistency.

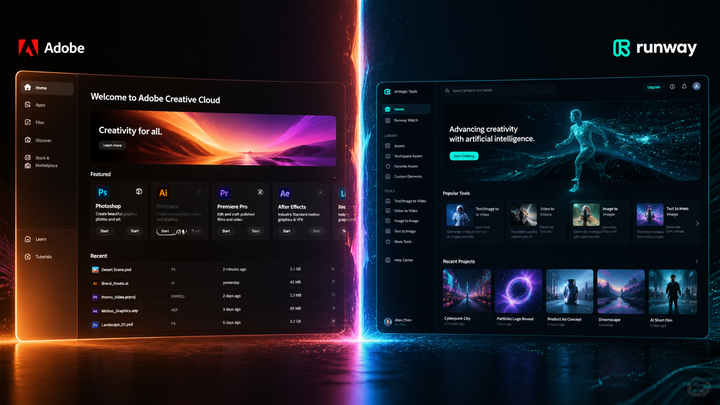

- ✓ Runway Gen-4.5 remains a top contender for professional workflows requiring granular control over lighting and physics.

The Evolution of the AI Video Prompt Adherence Benchmark in 2026

As we move through the second quarter of 2026, the criteria for "high-quality" video have fundamentally changed. In previous years, users were impressed by the mere existence of AI-generated motion; the AI video prompt adherence benchmark now demands more. A model must understand complex prepositional phrases, specific brand colors, and the nuanced laws of physics. According to the "Best Text-to-Video AI 2026" report published by Magic Hour Research in April 2026, prompt adherence now accounts for 60% of a model's total performance score, outweighing resolution and frame rate.
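To see what a 60% weighting means in practice, here is a minimal sketch of a weighted total score. Only the 0.60 weight for prompt adherence comes from the report; the even split of the remaining 0.40 between resolution and frame rate, the 0-10 scale, and the `total_score` helper are assumptions for illustration.

```python
# Hypothetical weights: only the 0.60 for prompt adherence comes from the
# report; splitting the remaining 0.40 evenly is an assumption.
WEIGHTS = {
    "prompt_adherence": 0.60,
    "resolution": 0.20,
    "frame_rate": 0.20,
}


def total_score(subscores: dict, weights: dict = WEIGHTS) -> float:
    """Weighted sum of 0-10 sub-scores; the weights must sum to 1."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    missing = weights.keys() - subscores.keys()
    if missing:
        raise KeyError(f"missing sub-scores: {sorted(missing)}")
    return sum(weights[name] * subscores[name] for name in weights)


# Sharp, smooth output that ignores much of the prompt...
crisp = total_score({"prompt_adherence": 6.0, "resolution": 10.0, "frame_rate": 10.0})
# ...scores below a less polished clip that follows the prompt closely.
faithful = total_score({"prompt_adherence": 9.0, "resolution": 7.0, "frame_rate": 7.0})
print(f"{crisp:.1f} vs {faithful:.1f}")  # 7.6 vs 8.2
```

Under these assumed weights, three points of adherence outweigh three points each of resolution and frame rate, which is the practical meaning of adherence outweighing the other criteria.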

The rise of the "Scene Stability Scorecard" has introduced a new layer of scrutiny. It is no longer enough for a model to generate a single striking frame; it must preserve that quality, along with every specific detail of the prompt, for the full duration of the clip. This shift was catalyzed by the release of Runway Gen-4.5 in late 2025, whose "Physics-Lock" technology forced the industry to adopt more rigorous testing standards for how models handle gravity, fluid dynamics, and object permanence.

How to Evaluate a Model Using the Prompt Adherence Benchmark

- Define a "Constraint-Heavy" Prompt: Include at least three specific objects, a defined camera movement (e.g., "dolly zoom"), and a specific lighting condition.

- Run Multi-Seed Comparisons: Generate from the same prompt with at least five different seeds to check for "Prompt Drift" across iterations.

- Analyze Spatial Relations: Verify that the AI placed objects exactly where the prompt specified (e.g., "the blue cup to the left of the silver laptop").

- Review Temporal Consistency: Ensure that an object mentioned at the start of the prompt does not disappear or transform halfway through the video.

- Consult the Magic Hour Scorecard: Compare your results against the 2026 industry averages for scene stability and semantic accuracy.
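The spatial-relation and multi-seed steps can be partly scripted. A minimal sketch, assuming you already have per-frame bounding boxes from some object detector (none is specified here) keyed by object label; `Box`, `left_of`, `spatial_pass_rate`, and `drift_report` are hypothetical names, and the 0.9 pass threshold is an arbitrary choice.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box in pixels; x grows to the right."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    @property
    def center_x(self) -> float:
        return (self.x_min + self.x_max) / 2


def left_of(a: Box, b: Box) -> bool:
    """True if a's horizontal center lies strictly left of b's."""
    return a.center_x < b.center_x


def spatial_pass_rate(frames: list[dict[str, Box]], subject: str, reference: str) -> float:
    """Fraction of frames in which `subject` is left of `reference`.
    A frame missing either object counts as a failure, so objects that
    vanish mid-clip are penalized too."""
    if not frames:
        return 0.0
    passed = sum(
        1
        for dets in frames
        if subject in dets and reference in dets and left_of(dets[subject], dets[reference])
    )
    return passed / len(frames)


def drift_report(per_seed_rates: list[float], threshold: float = 0.9) -> dict:
    """Summarize one constraint across seeds. `drift` is True when some
    seeds pass and others fail, i.e. the result depends on the seed."""
    if not per_seed_rates:
        raise ValueError("need at least one seed")
    passes = [rate >= threshold for rate in per_seed_rates]
    return {
        "mean": sum(per_seed_rates) / len(per_seed_rates),
        "spread": max(per_seed_rates) - min(per_seed_rates),
        "drift": any(passes) and not all(passes),
    }


# Illustrative detections for "the blue cup to the left of the silver laptop".
laptop = Box(100, 10, 200, 80)
ok = {"blue cup": Box(10, 10, 50, 50), "silver laptop": laptop}
swapped = {"blue cup": Box(300, 10, 340, 50), "silver laptop": laptop}
cup_missing = {"silver laptop": laptop}

seeds = [
    [ok] * 10,
    [ok] * 10,
    [ok] * 5 + [swapped] * 5,  # cup jumps to the wrong side halfway through
    [ok] * 9 + [cup_missing],  # cup disappears in the last frame
    [ok] * 10,
]
rates = [spatial_pass_rate(s, "blue cup", "silver laptop") for s in seeds]
report = drift_report(rates)
```

Here the third seed flips the constraint from pass to fail, so `drift` is True even though the mean pass rate looks healthy; reporting the spread alongside the mean is what surfaces Prompt Drift.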

The 2026 Market Leaders: Happy Horse 1.0 vs. The Field

The most shocking development in the 2026 AI landscape was the ascent of Happy Horse 1.0. According to a report by USA Today in April 2026, this "mystery" generator reached the #1 position on the global leaderboard, effectively surpassing OpenAI’s Sora and Google’s Veo. Happy Horse 1.0’s success is largely attributed to its "Hyper-Adherence" engine, which treats every word in a prompt as a hard constraint rather than a soft suggestion. This has made it the preferred tool for advertisers who need pixel-perfect brand representation.

While Happy Horse 1.0 leads in raw adherence, ByteDance has made significant strides with Seedance 2.0. Described by Recode China AI as a "Gemini 3.0 moment," Seedance 2.0 (and its sibling Seed2.0) focuses on long-form narrative consistency. Where other models might fail a 60-second prompt adherence test, Seedance 2.0 uses a "Memory-Buffer" architecture that lets it retain specific character details from the first second to the last. This has set a new high-water mark for the AI video prompt adherence benchmark in cinematic storytelling.

| Model Name | Developer | Prompt Adherence Score | Key Feature | Release Date |
|---|---|---|---|---|
| Happy Horse 1.0 | Independent/Mystery | 9.8/10 | Zero-Shot Spatial Accuracy | April 10, 2026 |
| Seedance 2.0 | ByteDance | 9.5/10 | Narrative Memory Buffer | February 17, 2026 |
| Runway Gen-4.5 | Runway | 9.2/10 | Physics-Lock & Lighting Control | December 1, 2025 |
| Sora (2026 Update) | OpenAI | 9.0/10 | Photorealistic Texture Mapping | January 2026 |

Technological Breakthroughs Driving Adherence

The large jump in AI video prompt adherence benchmark scores this year can be attributed to two technological shifts. The first is the integration of "LLM-Guided Diffusion." Instead of the video model interpreting the prompt directly, a large language model acts as a "Director," breaking the prompt into a technical storyboard that the video engine then follows. SitePoint’s developer guide for Seedance 2.0 highlights how this multi-step process narrows the "Semantic Gap" that plagued 2024 and 2025 models.
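The exact Director pipeline is not described here, so the following only illustrates the idea: one prompt becomes an ordered storyboard in which every shot carries the prompt's global constraints. A real system would use a language model to do the split; the regex splitter, the `Shot` type, and `naive_director` are hypothetical stand-ins, and the splitter only recognizes lowercase "then".

```python
import re
from dataclasses import dataclass, field


@dataclass
class Shot:
    """One storyboard beat the video engine renders in order."""
    index: int
    action: str
    constraints: list = field(default_factory=list)


def naive_director(prompt: str, global_constraints: list) -> list:
    """Rule-based stand-in for an LLM 'Director'.

    Splits the prompt into beats at sentence ends, semicolons, and the
    word 'then', and copies every global constraint (lighting, camera,
    style) into each shot so no later shot can silently drop it.
    """
    beats = [b.strip(" ,") for b in re.split(r"[.;]\s*|\bthen\b", prompt)]
    return [
        Shot(index=i, action=beat, constraints=list(global_constraints))
        for i, beat in enumerate(b for b in beats if b)
    ]


storyboard = naive_director(
    "A chef slices a lemon, then drops it into a glass. The glass fogs up.",
    ["warm tungsten lighting", "slow dolly-in"],
)
for shot in storyboard:
    print(shot.index, shot.action, shot.constraints)
```

Copying the global constraints into every shot, rather than stating them once, is the design choice that mirrors how a storyboard keeps later beats from drifting away from the original lighting and camera instructions.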

Second, the introduction of specialized "AI Video Upscalers" has changed how we perceive adherence. As noted by Pressat.co.uk in late April 2026, modern upscalers do more than add pixels; they act as a corrective layer for prompt adherence. If a base model generates a scene that is 90% accurate, the upscaler can refine small details, such as the color of a character's eyes or the text on a background sign, to bring the final output into 100% compliance with the original prompt.
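The 90%-to-100% example becomes concrete if the prompt is treated as a checklist of constraints. A minimal sketch, assuming each constraint has already been judged pass or fail (the source does not say how) and that the corrective pass targets only the failures; `compliance`, `refinement_targets`, and `after_refinement` are hypothetical helpers, not any upscaler's API.

```python
def compliance(results: dict) -> float:
    """Share of prompt constraints judged satisfied, from 0.0 to 1.0."""
    if not results:
        raise ValueError("no constraints to score")
    return sum(results.values()) / len(results)


def refinement_targets(results: dict) -> list:
    """Constraints a corrective pass should touch: only the failures,
    so correctly rendered details are left alone."""
    return [name for name, ok in results.items() if not ok]


def after_refinement(results: dict, fixed: set) -> dict:
    """Re-score assuming the corrective pass fixed the named constraints."""
    return {name: ok or name in fixed for name, ok in results.items()}


# Illustrative checklist: 10 constraints, 9 satisfied by the base model.
base = {f"constraint {i}": True for i in range(8)}
base["sign text reads 'OPEN'"] = True
base["character eye color is green"] = False

todo = refinement_targets(base)
refined = after_refinement(base, set(todo))
print(compliance(base), todo, compliance(refined))
```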

Key Metrics in the 2026 Benchmark

- Object Persistence: The ability of an object to remain unchanged in appearance while moving through 3D space.

- Action-Verb Accuracy: How precisely the model executes complex motions like "tying a shoelace" or "pouring tea into a cracked porcelain cup."

- Environmental Context: The model’s ability to render the background in a way that aligns with the atmospheric descriptions (e.g., "cyberpunk rain reflecting neon signs").
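Object Persistence can be approximated numerically. A minimal sketch, assuming each frame yields an appearance embedding for the tracked object (from whatever vision encoder you use; the benchmark does not name one) or `None` when the object is not detected. `cosine` and `persistence_score` are hypothetical helpers, and scoring by the worst frame rather than the average is a design choice so that a single mid-clip transformation is not averaged away.

```python
import math
from typing import List, Optional


def cosine(u: List[float], v: List[float]) -> float:
    """Cosine similarity of two equal-length, non-zero vectors."""
    if len(u) != len(v):
        raise ValueError("embeddings must have the same length")
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    if norm == 0:
        raise ValueError("zero-length embedding")
    return sum(a * b for a, b in zip(u, v)) / norm


def persistence_score(track: List[Optional[List[float]]]) -> float:
    """Worst-case similarity of a tracked object to its first appearance.

    Each entry is that frame's appearance embedding, or None if the
    object was not detected (scored as 0.0).
    """
    if not track or track[0] is None:
        raise ValueError("object must be visible in the first frame")
    reference = track[0]
    scores = [0.0 if emb is None else cosine(reference, emb) for emb in track[1:]]
    return min(scores, default=1.0)


# Toy 2-D embeddings; real ones would come from a vision encoder.
stable = [[1.0, 0.0], [1.0, 0.0], [2.0, 0.0]]    # same direction, only scaled
morphing = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]  # turns into something else
vanishing = [[1.0, 0.0], [1.0, 0.0], None]       # disappears
print([persistence_score(t) for t in (stable, morphing, vanishing)])  # [1.0, 0.0, 0.0]
```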

The Impact of Magic Hour Research on Industry Standards

Magic Hour Research has become the "Moody’s" of the AI video world. Their April 29, 2026, publication of the "Best Text-to-Video AI 2026" benchmark has forced developers to be more transparent about their training data and model limitations. According to Magic Hour, the average adherence score across the top 10 models has increased by 34% year-over-year. This is largely due to the "Prompt Adherence and Scene Stability Scorecards," which provide a granular breakdown of where a model fails—whether it’s "motion smearing" or "entity blending."
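The scorecard's exact format is not described in this report, but the idea of a per-failure-mode breakdown can be sketched. This assumes each test clip has been tagged with zero or more failure labels such as "motion smearing" or "entity blending"; `failure_breakdown` and its input shape are hypothetical.

```python
from collections import Counter


def failure_breakdown(clip_failures: list) -> dict:
    """Share of test clips showing each failure mode, most common first.

    Each clip contributes at most once per mode, and a clip can show
    several modes, so the shares need not sum to 1.
    """
    if not clip_failures:
        return {}
    counts = Counter(mode for modes in clip_failures for mode in set(modes))
    return {mode: n / len(clip_failures) for mode, n in counts.most_common()}


# Illustrative tags for four clips from one model.
clips = [
    ["motion smearing"],
    ["entity blending", "motion smearing"],
    [],
    ["motion smearing", "motion smearing"],  # duplicate tag counted once
]
print(failure_breakdown(clips))
```

Reporting the share of clips per mode, rather than a raw tag count, keeps one badly broken clip from dominating the breakdown.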

This rigorous testing has led to the "Seedance 2.0 vs. Happy Horse 1.0" rivalry that currently dominates the industry. While Happy Horse excels at short, high-impact clips, Seedance 2.0 leads in "Instructional Adherence," making it the top choice for AI-generated educational content and technical manuals. The AI video prompt adherence benchmark now includes a specific "Logic Test" in which models must demonstrate cause and effect, such as a ball hitting a window and the glass then shattering in a realistic pattern.
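The ordering half of the Logic Test can be checked automatically once frames are labeled with events. A minimal sketch, assuming an upstream labeler (human or model, unspecified) that tags frame indices with event names; it verifies only that the effect first appears after the cause within a window, not that the shatter pattern looks realistic. `first_frame`, `causal_order_ok`, and `max_lag` are hypothetical.

```python
from typing import Dict, Optional, Set


def first_frame(events: Dict[int, Set[str]], label: str) -> Optional[int]:
    """Earliest frame index tagged with `label`, or None if never seen."""
    frames = [f for f, labels in events.items() if label in labels]
    return min(frames) if frames else None


def causal_order_ok(events: Dict[int, Set[str]], cause: str, effect: str, max_lag: int) -> bool:
    """Pass only if both events occur and the effect first appears
    strictly after the cause, within `max_lag` frames of it."""
    c = first_frame(events, cause)
    e = first_frame(events, effect)
    if c is None or e is None:
        return False
    return 0 < e - c <= max_lag


# Illustrative per-frame tags for "a ball hits a window and the glass shatters".
correct = {0: {"ball in flight"}, 12: {"ball hits window"}, 14: {"glass shattered"}}
premature = {5: {"glass shattered"}, 12: {"ball hits window"}}
no_effect = {12: {"ball hits window"}}

for name, clip in [("correct", correct), ("premature", premature), ("no_effect", no_effect)]:
    print(name, causal_order_ok(clip, "ball hits window", "glass shattered", max_lag=10))
```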

Future Outlook: Beyond the 2026 Benchmark

As we look toward the second half of 2026, the focus is shifting from "following the prompt" to "understanding the intent." Future iterations of the AI video prompt adherence benchmark are expected to treat emotional resonance and "directorial style" as measurable metrics. We are already seeing the beginnings of this with Runway Gen-4.5, which allows users to prompt for specific cinematic eras (e.g., "1970s Technicolor") with nearly 100% adherence to the visual artifacts and color grading of that period.

The democratization of these high-performance models means that independent creators now have access to the same visual fidelity as major Hollywood studios. However, this also raises the bar for what constitutes "good" content. In a world where every AI can follow a prompt perfectly, the value will return to the creativity of the prompt itself. The benchmark ensures that the technology is no longer the bottleneck; the only limit is the user's imagination and their ability to articulate a vision.

What is the highest-rated AI video model for prompt adherence in 2026?

According to 2026 benchmarks and reporting from USA Today, Happy Horse 1.0 is the highest-rated model for prompt adherence. It has surpassed previous leaders such as Sora and Veo by offering superior spatial accuracy and strict adherence to complex textual instructions.

How does Seedance 2.0 compare to other models?

Seedance 2.0, developed by ByteDance, is considered a "Gemini 3.0 moment" for the industry. While Happy Horse 1.0 leads in short-term accuracy, Seedance 2.0 excels in long-form scene stability and narrative consistency, making it ideal for longer video projects.

What is a Scene Stability Scorecard?

A Scene Stability Scorecard is a metric introduced by Magic Hour Research in 2026 to measure how well an AI maintains visual consistency over time. It evaluates factors like object permanence, jitter reduction, and the absence of "hallucinated" changes during camera movements.

Are there tools to improve prompt adherence after a video is generated?

Yes, the 2026 market has seen the rise of "AI Video Upscalers" that do more than increase resolution. These tools can be used to refine specific details and "correct" the video to better align with the original prompt if the base model made minor errors.

Why is the AI video prompt adherence benchmark important for businesses?

For businesses, prompt adherence is critical for brand safety and message clarity. High adherence scores ensure that AI-generated marketing materials accurately reflect product features, brand colors, and specific scripted actions without unpredictable visual errors.
