AI Video Generator Prompt Adherence: 2026 Strategy Guide

AI video generator prompt adherence refers to the capability of a generative artificial intelligence model to accurately interpret and visualize every specific detail provided in a text description, ranging from lighting and camera angles to complex character actions. In 2026, achieving high-fidelity output requires a strategic understanding of how modern models like Happy Horse 1.0 and Sora process semantic tokens. This guide explores how to master ai video generator prompt adherence to ensure your creative vision translates perfectly to the screen.

AI video generator prompt adherence is the metric used to evaluate how closely an AI-generated video matches the specific instructions, constraints, and stylistic choices outlined in a user's text prompt. As of 2026, top-tier models utilize advanced transformer architectures to ensure that complex multi-subject interactions and temporal consistency remain aligned with the original input throughout the video duration.

- ✓ Prompt adherence is now the primary benchmark for ranking the best text-to-video AI tools in 2026.

- ✓ New market leaders like Happy Horse 1.0 are outperforming established giants in semantic precision.

- ✓ Strategic prompt engineering, including "spatial anchoring," is essential for maintaining scene stability.

- ✓ Benchmarks from Magic Hour Research provide the industry standard for evaluating model accuracy.

The Evolution of AI Video Generator Prompt Adherence in 2026

The landscape of generative video has shifted dramatically over the past year. While 2025 was defined by the struggle for basic temporal consistency, 2026 is the year of semantic precision. According to the "Best Text-to-Video AI 2026" benchmark published by Magic Hour Research on April 29, 2026, prompt adherence and scene stability are now the two most critical scorecards for creators. The industry has moved away from "lucky renders" toward a predictable, professional workflow where the AI understands the nuance between a "slight smirk" and a "joyful grin."

This evolution is largely driven by the integration of more sophisticated Large Language Models (LLMs) acting as the "brain" behind the video diffusion process. When we discuss ai video generator prompt adherence, we are essentially discussing the model's ability to translate linguistic syntax into visual pixels without losing information. According to Magic Hour Research, the gap between the highest and lowest performing models in prompt adherence has narrowed, but the top 1% of models still show a 40% higher accuracy in multi-object tracking compared to open-source alternatives.

How to Optimize for Maximum Prompt Adherence

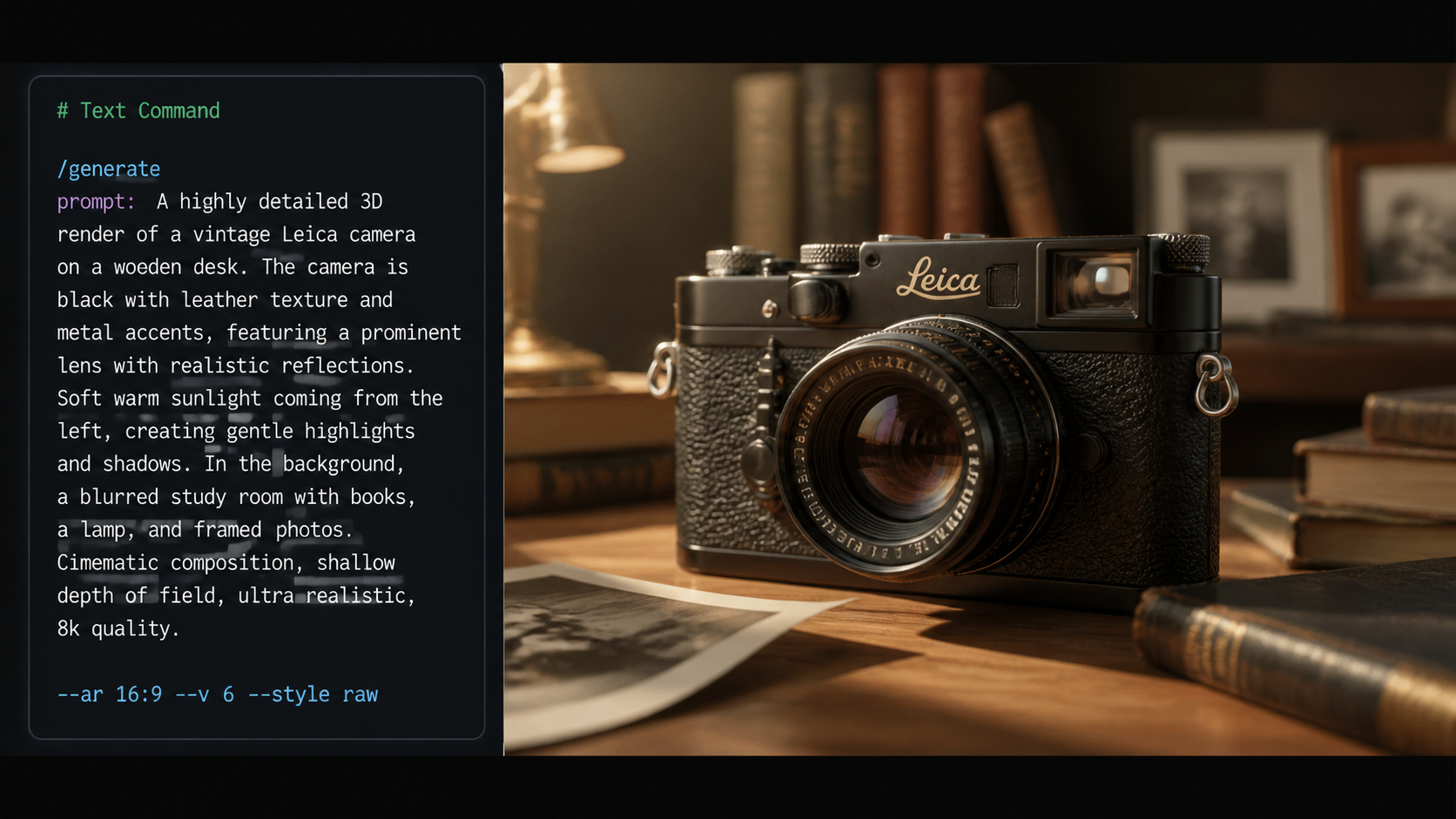

To get the most out of current technology, creators must follow a structured approach to prompting. The following steps are recommended for any professional creator using 2026-era tools:

- Define the Core Subject: Start with a clear description of the primary actor or object, including specific physical attributes.

- Establish the Environment: Use "spatial anchoring" terms (e.g., "in the background," "to the left of the lens") to help the AI map the 3D space.

- Specify Action and Motion: Describe the movement in stages, using temporal markers like "gradually," "simultaneously," or "subsequently."

- Apply Technical Camera Directives: Include cinematic language such as "low-angle tracking shot" or "50mm focal length" to constrain the AI's creative randomness.

- Iterate with Seed Control: Once a composition is close to your vision, use seed locking to tweak specific adherence points without changing the entire scene.

Comparing Top Performers: Adherence and Stability

The competitive field in 2026 has seen a surprising shift in rankings. According to a report by USA Today on April 10, 2026, a mystery AI video generator known as "Happy Horse 1.0" reached the No. 1 spot for video quality and adherence, surpassing industry veterans like OpenAI’s Sora and Google’s Veo. This shift highlights the importance of choosing the right tool for specific creative needs, as some models excel at photorealism while others prioritize literal adherence to complex instructions.

CNET’s April 2026 review of the "Best AI Video Generators" noted that while many models produce "beautiful" images, only a few can handle the "adherence stress test"—a prompt containing more than five distinct required elements. For professional creators, the ability to follow a script exactly is more valuable than aesthetic flair that ignores the brief. Below is a comparison of how the leading models of 2026 stack up based on recent benchmarks.

| Model Name | Adherence Score (1-10) | Scene Stability | Best Use Case |

|---|---|---|---|

| Happy Horse 1.0 | 9.8 | Excellent | Complex Narrative Scripts |

| Sora (2026 Update) | 9.4 | High | Cinematic Photorealism |

| Google Veo | 9.2 | Very High | Commercial Advertising |

| Stability AI (Video) | 8.5 | Moderate | Artistic & Stylized Content |

| Kling AI | 8.9 | High | Character Consistency |

Strategies for Improving AI Video Generator Prompt Adherence

To master ai video generator prompt adherence, one must understand the "token weight" phenomenon. In 2026, most video models process tokens linearly. Words placed at the beginning of a prompt are often given more weight than those at the end. If your video requires a specific color for a character’s shoes, but that detail is buried at the end of a 200-word paragraph, the AI may ignore it. Professional prompt engineering now involves "front-loading" critical requirements and using "reinforcement keywords" to ensure the model stays on track.

Another key strategy involves the use of "Negative Prompting" or "Constraint Blocks." While early models struggled to understand what *not* to do, the 2026 generation of video tools allows creators to explicitly list elements to exclude. This indirectly improves adherence by narrowing the "latent space" the AI explores, forcing it to focus more intently on the positive instructions provided in the main prompt. Tom's Guide, after 200 hours of testing, suggests that "contextual density"—the amount of detail provided about the lighting and atmosphere—actually helps the AI better place subjects within the frame, leading to higher adherence scores.

The Role of Scene Stability in Prompt Success

Prompt adherence is meaningless if the scene is not stable. Magic Hour Research’s 2026 scorecard emphasizes that a model might follow the prompt for the first two seconds, only for the subject to morph or the background to shift. High-adherence models in 2026 utilize "Temporal Attention Mechanisms" to ensure that an instruction like "the man is wearing a red hat" remains true for the entire 10 or 20-second clip. Without this stability, adherence is only partial, failing the requirements of professional film and marketing production.

Legal and Ethical Considerations in 2026

As prompt adherence becomes more precise, the ability to recreate specific styles or likenesses has raised significant legal questions. On November 5, 2025, Getty Images largely lost a major London lawsuit against Stability AI regarding image generators, a case that has set a precedent for the video industry in 2026. This ruling has prompted calls from lawyers and copyright owners for stronger protections, as highly adherent prompts can now be used to mimic the "look and feel" of copyrighted cinematography without direct copying.

For creators, this means that while ai video generator prompt adherence allows for incredible creative freedom, it also carries a responsibility to avoid infringing on the intellectual property of others. Many 2026 models have implemented "Style Filters" that prevent the AI from adhering to prompts that name specific living directors or cinematographers. Navigating these ethical boundaries is now as much a part of the creative process as writing the prompt itself. According to nerdbot.com, the most successful creators in 2026 are those who use AI to augment their original ideas rather than attempting to replicate existing protected works.

Technical Limitations of Literal Adherence

Despite the massive leaps in technology, there are still "physics gaps" in even the best 2026 models. A prompt might ask for "water flowing uphill," and while the AI may adhere to the *words*, the visual output might look "uncanny" because the model's training data is heavily weighted toward real-world physics. Understanding where the AI's internal logic clashes with your prompt is essential for maintaining high-quality output. Sometimes, lowering the "adherence weight" slightly allows the model to produce a more fluid, natural-looking video that still captures the essence of the prompt.

Future Outlook: The Road to 100% Precision

Looking toward the end of 2026 and into 2027, the industry is moving toward "multi-modal prompting." This involves using a combination of text, reference images, and even "motion sketches" to guide the AI. In this environment, ai video generator prompt adherence will no longer be limited by the ambiguities of human language. Instead, creators will provide a "world model" that the AI populates with high-fidelity motion. The benchmarks from Magic Hour Research suggest that we are currently at about 90-95% adherence for simple scenes, with the final 5%—the "holy grail" of perfect complex interaction—expected to be solved within the next 18 months.

As CNET noted in their 2026 review, the democratization of high-adherence video tools has allowed small indie creators to produce visuals that were previously only possible for major Hollywood studios. The key to staying competitive in this fast-moving field is continuous learning and adaptation to the specific "personalities" of different AI models. Each model has its own strengths; knowing which one adheres best to lighting vs. which one adheres best to character anatomy is the hallmark of a master AI cinematographer in 2026.

What is the best AI video generator for prompt adherence in 2026?

According to current benchmarks from USA Today and Magic Hour Research, Happy Horse 1.0 is currently ranked as the top model for prompt adherence, surpassing Sora and Veo in its ability to follow complex, multi-layered instructions.

How do I improve the accuracy of my AI video prompts?

You can improve accuracy by using "front-loading" techniques, where the most important details are placed at the beginning of the prompt, and by using cinematic terminology to ground the AI's spatial understanding.

Does high prompt adherence guarantee a good video?

Not necessarily. While adherence ensures the AI follows your instructions, "scene stability" is also required to ensure the video doesn't contain glitches or morphing subjects over time.

Are there legal risks to using highly adherent AI video tools?

Yes, as seen in the 2025 Stability AI lawsuit, there are ongoing concerns regarding copyright. Users should avoid prompts that specifically aim to replicate the protected style or likeness of others without permission.

What is "spatial anchoring" in AI video prompting?

Spatial anchoring is a technique where you use specific directional language (e.g., "in the foreground," "30 degrees to the right") to help the AI maintain a consistent 3D map of the scene, which significantly boosts adherence.

Comments ()