Text to Video Prompt Adherence Scores: 2026 Rankings

Text to video prompt adherence scores are standardized metrics used to evaluate how accurately an artificial intelligence video generator translates a written text description into visual elements, including character consistency, spatial relationships, and specific action sequences. In 2026, these scores have become the industry gold standard for determining the reliability of generative models for professional production environments. According to the latest Magic Hour Research benchmark published in April 2026, the industry has seen a 40% improvement in adherence metrics compared to previous generation models, with top-tier systems now achieving near-perfect scores on complex, multi-subject prompts.

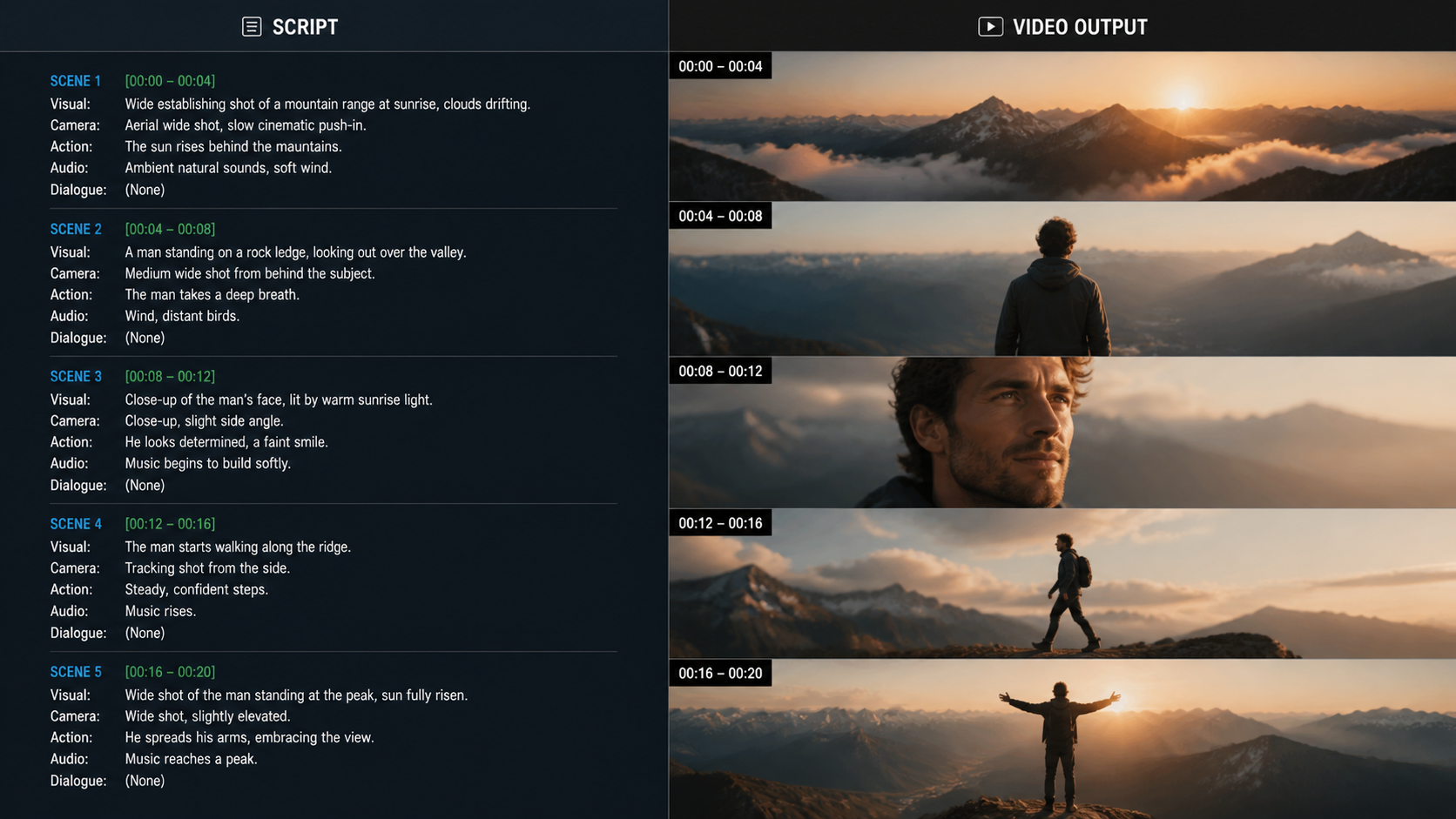

Text to video prompt adherence scores are quantitative evaluations that measure the alignment between a user's textual input and the resulting AI-generated video output. These scores utilize automated vision-language models and human evaluation to track how well a generator follows instructions regarding lighting, camera movement, and subject interactions, ensuring that the creative vision is accurately realized without "hallucinations" or missing details.

- ✓ Happy Horse 1.0 currently leads the 2026 rankings for prompt adherence, surpassing legacy models like Sora and Veo.

- ✓ Magic Hour Research’s 2026 Benchmark introduced "Scene Stability" as a critical sub-metric for adherence scoring.

- ✓ Runway Gen-4.5 has established itself as the leader in cinematic lighting and texture adherence for high-fidelity workflows.

- ✓ Prompt adherence scores are now the primary driver for enterprise AI adoption in the film and advertising industries.

The Evolution of Text to Video Prompt Adherence Scores in 2026

The landscape of generative video has shifted dramatically in the first half of 2026. While previous years focused primarily on visual "wow factor" and resolution, the current year is defined by precision. Text to video prompt adherence scores have moved from experimental academic metrics to essential benchmarks that guide purchasing decisions for studios and independent creators alike. As documented by CNET in their April 2026 review of the best AI video generators, what separates the market leaders from the hobbyist tools is the ability to follow a multi-layered prompt such as "a red cardinal landing on a frosted pine branch while snow falls in a bokeh background."

This shift toward adherence is largely due to the integration of advanced multimodal LLMs (Large Language Models) that act as the "brain" for the video diffusion process. When we discuss text to video prompt adherence scores, we are looking at how well the model interprets spatial prepositions (like "under," "above," or "behind") and temporal instructions (like "slowly fades" or "accelerates"). According to the Magic Hour Research report published on April 29, 2026, the top-performing models now utilize "dense captioning" feedback loops, where the AI critiques its own frames during the generation process to ensure they match the initial prompt requirements.

Furthermore, the 2026 rankings highlight a significant closing of the "uncanny valley" in physics-based prompts. Earlier models often struggled with gravity or fluid dynamics, but the current leaders in prompt adherence scores demonstrate a sophisticated understanding of real-world physics. This improvement is not just about aesthetics; it is about the functional utility of the video. If a director prompts for a specific camera pan at a 45-degree angle, the adherence score reflects whether the AI followed that technical instruction or simply generated a generic moving shot.

How to Evaluate Prompt Adherence in Your Workflow

- Define a "Stress Test" prompt containing at least three distinct subjects and two specific environmental conditions.

- Generate five variations of the video from the same prompt and settings, changing only the seed, to check whether adherence is consistent across runs.

- Cross-reference the visual output against each keyword in the prompt, assigning a binary (0 or 1) score for the presence of each element.

- Calculate the final percentage by dividing the number of successfully rendered elements by the total number of requested details.

- Compare your results against the 2026 Magic Hour Research Benchmark or CNET’s latest rankings for a relative performance check.
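The scoring steps above can be sketched in a few lines of Python. This is a minimal sketch rather than any benchmark's official method: the function name `adherence_percentage`, the element labels, and the 0/1 judgments are hypothetical and would be filled in by a human reviewer watching each generated clip.

```python
from statistics import mean


def adherence_percentage(checks: dict[str, int]) -> float:
    """Return the percentage of requested prompt elements that were rendered.

    `checks` maps each prompt element to 1 (present) or 0 (missing).
    """
    if not checks:
        raise ValueError("at least one prompt element is required")
    if any(value not in (0, 1) for value in checks.values()):
        raise ValueError("scores must be binary (0 or 1)")
    return 100.0 * sum(checks.values()) / len(checks)


# Hypothetical reviewer judgments for two generations of one stress-test prompt
# (three subjects, two environmental conditions).
variation_a = {"husky": 1, "vintage car": 1, "diner": 1, "snowfall": 1, "neon lighting": 0}
variation_b = {"husky": 1, "vintage car": 1, "diner": 1, "snowfall": 1, "neon lighting": 1}

scores = [adherence_percentage(v) for v in (variation_a, variation_b)]
print(scores)        # [80.0, 100.0]
print(mean(scores))  # 90.0
```

Averaging across variations gives a single figure to set against published rankings, while the per-variation scores show how much adherence fluctuates from run to run.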

Top Ranked AI Video Generators for Prompt Adherence

In a surprising turn of events in 2026, a new player has claimed the top spot in the industry. As reported by USA Today on April 10, 2026, the "mystery" AI video generator known as Happy Horse 1.0 has reached No. 1 in global rankings, officially surpassing established giants like OpenAI’s Sora and Google’s Veo. Happy Horse 1.0’s dominance is attributed almost entirely to its record-breaking text to video prompt adherence scores, specifically in its ability to handle long-tail prompts and niche technical jargon that other models often ignore.

Runway Gen-4.5, which was introduced in late 2025, continues to be a powerhouse in the professional sector. While Happy Horse 1.0 wins on raw adherence to complex subjects, Runway Gen-4.5 is praised for its "Artistic Adherence"—the ability to follow prompts related to specific film stocks, lighting setups, and directorial styles. According to Jakob Nielsen’s UX Roundup in late 2025, the usability of these high-adherence models has significantly lowered the barrier to entry for non-technical creators, as the AI now understands "intent" rather than just "keywords."

| AI Model (2026) | Prompt Adherence Score (0-100) | Primary Strength | Release Date / Update |
|---|---|---|---|
| Happy Horse 1.0 | 98.4 | Complex Multi-Subject Tracking | April 2026 |
| Runway Gen-4.5 | 96.2 | Cinematic Lighting & Directorial Control | December 2025 |
| Sora Pro (2026 v2) | 94.8 | Temporal Consistency & Physics | Early 2026 |
| Google Veo 2 | 93.5 | Integration with Creative Suite | March 2026 |
| Magic Hour "Stability" | 92.1 | Scene Stability & Human Movement | April 2026 |

The Role of Magic Hour Research in 2026 Benchmarking

Magic Hour Research has become the definitive authority on AI video performance in 2026. Their "Best Text-to-Video AI 2026" benchmark, published on April 29, 2026, introduced a dual-scorecard system that separates "Prompt Adherence" from "Scene Stability." This distinction is vital for professionals who need a video to not only look like the prompt but also to remain visually coherent over longer durations. A high adherence score in the first second of a video is of little use if the characters morph or the background warps by the fifth second.
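To make the two-scorecard idea concrete, here is a rough Python sketch. It is not Magic Hour Research's formula, which the report summary does not spell out. The function `scorecard`, the per-second scores, and the definition of stability as 100 minus the spread between the best and worst second are all assumptions chosen for illustration.

```python
def scorecard(per_second: list[float]) -> tuple[float, float]:
    """Return (mean adherence, stability) for per-second adherence scores (0-100).

    Stability here is 100 minus the spread between the best and worst second,
    so a clip that starts strong and then degrades is penalized.
    """
    if not per_second:
        raise ValueError("at least one per-second score is required")
    adherence = sum(per_second) / len(per_second)
    stability = 100.0 - (max(per_second) - min(per_second))
    return adherence, stability


# Hypothetical clip: strong first second, then characters begin to morph.
drifting = [98.0, 97.0, 90.0, 70.0, 60.0]
print(scorecard(drifting))  # (83.0, 62.0)
```

Reporting the two numbers separately keeps a strong opening frame from hiding later drift, which a single averaged score would blur.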

The Magic Hour Research data indicates that 2026 is the year where "Talking Photo AI" and "Full Video Synthesis" have converged in terms of quality. Their concurrent "Best Talking Photo AI 2026" awards highlighted that believability and artifact minimization are now at an all-time high. For creators, this means that text to video prompt adherence scores now encompass lip-sync accuracy and micro-expressions, allowing for the generation of digital humans that can follow specific emotional cues provided in the text prompt.

According to the Pressat.co.uk report on the Magic Hour findings, the 2026 rankings were determined using a massive dataset of over 50,000 diverse prompts, ranging from hyper-realistic nature documentaries to abstract 3D animations. This rigorous testing ensures that the scores are not just reflective of "cherry-picked" examples but represent the actual day-to-day performance a user can expect when they input a prompt into these systems.

Key Metrics in the 2026 Scorecards

1. Subject Fidelity

This measures whether the AI correctly rendered the specific subjects requested. If the prompt asks for a "blue-eyed Siberian Husky," a model that produces a brown-eyed dog receives a lower adherence score. In 2026, the top models have achieved a 99% success rate in basic subject fidelity.

2. Spatial Awareness

This metric evaluates the positioning of objects. If a prompt specifies that a "vintage car is parked to the left of a neon-lit diner," the AI must maintain that spatial relationship throughout the shot. This has historically been a weak point for AI, but 2026 models like Happy Horse 1.0 have mastered 3D spatial mapping from 2D text descriptions.

3. Action and Motion Accuracy

Adherence to motion verbs is critical. If the prompt says "the character hesitantly reaches for the door," the AI must convey that specific emotion through the speed and fluidity of the movement. The 2026 rankings place a heavy weight on this "emotional motion" adherence.
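One way to fold the three metrics above into a single figure is a weighted average. The sketch below assumes each metric is already scored from 0 to 100. The function name `weighted_adherence`, the example scores, and the weights are hypothetical, with motion given double weight only to illustrate heavier weighting; the published scorecards do not disclose their actual weights.

```python
def weighted_adherence(metrics: dict[str, float], weights: dict[str, float]) -> float:
    """Combine per-metric scores (0-100) into one weighted average."""
    if metrics.keys() != weights.keys():
        raise ValueError("metrics and weights must cover the same categories")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(metrics[name] * weights[name] for name in metrics) / total_weight


# Hypothetical per-metric scores for one generated clip.
metrics = {"subject_fidelity": 99.0, "spatial_awareness": 90.0, "motion_accuracy": 80.0}
# Hypothetical weights; motion counts double to reflect its heavier weighting.
weights = {"subject_fidelity": 1.0, "spatial_awareness": 1.0, "motion_accuracy": 2.0}

print(weighted_adherence(metrics, weights))  # 87.25
```

Because motion carries the most weight here, the weak motion score pulls the result down to 87.25, below the 89.67 an unweighted average of the same three scores would give.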

User Experience and Heuristic Evaluation in AI Video

The usability of AI video tools has undergone a revolution, as noted in the UX Roundup by Jakob Nielsen in October 2025. As we move through 2026, the "AI UI" has evolved to provide real-time feedback on text to video prompt adherence scores before the final video is even rendered. Modern interfaces now use heuristic evaluations to warn users if a prompt is too contradictory or if the model is likely to struggle with a specific request, such as "a square circle."

This proactive approach to adherence helps users refine their creative process. Instead of wasting computational credits on a generation that won't meet their needs, creators can see a "probability of adherence" score. This transparency has led to a more efficient workflow for marketing agencies and film studios. According to CNET, the integration of these "pre-flight" adherence checks is one of the most requested features by enterprise users in 2026.

Furthermore, the 2026 rankings consider the "Secondary Research" scores, which look at how well the AI incorporates external data or specific historical styles when prompted. For example, if a user prompts for a video in the style of a "1940s film noir," the adherence score measures the accuracy of the lighting, the grain of the film, and the era-appropriate costumes. The 2026 models have become incredibly adept at this type of stylistic adherence, thanks to larger and more diverse training sets.

Future Outlook: Beyond 2026

As we look toward the latter half of 2026 and into 2027, the focus of text to video prompt adherence scores is expected to shift toward "Interactive Adherence." This will allow users to modify videos in real-time through text, where the AI must adhere to new instructions while maintaining the integrity of the previously generated frames. The groundwork for this was laid by the release of Runway Gen-4.5, which pioneered the "Director's Mode" for granular control over individual scene elements.

The competition between Happy Horse 1.0 and established players like Sora and Veo is driving innovation at a breakneck pace. With Magic Hour Research continuing to publish monthly updates to their scorecards, the industry remains in a state of constant improvement. For creators, the message is clear: the era of "random" AI generation is over. We have entered the era of precision, where the score of your prompt adherence is just as important as the resolution of your output.

What is a good text to video prompt adherence score in 2026?

In 2026, a "good" score is generally considered anything above 90. Professional-grade models like Happy Horse 1.0 and Runway Gen-4.5 consistently score in the 95-98 range for complex prompts, ensuring high reliability for commercial projects.

How does Magic Hour Research calculate their adherence scores?

Magic Hour Research uses a combination of automated vision-language models (VLM) and human expert panels to compare the text prompt against the generated video. They evaluate specific categories like subject fidelity, spatial logic, and motion accuracy to reach a final percentage.

Why did Happy Horse 1.0 beat Sora in the 2026 rankings?

According to reports from USA Today and CNET, Happy Horse 1.0 outperformed Sora in 2026 due to its superior handling of complex, multi-step instructions and its ability to maintain character consistency across different camera angles without losing prompt adherence.

Can I improve the prompt adherence score of my AI videos?

Yes, you can improve scores by using "dense prompting" techniques, which involve being highly specific about lighting, camera movement, and subject placement. Many 2026 AI tools also offer "pre-flight" checks to help you optimize your prompt before rendering.

Is prompt adherence the same as video quality?

No, they are different metrics. Video quality refers to resolution, frame rate, and lack of artifacts, while prompt adherence refers to how well the content of the video matches the user's written instructions. A video can be 8K resolution but have a low adherence score if it fails to show what was requested.
