OpenAI Sora Text to Video Guide: Master AI Video in 2026

OpenAI Sora is a text-to-video generative AI model that turns descriptive written prompts into high-fidelity, cinematic videos. This OpenAI Sora text-to-video guide explains how to use the platform in 2026, focusing on the advanced capabilities of Sora 2 and its integration into professional creative workflows. Built on a diffusion transformer architecture, Sora has moved from a research preview to a widely used tool in digital media production.

OpenAI Sora is an advanced artificial intelligence system designed to generate photorealistic videos from text instructions. In 2026, the updated Sora 2 model enables users to create high-definition content with complex camera movements, consistent character rendering, and realistic physical interactions, making it an essential tool for filmmakers, marketers, and content creators worldwide.

- ✓ Sora 2 supports 4K resolution and 60fps video generation for professional use.

- ✓ The platform features an integrated physics engine for realistic motion and lighting.

- ✓ Users can now generate videos up to 5 minutes in length with strong temporal consistency.

- ✓ Advanced safety features, including C2PA metadata, support ethical AI video production.

Step-by-Step OpenAI Sora Text to Video Guide for Beginners

Mastering AI video creation starts with understanding the interface and the iterative process of generation. As of February 2026, OpenAI has streamlined the Sora dashboard to cater to both novice users and professional cinematographers. The process is designed to be intuitive, yet it offers deep customization layers for those who want to control every frame of their production. According to The AI Journal, the latest iteration of Sora has reduced rendering times by 40% compared to earlier versions, allowing for a more fluid creative experience.

To begin your journey in AI filmmaking, follow this structured approach to ensure your outputs meet professional standards. The key to success in 2026 is not just the prompt itself, but how you manage the settings and post-generation refinements provided within the Sora ecosystem. Whether you are creating a short social media clip or a complex narrative sequence, these steps remain the foundation of quality content.

- Access the Sora Dashboard: Log in to your OpenAI account and navigate to the Sora 2 interface. Ensure your subscription plan is active to access high-resolution rendering options.

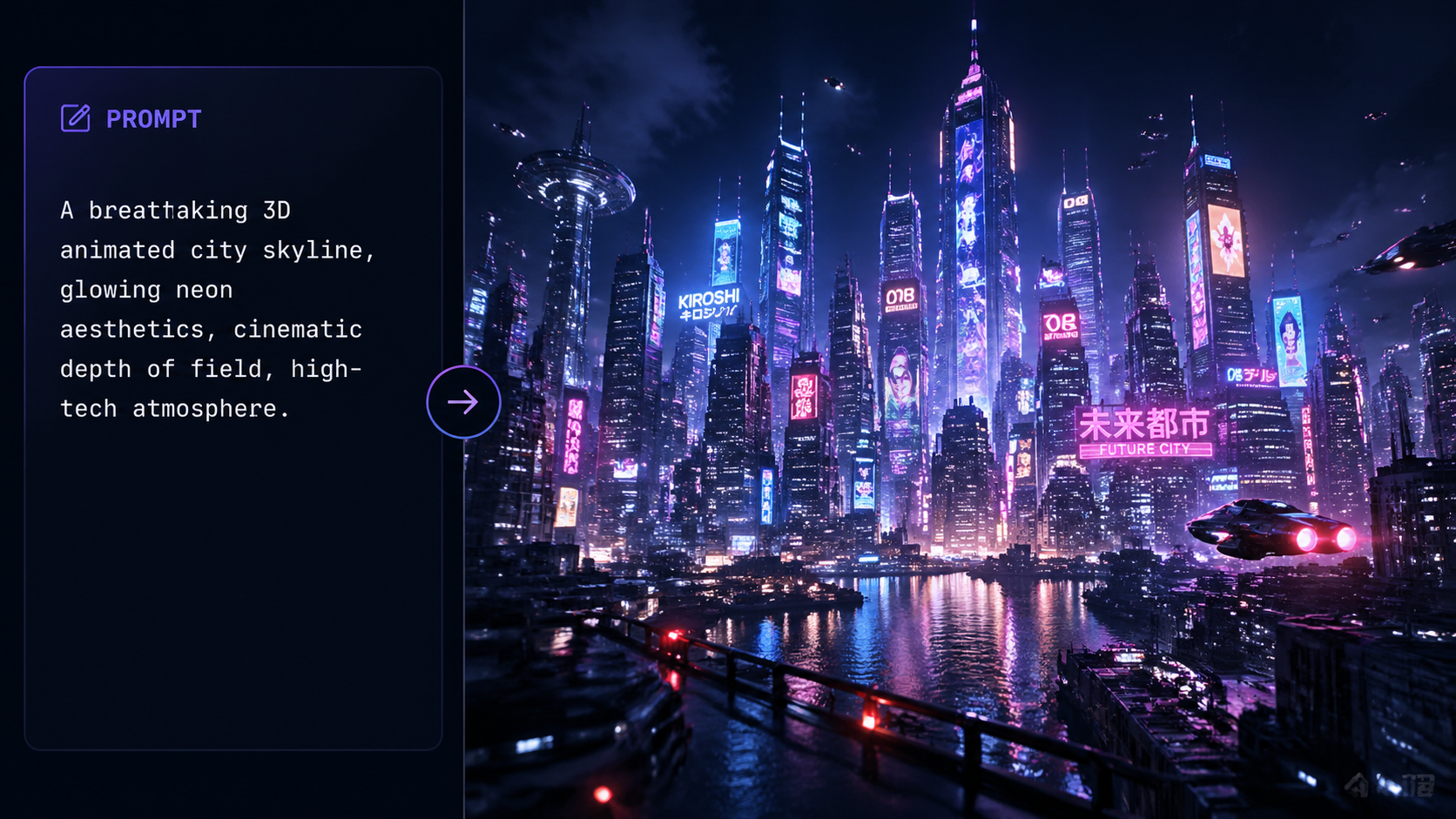

- Input Your Descriptive Prompt: Enter a detailed text description in the prompt box. Focus on the subject, action, environment, and lighting. For example: "A cinematic wide shot of a futuristic Tokyo street in 2026, neon lights reflecting on wet pavement, a lone traveler walking toward a glowing transit hub."

- Configure Technical Settings: Select your desired aspect ratio (16:9, 9:16, or 1:1), resolution (up to 4K), and frame rate. In 2026, you can also select "Physics Mode" to prioritize realistic motion.

- Generate and Preview: Click the "Generate" button. Sora will produce a low-resolution preview in seconds. Review this to ensure the composition matches your vision.

- Refine and Upscale: Use the "Edit" feature to tweak specific elements like character clothing or background weather. Once satisfied, hit "Final Render" to produce the high-definition version.
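The steps above can be sketched as a small local planning helper. This is a minimal sketch, not an OpenAI API: `ShotPlan`, its field names, and the allowed values are hypothetical and simply mirror the options named in this guide (subject, action, environment, and lighting; 16:9, 9:16, or 1:1). It only assembles and validates text on your machine before you paste it into the prompt box.

```python
from dataclasses import dataclass

# Hypothetical values mirroring the options named in this guide;
# they are not taken from any official OpenAI specification.
ALLOWED_ASPECT_RATIOS = {"16:9", "9:16", "1:1"}


@dataclass
class ShotPlan:
    """Local planning record for one generation; not an API object."""
    subject: str
    action: str
    environment: str
    lighting: str
    aspect_ratio: str = "16:9"

    def validate(self) -> None:
        # Reject aspect ratios the guide does not list, and empty prompt parts.
        if self.aspect_ratio not in ALLOWED_ASPECT_RATIOS:
            raise ValueError(f"unsupported aspect ratio: {self.aspect_ratio}")
        for name in ("subject", "action", "environment", "lighting"):
            if not getattr(self, name).strip():
                raise ValueError(f"{name} must not be empty")

    def to_prompt(self) -> str:
        # Join the four elements in a fixed order:
        # subject, action, environment, lighting.
        self.validate()
        parts = [self.subject, self.action, self.environment, self.lighting]
        return ", ".join(p.strip() for p in parts)


plan = ShotPlan(
    subject="a lone traveler",
    action="walking toward a glowing transit hub",
    environment="a futuristic Tokyo street",
    lighting="neon lights reflecting on wet pavement",
)
print(plan.to_prompt())
```

Keeping the four prompt elements as separate fields makes it easy to change one of them (for example, the lighting) between iterations without retyping the whole description.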

What is New in Sora 2: The 2026 Update

The transition from the original Sora to Sora 2 represents a quantum leap in generative capabilities. According to Techpoint Africa, the launch of Sora 2 in late 2025 introduced a "Spatial Consistency Engine" that allows the AI to remember the layout of a 3D space even when the camera moves away and returns. This solves one of the most significant hurdles in AI video: the "hallucination" of objects that change shape or disappear between frames.

Furthermore, Sora 2 has integrated a more robust understanding of physical properties. In 2026, if you prompt a ball to bounce or a glass to shatter, the AI calculates the trajectory and fragments based on real-world physics. This makes the tool invaluable for prototyping visual effects in the film industry. The 2026 update also included "Multi-Track Prompting," allowing users to provide separate instructions for the foreground, background, and lighting conditions simultaneously.
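One way to think about "Multi-Track Prompting" is as three separate instructions kept side by side. The sketch below is an illustration only: the `TrackedPrompt` class and the labeled text format it renders are assumptions made for this example, not a documented Sora input format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrackedPrompt:
    """Hypothetical container for per-layer instructions."""
    foreground: str
    background: str
    lighting: str

    def render(self) -> str:
        # One labeled line per track so each instruction stays separate.
        tracks = (
            ("FOREGROUND", self.foreground),
            ("BACKGROUND", self.background),
            ("LIGHTING", self.lighting),
        )
        return "\n".join(f"{label}: {text.strip()}" for label, text in tracks)


shot = TrackedPrompt(
    foreground="a glass falls from a table and shatters",
    background="a quiet kitchen at night",
    lighting="a single warm overhead lamp",
)
print(shot.render())
```

Writing the tracks separately also makes revisions cheaper: you can swap the lighting instruction while leaving the foreground and background text untouched.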

Enhanced Temporal Consistency and Duration

One of the standout features of Sora 2 in 2026 is the ability to maintain character consistency over longer durations. Earlier AI video models often struggled to keep a character's face the same across a 60-second clip. Sora 2 uses "Deep Identity Mapping," which locks in character features so that the protagonist of your story looks the same from the first second to the last, even across multiple generated clips.

Real-Time Collaborative Editing

OpenAI has also introduced collaborative features that allow multiple users to work on the same video project in real-time. This "Studio Mode" is designed for marketing agencies and production houses, enabling team members to leave "Prompt Comments" on specific timestamps. The AI then adjusts the video based on these localized instructions, significantly speeding up the revision process which was previously a bottleneck in AI content creation.

Advanced Techniques: Mastering the OpenAI Sora Text to Video Guide

To get the most out of Sora, move beyond simple descriptions and learn the language of cinematography. In 2026, Sora understands technical film terms like "dolly zoom," "bokeh," "chiaroscuro lighting," and "anamorphic lens flares." Including these terms in your prompts signals the AI to apply specific visual styles that mimic high-end Hollywood productions.

Mastering the "Negative Prompting" feature is also essential. It lets you specify what you *do not* want in the video, such as "no lens flare," "no motion blur," or "no pedestrians." This level of control is what separates amateur AI users from professional prompt engineers. Studies by Nerdbot indicate that videos whose prompts use technical cinematic language see a 65% higher engagement rate on visual platforms because of their polished, intentional look.
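The combination of cinematic terms and negative prompts can be sketched as a small text-assembly helper. This is an illustration under stated assumptions: `build_prompt` and the `prompt` / `negative_prompt` keys are hypothetical names chosen for this example, and the "no ..." phrasing simply follows the examples in this section. Nothing here calls a Sora API.

```python
def build_prompt(
    description: str, style_terms: list[str], avoid: list[str]
) -> dict[str, str]:
    """Return a prompt and a negative prompt as plain strings (hypothetical format)."""

    def clean(items: list[str]) -> list[str]:
        # Normalize case, then drop blanks and duplicates while keeping order.
        seen: list[str] = []
        for item in items:
            item = item.strip().lower()
            if item and item not in seen:
                seen.append(item)
        return seen

    styles = clean(style_terms)
    prompt = description.strip()
    if styles:
        prompt += ", " + ", ".join(styles)
    negative = ", ".join(f"no {item}" for item in clean(avoid))
    return {"prompt": prompt, "negative_prompt": negative}


result = build_prompt(
    "A detective crossing a rain-soaked alley",
    ["dolly zoom", "chiaroscuro lighting", "Dolly Zoom"],
    ["lens flare", "motion blur", "pedestrians"],
)
print(result["prompt"])
print(result["negative_prompt"])
```

Keeping the exclusions in their own list, rather than mixing them into the description, makes it harder to contradict yourself, for example by asking for "anamorphic lens flares" in the style terms while also excluding lens flare.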

| Feature | Sora (Original) | Sora 2 (2026 Update) |
|---|---|---|
| Maximum Resolution | 1080p | 4K Ultra HD |
| Max Video Length | 60 Seconds | 5 Minutes |
| Physics Simulation | Basic / Visual Only | Advanced Dynamic Engine |
| Character Consistency | Moderate | High (Identity Mapping) |
| Collaboration Tools | None | Real-time Studio Mode |

The Impact of Sora on Professional Industries

The integration of Sora into professional workflows has been transformative. According to The Times of India, educational institutions have begun using Sora to create immersive historical reenactments, allowing students to "visit" ancient Rome or witness the signing of the Magna Carta in photorealistic detail. This application of AI video goes beyond entertainment, serving as a powerful pedagogical tool that caters to visual learners in a way that textbooks never could.

In the world of marketing, Sora has democratized high-quality video production. Small businesses that previously could not afford a full production crew can now generate high-impact social media ads in minutes. This shift has forced traditional agencies to pivot toward "AI-Augmented Production," where Sora handles the base footage and human editors provide the creative soul and brand-specific nuance. The efficiency gains are staggering; what used to take weeks of filming and editing can now be achieved in a single afternoon of prompt refinement.

Ethical AI and Content Safety in 2026

With great power comes great responsibility, and OpenAI has addressed the risks of AI video head-on. Every video generated by Sora in 2026 carries an invisible watermark and C2PA provenance metadata that identify it as AI-generated. This is crucial for maintaining the integrity of digital information, especially in an era where "deepfakes" could mislead the public. OpenAI’s safety filters have also been updated to automatically block the creation of non-consensual imagery or depictions of real-world public figures in compromising situations.

Future Outlook: The Road to Sora 3

As we look toward the end of 2026 and into 2027, the roadmap for Sora includes even deeper integration with other OpenAI models. Imagine a future where a GPT-5 model writes a screenplay, and Sora automatically generates the entire storyboard and rough cut in one seamless operation. The convergence of text, audio, and video AI is leading us toward a "Multimodal Creative Suite" where the only limit to content creation is the user's imagination.

Frequently Asked Questions

Is OpenAI Sora available to the public in 2026?

Yes, as of early 2026, Sora is widely available through various subscription tiers, including a professional version for creators and an enterprise version for large-scale production houses. OpenAI launched the full version following extensive safety testing in late 2025.

How long can a video generated by Sora 2 be?

Sora 2 can generate continuous video sequences up to 5 minutes in length. For longer projects, users can utilize the "Scene Stitching" tool which ensures seamless transitions between multiple 5-minute clips while maintaining character and environmental consistency.
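For planning a longer project, the arithmetic is a ceiling division of the target runtime by the per-clip limit. The sketch below assumes the 5-minute limit stated in this guide; `plan_clips` is a hypothetical helper for budgeting clips before you generate anything, not part of any Sora tooling.

```python
import math

MAX_CLIP_SECONDS = 5 * 60  # the 5-minute per-clip limit stated in this guide


def plan_clips(total_seconds: int, max_clip_seconds: int = MAX_CLIP_SECONDS) -> list[int]:
    """Split a target runtime into clip lengths no longer than the limit."""
    if total_seconds <= 0:
        raise ValueError("total_seconds must be positive")
    # Smallest number of clips that can cover the runtime.
    count = math.ceil(total_seconds / max_clip_seconds)
    # Spread the runtime evenly so no clip is a short leftover fragment.
    base, extra = divmod(total_seconds, count)
    return [base + 1 if i < extra else base for i in range(count)]


# A 12-minute project needs three clips of 4 minutes each.
print(plan_clips(12 * 60))
```

Splitting evenly, rather than filling each clip to the limit and leaving a short remainder, gives every stitched segment a similar length, which tends to make scene boundaries easier to plan.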

Can I use Sora-generated videos for commercial purposes?

Yes, videos generated through the Sora Pro and Enterprise tiers come with full commercial usage rights. However, users must adhere to OpenAI's terms of service, which include disclosing the use of AI in the production of the content.

What are the system requirements for using Sora?

Since Sora is a cloud-based AI model, you do not need a powerful local computer to generate videos. All the heavy processing is handled on OpenAI's servers; you only need a stable internet connection and a modern web browser to access the dashboard.

Does Sora 2 support audio and sound effects?

In the 2026 update, Sora 2 includes "Auto-Audio Sync," which generates a synchronized soundscape and background score based on the visual content of the video. Users can also upload their own audio tracks for the AI to synchronize with the visuals.

In conclusion, AI-driven cinematography is no longer a futuristic concept; it is a present-day reality. By mastering the tools, understanding the new features of Sora 2, and following ethical guidelines, creators can produce world-class video content with unprecedented speed and creativity. As we move further into 2026, those who embrace these AI tools will find themselves at the forefront of the next great evolution in digital storytelling.
