How to Use OpenAI Sora: 2026 Complete Guide to Video AI

Using OpenAI Sora in 2026 means working either inside the ChatGPT ecosystem or in the standalone Sora Pro dashboard. You enter a descriptive text prompt, select cinematic parameters such as aspect ratio and motion intensity, and generate high-fidelity video clips up to 60 seconds long. Although OpenAI has recently pivoted its video strategy, the core functionality remains accessible to legacy enterprise users and creative partners through the integrated Creative Cloud plugins.

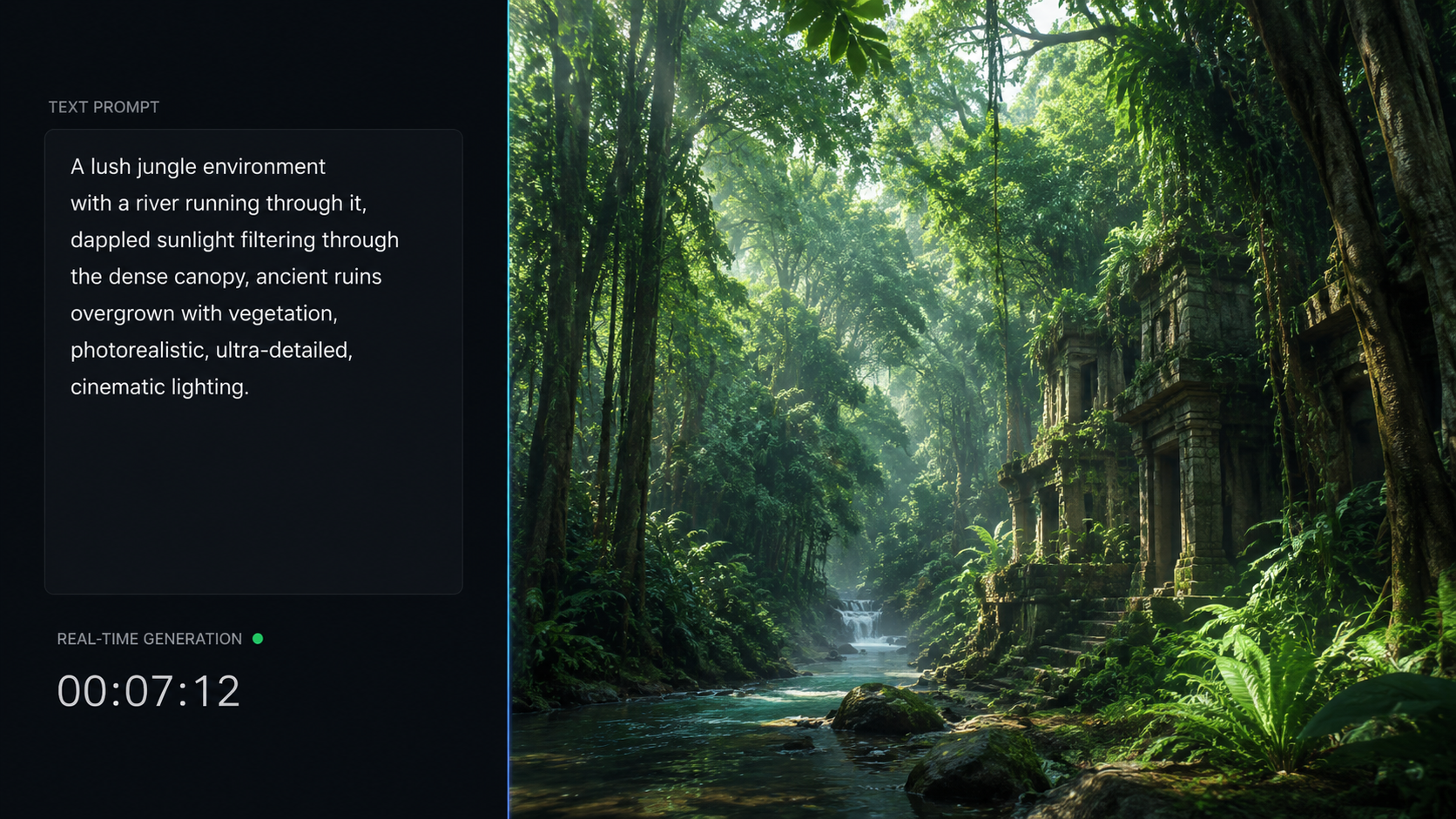

OpenAI Sora is a text-to-video generative model capable of creating realistic and imaginative scenes from natural language instructions. By leveraging a diffusion transformer architecture, it processes spatial and temporal data to generate temporally consistent video content. Users work with Sora by entering detailed prompts, adjusting camera settings, and using "motion brushes" to guide the movement of specific objects within the frame.

- ✓ Master the "Temporal Prompting" technique to ensure character consistency across multiple 60-second clips.

- ✓ Utilize the 2026 "Director’s Mode" to control camera pans, tilts, and zooms with granular precision.

- ✓ Understand the current lifecycle of the tool following OpenAI’s strategic shift toward the "ChatGPT Images 2" architecture.

- ✓ Implement safety protocols and C2PA metadata standards required for all AI-generated video exports.

Step-by-Step Guide: How to Use OpenAI Sora for Professional Video

Navigating the Sora interface requires a blend of creative writing and technical understanding. In early 2026, OpenAI streamlined the user experience to make video generation as intuitive as image generation. The current workflow emphasizes "iterative refinement," allowing users to tweak specific segments of a video without regenerating the entire clip from scratch. This saves both compute credits and time for professional editors.

Before starting, ensure your account has the necessary "Video Compute Units" (VCUs). According to recent reports from understandingai.org, OpenAI transitioned to a credit-based system in March 2026 to manage the high server costs associated with Sora's diffusion process. Once your credits are active, follow these steps to generate your first cinematic masterpiece.

- Access the Sora Dashboard: Log into your OpenAI account and select the "Sora" tab from the sidebar, or open the Sora plugin in your preferred NLE (Non-Linear Editor).

- Enter Your Base Prompt: Type a detailed description of the scene. Include the subject, setting, lighting conditions, and specific camera movements (e.g., "A cinematic drone shot of a neon-lit Tokyo street in the rain").

- Configure Technical Settings: Choose your aspect ratio (16:9, 9:16, or 1:1) and resolution. In 2026, Sora supports up to 4K resolution for Pro-tier users.

- Apply Motion Sensitivity: Use the slider to determine how much movement occurs in the scene. A lower setting is ideal for "talking head" style clips, while a higher setting is better for action sequences.

- Generate and Refine: Click "Generate." Once the initial 10-second preview is ready, use the "Extend" feature to build the video out to its full 60-second duration.
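The settings chosen in the steps above can be sketched as a small validation helper. This is a minimal illustration and not an OpenAI API: the class `ClipSettings`, its field names, the 0.0 to 1.0 motion range, and the assumption that each "Extend" pass adds another 10 seconds are all hypothetical. Only the aspect ratios, the 10-second preview, and the 60-second limit come from the steps themselves.

```python
import math
from dataclasses import dataclass

# Values taken from the steps above.
ALLOWED_ASPECT_RATIOS = {"16:9", "9:16", "1:1"}
PREVIEW_SECONDS = 10
MAX_SECONDS = 60


@dataclass
class ClipSettings:
    """Hypothetical container for the settings chosen in the dashboard."""
    prompt: str
    aspect_ratio: str = "16:9"
    motion: float = 0.5  # assumed slider range: 0.0 (nearly still) to 1.0 (action)
    target_seconds: int = MAX_SECONDS

    def validate(self) -> None:
        """Raise ValueError if any setting falls outside the documented options."""
        if not self.prompt.strip():
            raise ValueError("prompt must not be empty")
        if self.aspect_ratio not in ALLOWED_ASPECT_RATIOS:
            raise ValueError(f"unsupported aspect ratio: {self.aspect_ratio}")
        if not 0.0 <= self.motion <= 1.0:
            raise ValueError("motion must be between 0.0 and 1.0")
        if not PREVIEW_SECONDS <= self.target_seconds <= MAX_SECONDS:
            raise ValueError("target_seconds must be between 10 and 60")

    def extend_passes(self, seconds_per_extend: int = PREVIEW_SECONDS) -> int:
        """Extend passes needed after the initial preview (assumed 10 s per pass)."""
        remaining = self.target_seconds - PREVIEW_SECONDS
        return math.ceil(remaining / seconds_per_extend)


settings = ClipSettings(
    prompt="A cinematic drone shot of a neon-lit Tokyo street in the rain",
    aspect_ratio="16:9",
    motion=0.8,
)
settings.validate()
print(settings.extend_passes())  # 5 passes to go from 10 s to 60 s
```

Under these assumptions, a full 60-second clip needs five "Extend" passes after the preview, which is a useful number to keep in mind when budgeting credits.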

Optimizing Your Prompts: How to Use OpenAI Sora Effectively

The secret to high-quality video lies in the prompt structure. In 2026, the Sora model has been trained on a much wider array of "physics-compliant" data, so it models the interaction of gravity and light more accurately than previous iterations. To get the most out of the tool, use a "Layered Prompting" approach: describe the background first, then the midground actors, and finally stylistic overlays such as film grain or color grading.
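One way to keep the layers in a fixed order is to assemble the prompt from separate pieces. The sketch below is only an illustration of the "Layered Prompting" idea: the function name `build_layered_prompt`, the `Style:` label, and the sentence-joining format are assumptions, not a format that Sora is known to require.

```python
def build_layered_prompt(background: str, midground: str, style: list[str]) -> str:
    """Join prompt layers in the order: background, midground, style overlays."""
    layers = [background.strip(), midground.strip()]
    overlays = ", ".join(s.strip() for s in style if s.strip())
    if overlays:
        layers.append(f"Style: {overlays}")
    # Drop empty layers and normalise trailing periods before joining.
    sentences = [layer.rstrip(".") for layer in layers if layer]
    if not sentences:
        raise ValueError("at least one layer must be non-empty")
    return ". ".join(sentences) + "."


prompt = build_layered_prompt(
    background="A neon-lit Tokyo street in the rain",
    midground="A cyclist weaves between slow-moving taxis",
    style=["35mm film grain", "teal-and-orange color grade"],
)
print(prompt)
```

Keeping each layer in its own argument makes it easy to change the style overlays while leaving the scene description untouched between iterations.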

The Physics of Prompting

When you are learning how to use OpenAI Sora, you must account for temporal consistency. For example, if you describe a person walking, you should specify their gait and the surface they are walking on. According to technical documentation from OpenAI released in February 2026, the model now utilizes "Spatial-Temporal Patches," which means it views the video as a 3D block of data rather than a series of flat frames. This allows for much more realistic movement of hair, fabric, and water.
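To make the "3D block" idea concrete, the sketch below counts how many patches a clip would be split into if it were cut along time, height, and width at once. The patch dimensions (4 frames by 16 by 16 pixels), the clip size, and the function name are illustrative assumptions; the patch size Sora actually uses is not stated here.

```python
def count_spacetime_patches(frames: int, height: int, width: int,
                            patch: tuple[int, int, int] = (4, 16, 16)) -> int:
    """Count the (time, height, width) patches that tile a video volume.

    The default patch size is an illustrative assumption.
    """
    pt, ph, pw = patch
    if frames % pt or height % ph or width % pw:
        raise ValueError("clip dimensions must be divisible by the patch size")
    return (frames // pt) * (height // ph) * (width // pw)


# A 10-second clip at 24 fps and 1280x720: 240 frames of 720 rows by 1280 columns.
print(count_spacetime_patches(frames=240, height=720, width=1280))  # 216000
```

Each patch spans several consecutive frames, so motion inside the patch (a strand of hair, a ripple of water) is represented as a single unit instead of being reconstructed frame by frame.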

Using Negative Prompts and Constraints

Just as important as what you want is what you don't want. Use the "Constraints" box to prevent common AI artifacts like "floating limbs" or "morphing backgrounds." By 2026, the negative prompting field has become a standard feature for professional workflows, helping the AI adhere to the laws of physics and maintain a consistent art style throughout the generation process.
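If you manage prompts in scripts, it can help to keep constraints as a separate, de-duplicated list next to the main prompt. The sketch below is a hypothetical data shape only: the function `build_request` and the field names `prompt` and `constraints` are assumptions for illustration and do not describe a documented OpenAI request format.

```python
def build_request(prompt: str, constraints: list[str]) -> dict:
    """Pair a prompt with de-duplicated negative constraints (hypothetical fields)."""
    seen = set()
    cleaned = []
    for item in constraints:
        key = item.strip().lower()
        # Skip blanks and case-insensitive duplicates, keeping first-seen order.
        if key and key not in seen:
            seen.add(key)
            cleaned.append(item.strip())
    return {"prompt": prompt.strip(), "constraints": cleaned}


request = build_request(
    "A dancer spinning on a rooftop at dusk",
    ["floating limbs", "Floating limbs ", "morphing backgrounds"],
)
print(request["constraints"])  # ['floating limbs', 'morphing backgrounds']
```

Keeping the list in one place means the same constraints can be reused across every clip in a project.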

Comparing Sora Versions: 2025 vs. 2026 Features

The evolution of Sora has been rapid. While the initial release focused on short, often surreal clips, the 2026 version (often referred to as Sora 1.5 or Sora Pro) introduced significant upgrades in duration and resolution. However, as noted by CNET in April 2026, OpenAI has begun integrating these features into a broader "ChatGPT Images 2" model, which combines static and moving image generation into a single unified engine.

| Feature | Sora (Late 2025) | Sora / ChatGPT Images 2 (2026) |
|---|---|---|
| Max Video Length | 15 seconds | 60 seconds |
| Resolution | 1080p max | Up to 4K UHD |
| Frame Rate | 24 fps | Up to 60 fps |
| Physics Engine | Experimental | Advanced / Gravity-Aware |
| Audio Generation | None (silent) | Integrated Foley & Ambient Sound |

The Future of Video AI: Why OpenAI is Shifting Strategy

Despite the technical brilliance of the platform, the landscape for Sora changed significantly in the spring of 2026. According to Tech Policy Press, the "One-Stop AI Slop Shop" era of Sora faced intense scrutiny from policymakers regarding copyright and deepfakes. This led OpenAI to announce a "sunsetting" of the standalone Sora app in March 2026, choosing instead to fold the technology into more secure, enterprise-focused tools.

For those wondering how to use OpenAI Sora during this transition, the focus has shifted toward "Safe-Generation" environments. This means that while the raw power of Sora is still available, it is now wrapped in layers of digital watermarking and content provenance tools. As The Ankler reported in April 2026, Hollywood studios were initially wary of the tool, but have since adopted the underlying architecture for pre-visualization and "digital scouting" rather than final-frame replacement.

Integration with Creative Suites

Rather than a standalone web portal, most professionals now access Sora's capabilities through API integrations. This allows for a "hybrid workflow" where the AI generates the base footage, which is then immediately editable in software like Adobe Premiere or DaVinci Resolve. This move was a direct response to feedback from creators who, like the writer for Business Insider in late 2025, found the standalone experience "wild" but eventually "boring" without deeper editing controls.

The Rise of ChatGPT Images 2

The most significant development in 2026 is the birth of "ChatGPT Images 2." As CNET detailed, OpenAI built this new model after realizing that users wanted a seamless transition between still images and video. This new model allows you to take any generated image and "animate" it using Sora’s temporal logic, effectively making every image a potential 60-second movie scene.

Safety and Ethical Use of Video AI

As you learn how to use OpenAI Sora, you must remain compliant with the latest AI safety standards. In 2026, every video generated by Sora carries embedded C2PA provenance metadata that identifies the content as AI-generated. Attempting to strip this metadata can lead to account suspension, as OpenAI has doubled down on its commitment to "Responsible Media."

Furthermore, the 2026 update introduced "Biometric Blocking," which prevents the generation of any video containing the likeness of a real person without their explicit, cryptographically signed permission. This was a necessary step to combat the rise of non-consensual deepfakes that plagued the early days of generative video. Users are encouraged to use the "Character Creator" tool to design unique, non-real actors for their projects.

Frequently Asked Questions about Using Sora

Is OpenAI Sora still available for public use in 2026?

While the standalone Sora app was shut down in March 2026, the technology remains accessible through the "ChatGPT Images 2" update and professional API integrations for enterprise users. Legacy users with existing subscriptions still have access to the core video generation features.

How long does it take to generate a 60-second video?

Due to the massive compute requirements, a full 60-second 4K video typically takes between 10 and 20 minutes to render. However, "Preview Mode" allows you to see a low-resolution version in under 2 minutes to ensure the prompt is working as intended.

Can I upload my own images for Sora to animate?

Yes, the 2026 version of the tool allows for "Image-to-Video" workflows. You can upload any high-resolution image, and Sora will use it as the first frame, extending the scene based on your text instructions.

What are the system requirements for using Sora?

Since the processing happens on OpenAI’s servers, you do not need a powerful GPU. You only need a stable internet connection and a browser or application that supports the OpenAI API or ChatGPT interface.

Does Sora generate sound for the videos?

As of the early 2026 updates, Sora includes an integrated audio engine that generates synchronized ambient sounds and basic Foley effects, though complex dialogue still requires secondary AI voice tools.

Understanding how to use OpenAI Sora in 2026 is about more than just typing prompts; it is about mastering the collaboration between human creativity and machine physics. While the platform has evolved from a standalone "novelty" into a specialized professional tool, its power to transform text into cinematic reality remains the gold standard for the industry. By following the structured workflow and staying within the ethical guidelines, creators can use Sora to produce content that was previously impossible without a multi-million-dollar studio budget.
