Adobe Firefly AI Video Creation: 2026 Guide to Tools

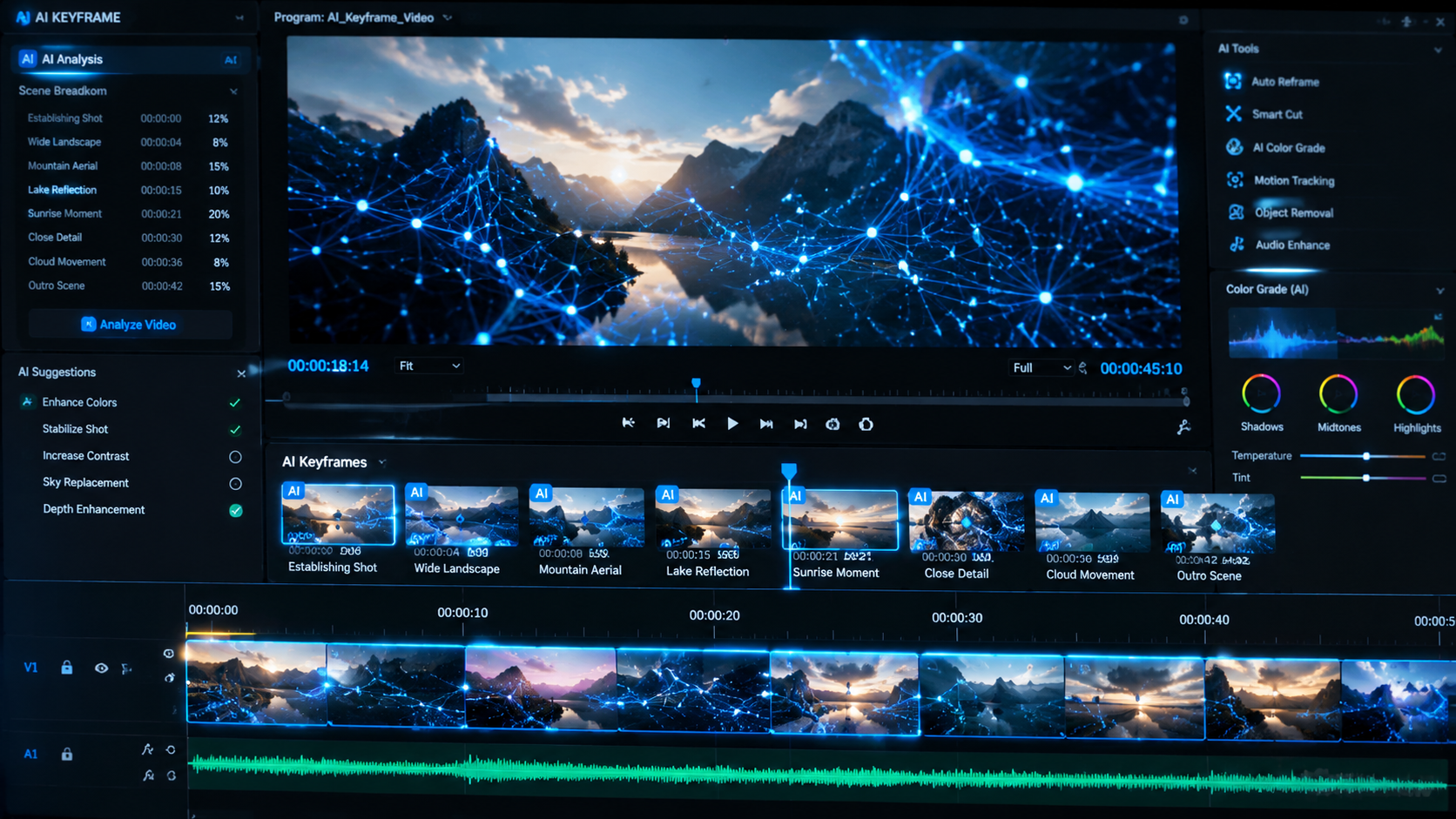

Adobe Firefly AI video creation refers to the suite of generative artificial intelligence tools within the Adobe ecosystem designed to automate and enhance video production through text-to-video, image-to-video, and advanced generative editing. As of 2026, Adobe has integrated these models directly into Premiere Pro and the Firefly web application, allowing creators to generate high-fidelity B-roll, extend clips, and automate color grading using natural language prompts. This evolution marks a shift from manual frame manipulation to a prompt-based creative workflow powered by the latest Firefly Video Models.

Adobe Firefly AI video creation is a generative AI technology that allows users to produce high-quality cinematic footage and edit existing video assets using text or image prompts. By 2026, it has become a cornerstone of professional workflows, offering features like Generative Extend, automated translation, and "Creative Agents" that assist in the end-to-end production process within Adobe Premiere Pro.

- ✓ Firefly Video Models have supported unlimited generations for premium subscribers since late 2025.

- ✓ New "Creative Agents" act as AI production assistants to streamline complex editing tasks.

- ✓ Integrated generative features in Premiere Pro allow for seamless clip extension and object removal.

- ✓ Advanced AI translation and lip-syncing tools are now standard for global content distribution.

- ✓ All Firefly-generated content includes Content Credentials for ethical AI transparency.

The Evolution of Adobe Firefly AI Video Creation in 2026

The landscape of digital storytelling has been fundamentally reshaped by the advancements in Adobe Firefly AI video creation. Since the pivotal updates in late 2025 and the major releases in April 2026, the toolset has moved beyond simple experimentation into the realm of high-end professional production. Adobe has successfully bridged the gap between generative AI and the traditional timeline-based editing environment of Premiere Pro, creating a hybrid workflow that respects the precision required by editors while harnessing the speed of AI.

According to reports from Business Wire in April 2026, Adobe ushered in this new era by introducing "Creative Agents," which are specialized AI entities capable of understanding creative intent. These agents don't just generate a single clip; they understand the context of a project, suggesting color palettes, transition styles, and even assisting with the assembly of rough cuts. This integration ensures that Adobe Firefly AI video creation remains a collaborative tool rather than a replacement for human creativity, focusing on removing the "drudgery" of technical tasks.

Furthermore, the scalability of these tools has reached a new milestone. As noted by Forbes, Adobe's decision to offer unlimited generations for specific models has democratized high-quality video production. This move was a response to the growing demand for short-form content and personalized marketing videos, where the volume of required assets often outpaces the capacity of traditional production teams. By 2026, the Firefly Video Model has been optimized for speed, allowing for near-instant previews of complex generative effects.

Step-by-Step: How to Use Adobe Firefly AI Video Creation

- Access the Firefly Video Portal: Log into the Adobe Firefly web interface or open the latest version of Adobe Premiere Pro (2026 edition).

- Select Your Generation Mode: Choose between "Text-to-Video" for creating footage from scratch or "Generative Extend" to add frames to an existing clip.

- Input Your Prompt: Describe the scene, including lighting (e.g., "cinematic golden hour"), camera movement (e.g., "slow drone pan"), and subject matter.

- Upload Reference Assets: Optionally, upload a still image to guide the visual style or a "Structure Reference" to dictate the composition of the video.

- Refine with Creative Agents: Use the AI agent to suggest variations in color grading or to automatically match the generated clip to your existing timeline's aesthetic.

- Export with Content Credentials: Finalize your render, ensuring the "Content Credentials" metadata is attached to verify the use of generative AI in the production process.
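The prompt guidance in the steps above (subject, lighting, camera movement) is easier to keep consistent across a batch of shots if the parts are assembled the same way every time. The sketch below is illustrative only: `ShotPrompt` and `build_prompt` are hypothetical names, and the code produces plain text to paste into the prompt field. It does not call any Adobe API.

```python
from dataclasses import dataclass


@dataclass
class ShotPrompt:
    """Hypothetical container for the parts of a text-to-video prompt."""
    subject: str
    lighting: str = ""
    camera_movement: str = ""


def build_prompt(shot: ShotPrompt) -> str:
    """Join the non-empty parts into one comma-separated prompt string."""
    parts = [shot.subject, shot.lighting, shot.camera_movement]
    return ", ".join(p.strip() for p in parts if p.strip())


shot = ShotPrompt(
    subject="a lighthouse on a rocky coast",
    lighting="cinematic golden hour",
    camera_movement="slow drone pan",
)
print(build_prompt(shot))
# a lighthouse on a rocky coast, cinematic golden hour, slow drone pan
```

Keeping the parts in separate fields makes it simple to vary one element, such as the camera movement, while holding the rest of the description fixed.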

New Tools and Models Powering the 2026 Workflow

The core of the current ecosystem is the refined Firefly Video Model, which has seen significant upgrades in temporal consistency, meaning the ability of the AI to maintain the appearance of objects and characters across multiple frames. In previous iterations, "morphing" or "hallucinations" were common; however, the 2026 updates have virtually eliminated these issues for standard cinematic shots. This precision is what makes Adobe Firefly AI video creation a viable choice for commercial-grade output.

One of the most praised features released in May 2026 is the advanced AI Translation and Generative Dubbing tool. As reported by United News of India, this feature allows creators to translate the dialogue in a video into dozens of languages while simultaneously adjusting the speaker's lip movements to match the new audio. This level of localization was previously only possible with massive budgets and weeks of post-production, but it is now integrated directly into the Firefly suite.

Key Features Comparison: Firefly Video Evolution

| Feature | 2025 Version (Early) | 2026 Current Version |
|---|---|---|
| Max Resolution | 1080p Upscaled | Native 4K Generative Output |
| Generation Limits | Credit-based system | Unlimited Generations (Pro Plans) |
| Translation | Audio only | Full Lip-Sync & Visual Translation |
| Integration | Web-based beta | Deep Premiere Pro & After Effects Integration |
| Creative Assistance | Manual Prompts | AI Creative Agents & Context-Aware Suggestions |

Reinventing Color and Style with Adobe Firefly AI Video Creation

Color grading has traditionally been one of the most time-consuming aspects of video editing, requiring a specialized eye and hours of fine-tuning. In April 2026, Adobe announced a complete reinvention of color workflows for editors. By leveraging Firefly’s generative capabilities, editors can now apply complex color grades simply by describing a mood or uploading a reference photo. The AI analyzes the luminance and chrominance of the source footage and applies a non-destructive grade that closely matches the target style.

According to Adobe’s official April 2026 press release, this "reinvented color" system isn't just a filter; it’s a generative process that understands the 3D depth of a scene. It can differentiate between the foreground subject and the background, allowing for selective color adjustments that would typically require complex masking and rotoscoping. This specific application of Adobe Firefly AI video creation tools has reduced the post-production timeline for independent filmmakers by an estimated 40%.
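To make the luminance and chrominance analysis mentioned above more concrete, here is a minimal sketch of a classic, non-generative technique: split each pixel into luma and chroma using the BT.709 coefficients, then shift the source luma so its average matches a reference. This is not Adobe's method, and the function names are hypothetical. It only illustrates why separating brightness from color lets a grade change one without disturbing the other.

```python
# BT.709 luma coefficients, the standard weighting for HD video.
KR, KG, KB = 0.2126, 0.7152, 0.0722


def rgb_to_ycbcr(r, g, b):
    """Split a normalized RGB pixel (0..1) into luma Y and chroma Cb, Cr."""
    y = KR * r + KG * g + KB * b
    cb = (b - y) / (2 * (1 - KB))
    cr = (r - y) / (2 * (1 - KR))
    return y, cb, cr


def ycbcr_to_rgb(y, cb, cr):
    """Inverse of rgb_to_ycbcr."""
    r = y + 2 * (1 - KR) * cr
    b = y + 2 * (1 - KB) * cb
    g = (y - KR * r - KB * b) / KG
    return r, g, b


def mean_luma(pixels):
    """Average luma over a list of RGB pixels."""
    return sum(rgb_to_ycbcr(*p)[0] for p in pixels) / len(pixels)


def match_luma(source, reference):
    """Return a new pixel list whose mean luma equals the reference's.

    Chroma is left untouched and the source list is not modified, which
    mirrors the idea of a non-destructive grade. Results are not clamped
    to the 0..1 range in this sketch.
    """
    offset = mean_luma(reference) - mean_luma(source)
    graded = []
    for pixel in source:
        y, cb, cr = rgb_to_ycbcr(*pixel)
        graded.append(ycbcr_to_rgb(y + offset, cb, cr))
    return graded


source = [(0.2, 0.2, 0.2), (0.4, 0.3, 0.2)]
reference = [(0.6, 0.6, 0.6), (0.5, 0.5, 0.5)]
graded = match_luma(source, reference)
```

A production grade would also match contrast and color statistics and would work per region rather than per frame, which is where the depth-aware separation described above comes in.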

The "Putting Ideas in Motion" initiative, launched in February 2026, also introduced "Style Kits." These are pre-trained generative profiles that allow brands to maintain visual consistency across all AI-generated video content. If a company has a specific "look" (e.g., high-contrast, noir, or vibrant 3D animation), the Firefly model can be constrained to only produce video assets that fit within those brand guidelines, ensuring that the use of AI doesn't dilute the brand's identity.

Generative Extend and the End of "Short Footage"

The "Generative Extend" tool remains one of the most practical applications of Adobe Firefly AI video creation. Every editor has faced the problem of a clip being two seconds too short for a transition or a music beat. With the 2026 updates, Firefly can now extend clips by up to five seconds per generation, perfectly mimicking the camera movement and the micro-expressions of subjects within the frame. This tool has become a "must-have" for social media creators who need to sync visuals to trending audio tracks with frame-perfect accuracy.
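The arithmetic behind a short clip is simple enough to sketch. The helper below is hypothetical and calls no Adobe API. It takes the gap you need to fill and the timeline frame rate, then reports how many generations that would require under the five-second limit quoted above and how many new frames are involved. It assumes that longer gaps can be covered by chaining several generations.

```python
import math

MAX_SECONDS_PER_GENERATION = 5.0  # per-generation limit quoted in this article


def plan_extension(gap_seconds, fps):
    """Return (generations, new_frames) needed to cover a gap in a clip."""
    if gap_seconds <= 0:
        return 0, 0
    generations = math.ceil(gap_seconds / MAX_SECONDS_PER_GENERATION)
    new_frames = math.ceil(gap_seconds * fps)
    return generations, new_frames


print(plan_extension(2, 24))   # (1, 48)
print(plan_extension(12, 30))  # (3, 360)
```

For the common case of a clip that is two seconds short at 24 frames per second, a single generation has to produce 48 new frames.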

Studies and industry feedback highlighted by Forbes indicate that the "Unlimited Generations" model introduced in late 2025 was the catalyst for the widespread adoption of Generative Extend. Editors are no longer afraid to experiment with multiple versions of an extension to see which one fits the narrative flow best. This freedom to iterate without the fear of exhausting credits has led to a more creative and less stressful editing environment.

Advanced Capabilities of Generative Extend

- Ambient Noise Generation: The tool now generates synchronized ambient background audio to match the extended visual frames.

- Motion Smoothing: AI-driven frame interpolation ensures that extended clips do not suffer from jitter or "ghosting" effects.

- Object Persistence: High-level tracking ensures that even complex moving objects remain consistent during the generated sequence.

The Role of Creative Agents in Video Production

The introduction of "Creative Agents" in April 2026 represents the next frontier of Adobe Firefly AI video creation. Unlike a standard chatbot, these agents are embedded within the Premiere Pro interface and have access to the project's metadata. An editor can ask the agent, "Find a 5-second B-roll clip of a bustling Tokyo street at night that matches my current color grade," and the agent will either find a match in Adobe Stock or generate a brand-new clip using Firefly.

As Business Wire noted, these agents are designed to act as a "creative partner." They can suggest alternative cuts, identify "dead air" in an interview, and automatically generate captions that are stylistically aligned with the video's theme. For solo content creators, this is like having a professional assistant editor, allowing them to focus on the storytelling and high-level creative decisions while the AI handles the technical execution.
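Spotting "dead air" is one of the more mechanical tasks in that list, and a simple version can be sketched without any AI. The example below is an assumption-laden illustration, not a description of how Creative Agents work: `find_dead_air` is a hypothetical function that scans audio amplitude samples for stretches that stay below a loudness threshold for at least a minimum duration.

```python
def find_dead_air(samples, sample_rate, threshold=0.02, min_seconds=1.0):
    """Return (start_s, end_s) spans where |amplitude| stays below threshold."""
    min_len = int(min_seconds * sample_rate)
    spans = []
    start = None  # index where the current quiet run began, if any
    for i, sample in enumerate(samples):
        if abs(sample) < threshold:
            if start is None:
                start = i
        else:
            if start is not None and i - start >= min_len:
                spans.append((start / sample_rate, i / sample_rate))
            start = None
    # Handle a quiet run that lasts until the end of the recording.
    if start is not None and len(samples) - start >= min_len:
        spans.append((start / sample_rate, len(samples) / sample_rate))
    return spans


# Toy signal at 10 samples per second: 1 s of speech, 2 s of silence, 1 s of speech.
signal = [0.5] * 10 + [0.0] * 20 + [0.5] * 10
print(find_dead_air(signal, sample_rate=10))  # [(1.0, 3.0)]
```

Real interview audio has background noise, so the threshold and minimum duration would need tuning per recording.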

This development is part of Adobe’s broader strategy to lead the "New Era of Creativity." By focusing on agency and control, Adobe ensures that the professional community remains at the center of the workflow. The AI doesn't make the final decision; it presents options and executes tasks based on the editor's explicit instructions, maintaining a "human-in-the-loop" philosophy that is critical for professional ethics and quality control.

Ethics, Transparency, and Content Credentials

As Adobe Firefly AI video creation becomes more powerful, the importance of ethical AI use has never been higher. Adobe has maintained its commitment to "commercially safe" AI by training its models on licensed content, such as Adobe Stock, and public domain works. This ensures that professionals can use Firefly-generated video in commercial projects without the risk of copyright infringement, a major differentiator in the 2026 market.

Every piece of video content touched by Firefly tools automatically includes Content Credentials. This digital "nutrition label" tracks the history of the asset, showing exactly which parts were generated or edited by AI. In an era of deepfakes and misinformation, this transparency is vital. According to Adobe's December 2025 update, these credentials are now cryptographically linked to the file, making them tamper-evident and providing peace of mind to both creators and viewers.
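The idea of credentials that are cryptographically linked to a file can be illustrated with a small sketch. Content Credentials build on the C2PA open standard, which relies on certificate-based signatures. The example below is a deliberate simplification that uses a hash of the asset plus an HMAC with a shared demo key as a stand-in. The manifest layout, function names, and key are all hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical stand-in for a real signing credential (illustration only).
SIGNING_KEY = b"demo-key-not-for-production"


def make_manifest(asset, actions):
    """Bind a list of edit actions to the exact bytes of an asset."""
    claim = {"asset_sha256": hashlib.sha256(asset).hexdigest(), "actions": actions}
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify(asset, manifest):
    """Return True only if neither the asset nor the claim has been altered."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, manifest["signature"])
    hash_ok = hashlib.sha256(asset).hexdigest() == manifest["claim"]["asset_sha256"]
    return signature_ok and hash_ok


video = b"example video bytes"
manifest = make_manifest(video, ["generative_extend"])
print(verify(video, manifest))             # True
print(verify(video + b"edited", manifest))  # False
```

Changing either the file or the recorded history breaks verification, which is the property that makes such a record tamper-evident.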

Is Adobe Firefly AI video creation free to use?

Adobe Firefly offers a tiered model. While there is a free version with limited "generative credits," the full suite of video tools and the "unlimited generations" feature introduced in 2025 require a Creative Cloud subscription or a standalone Firefly premium plan.

Can I use Firefly-generated videos for commercial work?

Yes, Adobe Firefly is specifically designed to be commercially safe. The models are trained on Adobe Stock and other licensed datasets, and Adobe provides indemnification for many enterprise users to ensure legal security.

What is the maximum resolution for Firefly AI videos in 2026?

As of the 2026 updates, Adobe Firefly supports native generative output up to 4K resolution. This is a significant improvement over the 1080p limits seen in earlier beta versions of the software.

Do I need a powerful computer to run these AI tools?

Most Adobe Firefly AI video creation tasks are processed in the cloud on Adobe’s servers. While a stable internet connection is required, the heavy lifting of the AI generation does not rely on your local GPU, though Premiere Pro still benefits from a powerful system for local timeline playback.

How does "Generative Extend" differ from traditional slow motion?

Traditional slow motion stretches existing frames over a longer duration, which changes the speed of the action. Generative Extend instead uses AI to create entirely new frames that continue the action or movement of the original clip, so the clip runs longer without losing quality or changing speed.
