Mango AI Text to Video: Meta's 2026 Generative Revolution

The landscape of digital content creation has undergone a seismic shift as we move through 2026, and at the heart of this transformation is Mango AI text to video. Originally teased in late 2025 as a secret project within the halls of Meta Platforms, Mango has emerged as a cornerstone of generative media. By bridging the gap between simple text descriptions and high-fidelity cinematic output, this model represents a new era where the barrier between imagination and visual reality is virtually non-existent. As creators and enterprises alike look for more efficient ways to produce engaging video content, Mango AI stands out as the premier solution for 2026's demanding digital ecosystem.

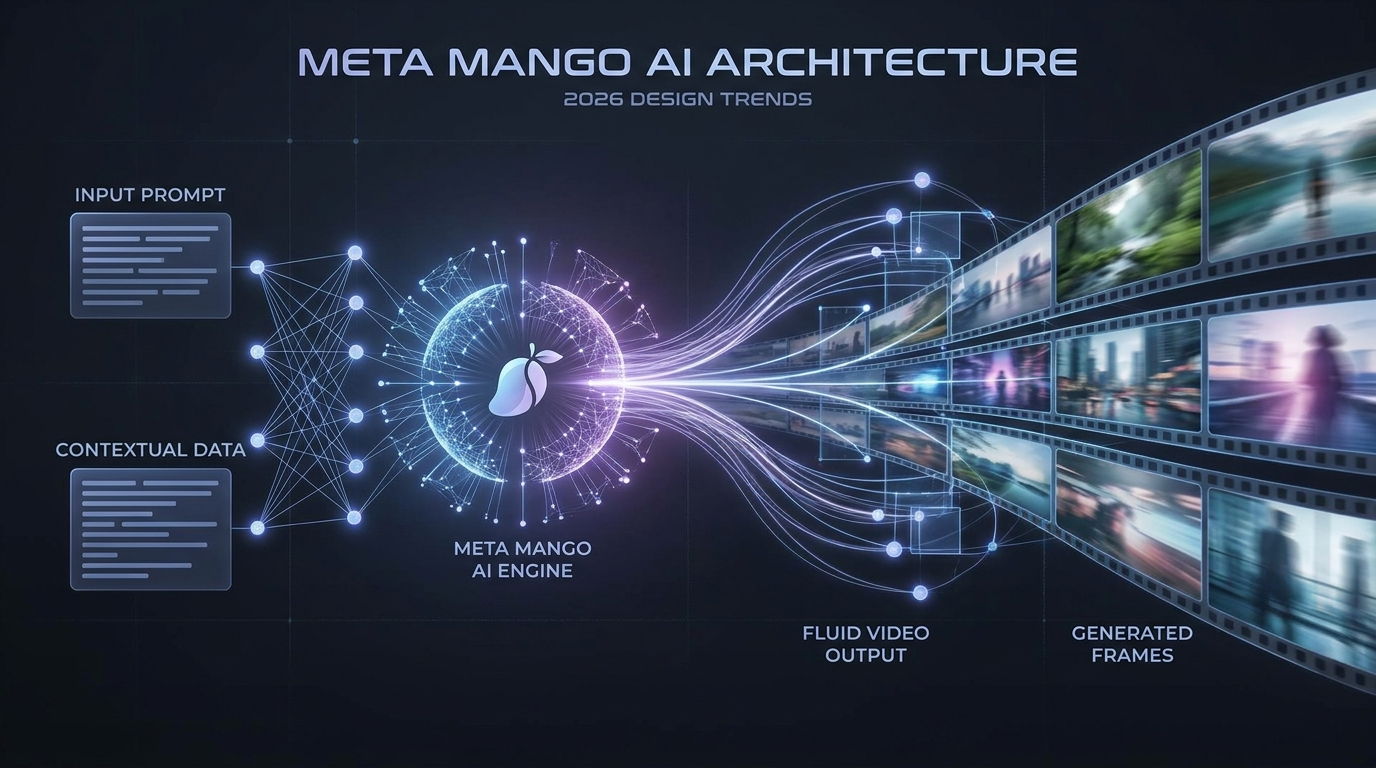

Mango AI text to video is Meta's next-generation generative AI model, launched in 2026, designed to create high-quality videos and images from text prompts. Developed alongside the "Avocado" text model, Mango utilizes advanced neural architectures to produce photorealistic motion, consistent character rendering, and professional-grade visual storytelling for creators and businesses.

- ✓ Mango AI is Meta’s flagship 2026 model for integrated image and video generation.

- ✓ It works in tandem with "Avocado," a specialized text model, for better prompt comprehension.

- ✓ The tool specializes in bringing static images to life and creating full-length video clips from scratch.

- ✓ Built-in safety features and watermarking ensure responsible AI usage in the 2026 media landscape.

The Evolution of Meta’s Mango AI Text to Video Technology

The journey of Mango AI began in late 2025 when reports from the Wall Street Journal and The Information first revealed Meta’s ambitious plans to compete at the highest levels of generative AI. Code-named ‘Mango’ during its development phase, the project was designed to avoid the common pitfalls of earlier video models, such as temporal inconsistency and "morphing" artifacts. In 2026, the official rollout has proven that Meta’s investment in custom silicon and massive datasets has paid off, providing a tool that understands the nuances of physical motion and lighting better than any predecessor.

According to reports from the Wall Street Journal in December 2025, Meta specifically developed Mango to be a multimodal powerhouse. Unlike previous iterations that felt like experimental toys, the 2026 version of Mango AI text to video is built for production-grade environments. It doesn't just generate pixels; it understands the underlying geometry of the scene. This leap allows for complex camera movements, such as sweeping drone shots or tight rack focuses, that were previously impossible to achieve through text prompts alone.

Furthermore, the synergy between Mango and its sibling model, Avocado, cannot be overstated. While Mango handles the visual synthesis, Avocado provides the linguistic intelligence. This dual-model approach ensures that when a user inputs a complex narrative prompt, the AI doesn't just pick out keywords; it understands the emotional subtext and the chronological flow of the requested scene. This makes Mango AI text to video a sophisticated director rather than just a rendering engine.

Key Features of Mango AI in 2026

One of the most talked-about features of the Mango AI suite is its ability to transform static images into dynamic talking avatars and cinematic sequences. As reported by PRUnderground in June 2025, early versions of Mango AI tools were already rolling out capabilities to bring static portraits to life with realistic lip-syncing and natural expressions. By 2026, this has evolved into full-body animation where the AI can infer movement from a single photograph, allowing creators to build entire scenes around a single piece of concept art.

High-Fidelity Video Synthesis

The core of the Mango AI text to video experience is its 8K resolution output. In 2026, the standard for social media and professional broadcasting has moved beyond 4K, and Mango meets this demand by generating crisp, noise-free textures. Whether it is the realistic texture of skin, the complex physics of flowing water, or the intricate details of a futuristic cityscape, the model maintains clarity across every frame. This is a significant milestone in generative AI, where
