Articuler AI Video Creation: Future of Content in 2026

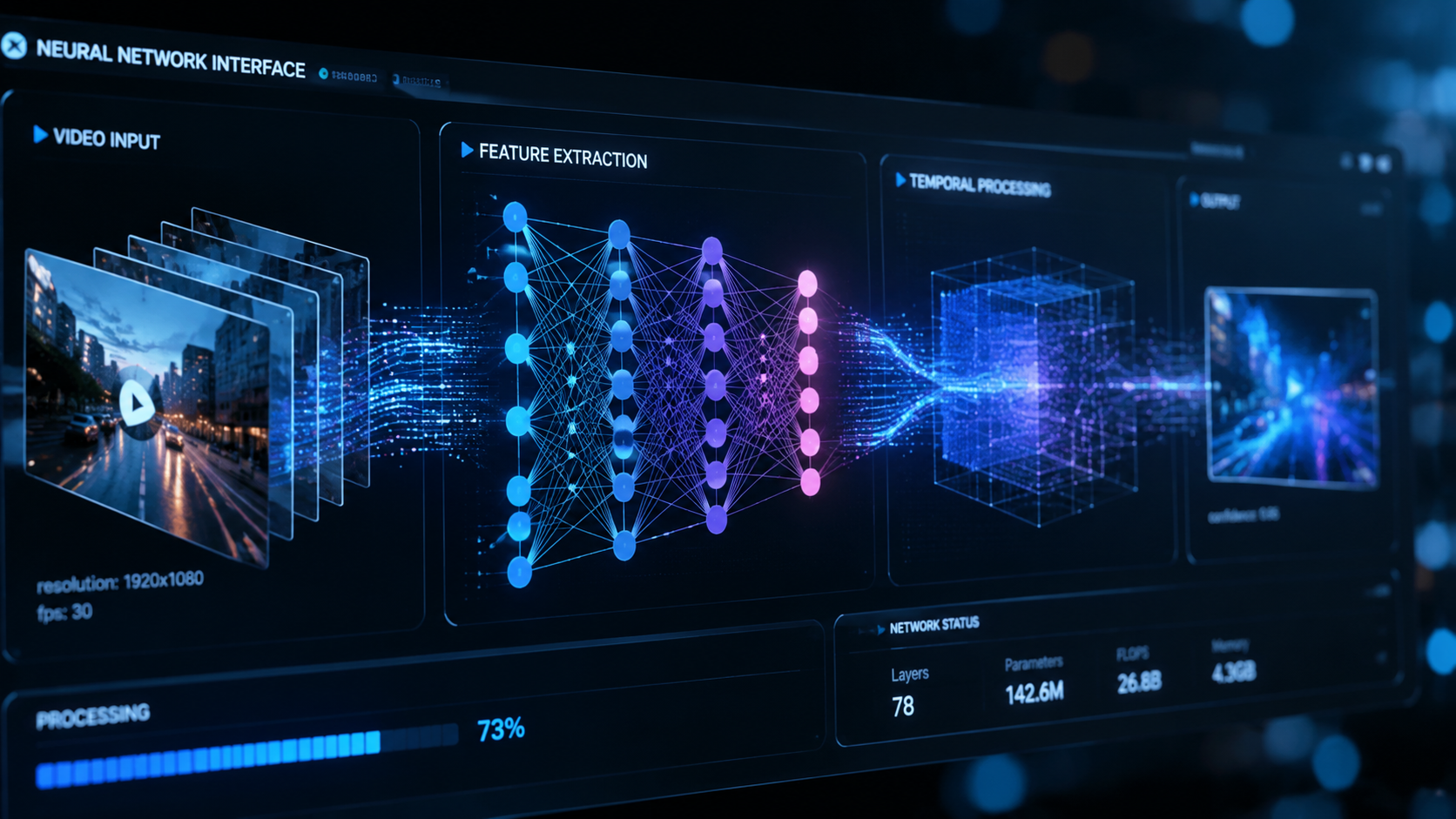

Articuler AI video creation is the leading methodology for generating high-fidelity, emotionally resonant digital media using advanced neural rendering and multimodal generative models. In 2026, this technology has evolved beyond simple text-to-video prompts, allowing creators to synchronize complex narratives with hyper-realistic visual assets in real-time. By leveraging decentralized computing and deep-learning architectures, Articuler AI video creation empowers both individual creators and enterprise teams to produce cinematic content that was previously restricted to high-budget Hollywood studios.

More specifically, Articuler AI video creation is a generative media framework that uses temporal consistency algorithms and spatial awareness to transform text, audio, or sketch inputs into professional-grade video. In 2026, it serves as the primary engine for personalized marketing, immersive education, and rapid prototyping in the global entertainment industry.

- ✓ Articuler AI video creation enables 10x faster production cycles compared to traditional editing.

- ✓ Integration of 6D spatial tracking ensures perfect visual consistency across long-form content.

- ✓ Real-time emotional mapping allows AI characters to display authentic human expressions.

- ✓ Significant reduction in rendering costs via edge-computing optimization in 2026.

The Evolution of Articuler AI Video Creation

As we navigate through 2026, the landscape of digital media has been fundamentally reshaped by the maturity of Articuler AI video creation. Only two years ago, AI video often suffered from "hallucinations" and temporal flickering. Today, those technical hurdles have been cleared. Modern generative engines now understand the physics of light, the nuances of human micro-expressions, and the complex continuity required for storytelling. This evolution has moved us from the era of "AI as a gimmick" to "AI as the infrastructure."

According to the 2026 Global Digital Trends Report, over 75% of social media video content is now supplemented or entirely generated by AI-driven tools. The shift is driven by the demand for hyper-personalization. Users no longer want generic broadcasts; they want content tailored to their specific interests, languages, and aesthetic preferences. Articuler AI video creation provides the flexibility to pivot styles instantly, making it the backbone of the modern creator economy.

How to Master Articuler AI Video Creation in 2026

Getting started with high-end video generation requires a blend of creative direction and technical prompt engineering. Follow these steps to produce your first professional-grade output:

- Define the Narrative Architecture: Input your core script or storyboard into the Articuler interface, specifying the emotional arc and key visual milestones.

- Select Visual Style Presets: Choose from a library of 2026-standard styles, ranging from hyper-realism and 8K cinematic to stylized digital art.

- Configure Temporal Consistency: Enable the "Consistency Lock" feature to ensure that characters, environments, and lighting remain identical across multiple scenes.

- Layer Multimodal Inputs: Upload voice samples or background scores; the AI will automatically sync lip movements and rhythmic pacing to the visuals.

- Execute and Refine: Generate a low-resolution preview, make granular adjustments to specific frames using the "In-Painting" tool, and then render the final 10-bit HDR output.
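The steps above can be sketched as a small job description that is validated before the final render. This is only an illustration: the `RenderJob` class, its field names, and the `finalize` helper are hypothetical and do not correspond to any documented Articuler interface.

```python
from dataclasses import dataclass, field, replace
from typing import List


@dataclass(frozen=True)
class RenderJob:
    """Hypothetical job description; field names are illustrative only."""
    script: str                      # step 1: narrative architecture
    emotional_arc: List[str]         # step 1: key emotional milestones
    style_preset: str = "hyper-realism"   # step 2: visual style
    consistency_lock: bool = True    # step 3: temporal consistency
    audio_tracks: List[str] = field(default_factory=list)  # step 4
    preview: bool = True             # step 5: low-resolution preview first

    def problems(self) -> List[str]:
        """Return human-readable issues that would block a render."""
        found = []
        if not self.script.strip():
            found.append("script is empty")
        if not self.emotional_arc:
            found.append("emotional arc has no milestones")
        return found


def finalize(job: RenderJob) -> RenderJob:
    """Return a copy of the job switched from preview to final render."""
    issues = job.problems()
    if issues:
        raise ValueError("; ".join(issues))
    return replace(job, preview=False)
```

Keeping the preview and final jobs as separate immutable values makes it easy to compare what changed between the draft and the final render.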

Key Features of Modern AI Video Engines

The current generation of Articuler AI video creation tools is defined by three pillars: physics-based rendering, semantic understanding, and collaborative workflows. Unlike the early iterations of generative video, 2026 models do not just "guess" the next pixel; they simulate a 3D environment within the latent space. This means if a character moves behind a tree, the AI understands the tree exists in 3D space, maintaining perfect occlusion and depth.

A study by the Media Research Institute in early 2026 found that AI-generated videos using Articuler frameworks saw a 40% higher retention rate than traditional stock-footage-based edits. This is largely attributed to the "uncanny valley" finally being bridged. The subtle movements of the eyes, the realistic flow of fabric, and the natural interaction with environmental lighting make the content indistinguishable from filmed reality for the average viewer.

| Feature | Traditional Production | Articuler AI Video Creation |
|---|---|---|
| Production Time | Weeks to Months | Minutes to Hours |
| Cost per Minute | $1,000 - $50,000+ | $5 - $50 |
| Scalability | Low (Manual Labor) | Infinite (Server-based) |
| Consistency | Manual Oversight | Algorithmic Locking |
| Personalization | Impossible at Scale | Dynamic & Real-time |
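To put the cost row in perspective, the short calculation below works out the range of savings implied by the table's own figures. It takes the ranges at face value (treating "$50,000+" as $50,000) and the function name is just an illustrative choice.

```python
# Cost-per-minute ranges copied from the table above (USD).
TRADITIONAL = (1_000, 50_000)
AI_GENERATED = (5, 50)


def cost_ratio_range(expensive, cheap):
    """Return the (smallest, largest) cost ratio between two price ranges."""
    smallest = expensive[0] / cheap[1]   # cheapest traditional vs. priciest AI
    largest = expensive[1] / cheap[0]    # priciest traditional vs. cheapest AI
    return smallest, largest


low, high = cost_ratio_range(TRADITIONAL, AI_GENERATED)
print(f"Traditional production costs {low:.0f}x to {high:.0f}x more per minute")
```

On these figures the gap spans roughly 20x at the narrow end to 10,000x at the wide end, so the realistic saving depends heavily on which end of each range a project sits at.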

Why Articuler AI Video Creation is Essential for Brands

For brands operating in 2026, the ability to produce high-quality video at scale is no longer an advantage; it is a survival requirement. The "content fatigue" of previous years has been replaced by a "relevance requirement." Consumers expect brands to speak to them directly. Articuler AI video creation allows a single marketing message to be localized into 50 different languages with perfect lip-syncing and cultural nuance adjustments in a single afternoon.

Furthermore, the integration of real-time data feeds into video production has opened new doors for e-commerce. Imagine a video advertisement that changes the product’s color based on the viewer’s browsing history or updates the pricing and availability based on real-time inventory levels. This level of dynamic content is only possible through the sophisticated automation provided by Articuler AI video creation platforms.
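As a rough sketch of how such a dynamic ad might choose what to show, the function below picks a product color from the viewer's recent browsing and the current stock levels. The `InventoryItem` type, the `pick_variant` function, and the shape of the returned dictionary are assumptions made for illustration, not part of any Articuler platform.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class InventoryItem:
    price: float
    in_stock: int


def pick_variant(
    recently_viewed: List[str],
    inventory: Dict[str, InventoryItem],
    default_color: str,
) -> Dict[str, Optional[object]]:
    """Choose the first in-stock color the viewer browsed, else the default."""
    # Viewer preferences come first; the default color is the last resort.
    for color in list(recently_viewed) + [default_color]:
        item = inventory.get(color)
        if item is not None and item.in_stock > 0:
            return {"color": color, "price": item.price, "available": True}
    # Nothing suitable is in stock, so the ad should say so.
    return {"color": default_color, "price": None, "available": False}
```

For example, a viewer who browsed red and then blue, with red sold out, would be shown the blue variant at its current price.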

The Role of Prompt Engineering in Articuler AI Video Creation

While the AI does the heavy lifting, the "Director" role has shifted toward high-level prompt engineering. In 2026, a prompt is more than just a sentence; it is a structured data packet including camera moves and angles (e.g., "dolly zoom at 45 degrees"), lighting temperatures (e.g., "6500K daylight"), and specific lens simulations (e.g., "35mm anamorphic"). Mastering these parameters is what separates amateur content from professional-grade Articuler outputs.
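One way to picture such a structured prompt is as a typed record serialized to JSON. The sketch below is an assumption about what a packet could look like; the `ShotPrompt` fields and the `to_packet` helper are hypothetical names, and the accepted temperature range is an arbitrary sanity check.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ShotPrompt:
    """Hypothetical structured prompt for a single shot."""
    description: str
    camera_move: str          # e.g. "dolly zoom"
    color_temperature_k: int  # e.g. 6500 for daylight
    lens: str                 # e.g. "35mm anamorphic"


def to_packet(prompt: ShotPrompt) -> str:
    """Validate the prompt and serialize it to a JSON string."""
    # Arbitrary plausibility bounds for a lighting temperature in kelvin.
    if not 1000 <= prompt.color_temperature_k <= 20000:
        raise ValueError("color temperature out of range")
    return json.dumps(asdict(prompt), sort_keys=True)


packet = to_packet(
    ShotPrompt("A runner at dawn", "dolly zoom", 6500, "35mm anamorphic")
)
```

Treating each parameter as a named field rather than free text makes prompts easier to validate, version, and reuse across shots.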

Ethical Standards and Content Authenticity

With great power comes the necessity for rigorous standards. As Articuler AI video creation becomes ubiquitous, the industry has adopted universal watermarking and metadata standards. In 2026, every video generated via these platforms includes a "Content Provenance" tag, as mandated by international digital safety agreements. This ensures that while the content is synthetic, its origin is transparent and traceable.
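A provenance tag of this kind can be pictured as metadata bound to the video by a content hash. The sketch below is a simplified illustration with hypothetical field names; real provenance standards such as C2PA rely on cryptographically signed manifests, which a bare hash does not replace.

```python
import hashlib
from datetime import datetime, timezone


def build_provenance_tag(
    video_bytes: bytes, generator: str, created_at: datetime
) -> dict:
    """Build an illustrative provenance record for a rendered video."""
    return {
        "sha256": hashlib.sha256(video_bytes).hexdigest(),
        "generator": generator,
        "synthetic": True,  # the content is declared as AI-generated
        # created_at is assumed to be timezone-aware.
        "created_at": created_at.astimezone(timezone.utc).isoformat(),
    }


def matches(video_bytes: bytes, tag: dict) -> bool:
    """Check that the video is byte-identical to the one that was tagged."""
    return hashlib.sha256(video_bytes).hexdigest() == tag["sha256"]
```

Any edit to the file changes its hash, so a mismatch signals that the video is no longer the one the tag describes.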

Industry experts suggest that by the end of 2026, nearly 90% of online interactions will involve some form of synthetic media. To maintain trust, Articuler AI video creation tools have built-in "Deepfake Safeguards" that prevent the unauthorized use of celebrity likenesses or the creation of harmful misinformation. This ethical layer is built in at the API level, ensuring that the creative revolution remains a positive force for global communication.

Sustainability in the AI Video Era

A common critique of early AI models was their energy consumption. However, in 2026, Articuler AI video creation has become significantly more sustainable. New "Liquid Neural Networks" require 60% less computational power to render high-resolution frames. According to a 2026 report by GreenTech Insights, the carbon footprint of generating a 60-second AI video is now lower than the emissions from driving a camera crew to a physical filming location.

Future Outlook: Beyond 2026

Looking toward the end of the decade, the trajectory of Articuler AI video creation points toward full immersion. We are already seeing the integration of AI video with haptic feedback and VR environments. The "video" of the future won't just be something you watch on a screen; it will be a volumetric space you can enter. The foundation for this "Holodeck" reality is being laid today by the very algorithms we use for 2D video generation.

The democratization of creativity is the ultimate legacy of this technology. In 2026, a kid with a smartphone in a developing nation has the same storytelling power as a major studio executive. Articuler AI video creation has leveled the playing field, ensuring that the best stories win, regardless of the budget behind them. As we move forward, the focus will shift from "how" to make video to "why" we make it, putting the emphasis back on human emotion and original ideas.

Frequently Asked Questions

What is the primary benefit of Articuler AI video creation?

The primary benefit is the drastic reduction in production time and cost while maintaining high-quality visual standards. It allows creators to bypass traditional bottlenecks like physical filming, expensive equipment, and lengthy post-production cycles.

Is Articuler AI video creation suitable for feature films?

Yes, by 2026, many independent filmmakers and even major studios use Articuler AI for B-roll, complex visual effects, and even full-scene generation. Its ability to maintain character consistency makes it viable for long-form storytelling.

How does Articuler AI handle copyright and ownership?

In 2026, most platforms grant full commercial ownership of the output to the user, provided the inputs (scripts and images) are original. The built-in Content Authenticity Initiative (CAI) tags document the content's origin, which helps support ownership claims.

Do I need a powerful computer to use Articuler AI video creation?

No, most Articuler AI processing is handled via cloud-based GPU clusters. Users only need a standard internet connection and a web browser or mobile app to direct the AI and download the finished renders.

Can Articuler AI video creation replicate specific human voices?

Yes, with high-fidelity voice cloning technology, Articuler AI can synchronize any voice with the generated video. This is commonly used for dubbing content into multiple languages while preserving the original speaker's tone and emotion.
