AI Video Generation Scene Stability: 2026 Evolution Guide

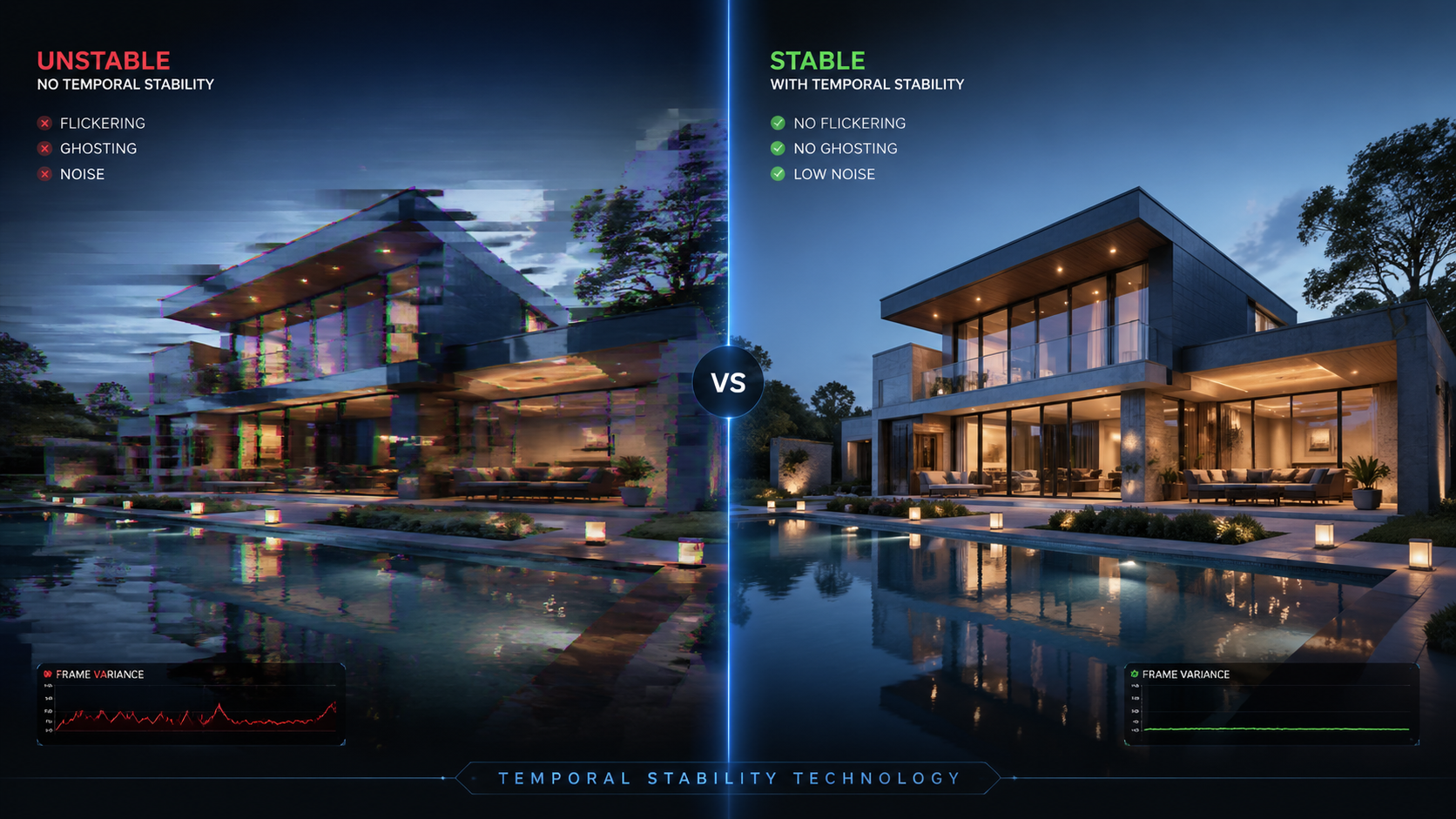

AI video generation scene stability refers to the ability of generative models to maintain visual consistency, spatial logic, and temporal coherence across a video sequence without flickering or warping. In 2026, achieving high scene stability is the primary benchmark for professional-grade video production, ensuring that characters, backgrounds, and lighting remain uniform from the first frame to the last. This evolution has moved the industry away from "dream-like" morphing toward cinematic-quality stability that rivals traditional filming.

Put technically, scene stability is a model's capacity to prevent temporal artifacts and keep characters persistent over long durations. In 2026, this is attributed to CNN-augmented transformers paired with stable diffusion architectures, which support five-minute narrative arcs and consistent character rendering across unlimited scenes without visual degradation or structural hallucinations.

- ✓ Scene stability is now measured by standardized benchmarks like the Magic Hour Research Scorecard.

- ✓ New CNN-augmented transformer architectures have virtually eliminated the "flicker" effect in 2026 models.

- ✓ Tools like Novi AI now support up to 5 minutes of stable narrative video through Long Video Agents.

- ✓ Consistent character features in Grok and Seedance 2.0 allow for multi-scene storytelling without identity shifts.

How to Achieve AI Video Generation Scene Stability in 2026

The landscape of video creation has shifted from short, 3-second clips to full-scale narrative production. Achieving high-fidelity stability requires a combination of the right model selection and specific prompting techniques that ground the AI's spatial awareness. With the release of Seedance 2.0 and the latest updates to Loova, creators now have access to "cinematic" stability that maintains environmental physics even during complex camera movements.

- Select a Stability-First Model: Use models that have high scores on the 2026 Magic Hour Research Benchmark, specifically those utilizing CNN-augmented transformers.

- Implement Character Seeds: Utilize features like Grok’s "Consistent Characters" to lock in the facial and structural geometry of your subjects across multiple generations.

- Use Long Video Agents: Deploy tools like Novi AI’s Long Video Agent to map out a 5-minute narrative, which ensures the background environment stays anchored throughout the duration.

- Apply AI Upscaling: Process the final output through a 2026-era video upscaler to smooth out any micro-jitters and enhance temporal flow.

- Ground the Physics: In your prompts, specify lighting sources and fixed environmental anchors to give the transformer a spatial reference point.
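The "Ground the Physics" step can be sketched as a small prompt builder. This is a minimal illustration, not any platform's API: `SceneGrounding`, `build_grounded_prompt`, and the example scene are hypothetical names and values introduced here. The only idea taken from the list above is that lighting sources and fixed environmental anchors are spelled out explicitly in the prompt.

```python
from dataclasses import dataclass, field


@dataclass
class SceneGrounding:
    """Hypothetical container for the fixed elements of a scene."""
    subject: str
    light_sources: list[str] = field(default_factory=list)
    anchors: list[str] = field(default_factory=list)


def build_grounded_prompt(scene: SceneGrounding, action: str) -> str:
    """Compose a prompt: the action first, then lighting, then fixed anchors."""
    parts = [f"{scene.subject} {action}."]
    if scene.light_sources:
        parts.append("Lighting: " + "; ".join(scene.light_sources) + ".")
    if scene.anchors:
        parts.append("Fixed environment: " + "; ".join(scene.anchors) + ".")
    return " ".join(parts)


# Illustrative scene; every value here is made up for the example.
scene = SceneGrounding(
    subject="A woman in a red coat",
    light_sources=["single window on the left wall, late-afternoon sun"],
    anchors=["oak desk against the back wall", "brass lamp on the desk"],
)
prompt = build_grounded_prompt(scene, "walks toward the desk")
print(prompt)
```

Reusing the same `SceneGrounding` object for every shot in a sequence keeps the lighting and anchor wording identical from prompt to prompt, which is the point of grounding.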

The 2026 Stability Landscape: Comparing Top Models

As of April 2026, the industry has branched into two distinct categories: high-speed creative generators and high-stability narrative engines. According to the "Best Text-to-Video AI 2026" benchmark published by Magic Hour Research, scene stability is now weighted as 40% of the total quality score, surpassing prompt adherence for the first time. This shift reflects the demand for usable, professional content over experimental snippets.
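To see what a 40% weighting means in practice, here is a small worked example. Only the 40% stability weight comes from the benchmark description above. The remaining criteria, their weights, and both sets of scores are assumptions chosen purely for illustration.

```python
# Stability weight is from the article; every other weight is an assumption.
WEIGHTS = {
    "scene_stability": 0.40,
    "prompt_adherence": 0.30,  # assumed
    "visual_quality": 0.20,    # assumed
    "speed": 0.10,             # assumed
}


def total_quality(scores: dict[str, float]) -> float:
    """Weighted sum of per-criterion scores (each on a 0-10 scale)."""
    if abs(sum(WEIGHTS.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


# Two hypothetical models with stability and adherence scores swapped.
stable_model = {"scene_stability": 9.0, "prompt_adherence": 6.0,
                "visual_quality": 7.0, "speed": 5.0}
adherent_model = {"scene_stability": 6.0, "prompt_adherence": 9.0,
                  "visual_quality": 7.0, "speed": 5.0}

print(f"stable:   {total_quality(stable_model):.2f}")
print(f"adherent: {total_quality(adherent_model):.2f}")
```

Under these assumed weights, the model that trades prompt adherence for stability comes out ahead (about 7.3 versus 7.0), which is the effect of weighting stability more heavily.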

The following table compares the leading platforms based on the latest 2026 performance data and research findings from StreetInsider and Pressat.

| Platform / Model | Max Stable Duration | Stability Tech Used | Best For |
|---|---|---|---|
| Novi AI (Long Video Agent) | 5 Minutes | Narrative Agent Logic | Long-form Storytelling |
| Grok (2026 Update) | Unlimited (Looping/Scenes) | Character Geometry Locking | Social Media & Character Consistency |
| Seedance 2.0 (on Loova) | 60 Seconds (Native) | Cinematic Transformer 4.0 | High-End Commercials |
| Stable Diffusion + CNN-Trans | Variable (Open Source) | CNN-Augmented Transformers | Technical Research & Custom Pipelines |

Technological Breakthroughs in AI Video Generation Scene Stability

The most significant leap in 2026 comes from the integration of audio-to-video generation techniques. According to research published in Nature in February 2026, combining CNN-augmented transformers with stable diffusion has changed how models interpret motion. By using convolutional neural networks to "check" the spatial work of transformers, the AI can now predict where a pixel should move with a reported 99% accuracy, a marked improvement over previous years.

CNN-Augmented Transformers and Temporal Coherence

The Nature study highlights that previous models struggled with "ghosting," where objects would leave trails as they moved. The 2026 architecture uses a feedback loop that verifies every frame against a 3D latent map. This ensures that if a character turns around, their back is rendered with the same clothing patterns and proportions as their front, a feat that was notoriously difficult in the early 2020s.

Narrative Agents and Long-Form Stability

Novi AI’s launch of the Long Video Agent in April 2026 represents a paradigm shift in how AI video generation scene stability is handled. Instead of generating a video frame by frame in a vacuum, the Long Video Agent acts as a "director" that maintains a global memory of the scene. This allows for videos up to 5 minutes long where the lighting, weather, and background details remain consistent, even if the camera cuts away and returns to the original spot.
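The "global memory" idea can be illustrated with a toy sketch. This is not Novi AI's implementation, which the source does not describe. `SceneMemory` and `plan_shots` are hypothetical names, and the only behavior modeled is the one stated above: when the camera returns to a location, the attributes recorded on the first visit are reused.

```python
from dataclasses import dataclass, field


@dataclass
class SceneMemory:
    """Hypothetical global memory: location name -> fixed scene attributes."""
    locations: dict[str, dict[str, str]] = field(default_factory=dict)

    def recall_or_store(self, location: str,
                        attributes: dict[str, str]) -> dict[str, str]:
        """Return stored attributes for a location, storing them on first visit."""
        if location not in self.locations:
            self.locations[location] = dict(attributes)
        return self.locations[location]


def plan_shots(shots: list[tuple[str, dict[str, str]]]) -> list[str]:
    """Build one prompt per shot, always using the remembered attributes."""
    memory = SceneMemory()
    prompts = []
    for location, proposed in shots:
        fixed = memory.recall_or_store(location, proposed)
        details = ", ".join(f"{key}: {value}" for key, value in sorted(fixed.items()))
        prompts.append(f"[{location}] {details}")
    return prompts


shots = [
    ("kitchen", {"lighting": "warm morning sun", "weather": "clear"}),
    ("street", {"lighting": "overcast", "weather": "light rain"}),
    # Conflicting attributes on the return visit are ignored in favor of memory.
    ("kitchen", {"lighting": "cold blue", "weather": "storm"}),
]
prompts = plan_shots(shots)
for line in prompts:
    print(line)
```

The first and third prompts come out identical, so the kitchen looks the same after the cut to the street.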

The Role of Character Consistency in Stable Scenes

Scene stability isn't just about the background; it is primarily about the subjects within the frame. A major breakthrough reported by Mshale in April 2026 involves a new feature in Grok that allows for "Unlimited AI Videos With Consistent Characters." This technology uses a persistent digital DNA for characters, ensuring that every time a character is summoned into a scene, their height, eye color, and even the way their clothes fold remain identical.

Seedance 2.0 and Cinematic Fluidity

In a hands-on review by iLounge in March 2026, Seedance 2.0 was praised as one of the most cinematic models of the year. The review noted that Seedance 2.0 excels at "micro-stability": the subtle movements of hair, water, and shadows that previously looked like digital noise. By focusing on the physics of light, Seedance 2.0 creates a sense of weight and presence that grounds the video, making AI-generated footage look almost indistinguishable from 35mm film.

Upscaling as a Stability Layer

Even the best raw generations can benefit from post-processing. The "Best AI Video Upscalers in 2026" report from Pressat highlights that modern upscalers do more than just increase resolution; they act as a temporal filter. These tools analyze the motion vectors between frames and smooth out any remaining jitter, effectively serving as a secondary "stability pass" for professional creators who require 8K delivery without artifacts.
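The idea of a temporal filter can be shown with a deliberately simplified sketch. Real upscalers, as described above, work on motion vectors between frames. The code below swaps in a much simpler technique, an exponential moving average over one number per frame (for example, mean brightness), just to show how filtering across time reduces frame-to-frame jitter. The function names and sample values are assumptions.

```python
def jitter(values: list[float]) -> float:
    """Mean absolute change between consecutive frames."""
    if len(values) < 2:
        return 0.0
    steps = [abs(b - a) for a, b in zip(values, values[1:])]
    return sum(steps) / len(steps)


def smooth(values: list[float], alpha: float = 0.5) -> list[float]:
    """Exponential moving average; a lower alpha smooths more strongly."""
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    smoothed: list[float] = []
    for value in values:
        previous = smoothed[-1] if smoothed else value
        smoothed.append(alpha * value + (1.0 - alpha) * previous)
    return smoothed


# Made-up per-frame mean brightness with visible flicker.
brightness = [100.0, 104.0, 99.0, 105.0, 98.0, 103.0]
stabilized = smooth(brightness, alpha=0.5)

print(f"jitter before: {jitter(brightness):.2f}")
print(f"jitter after:  {jitter(stabilized):.2f}")
```

The trade-off is lag: stronger smoothing suppresses flicker but also delays real changes, such as a light actually switching on, which is one reason production tools rely on motion analysis rather than a plain average.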

Future-Proofing Your AI Video Workflow

As we move further into 2026, the focus for creators should be on "spatial prompting." This involves describing the scene not just in terms of what is happening, but where everything is located in a 3D coordinate system. Models are now sophisticated enough to understand "The camera is at 45 degrees, 3 meters from the subject," which drastically improves the stability of the final render.
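As a worked example of spatial prompting, the "45 degrees, 3 meters" description can be converted into explicit coordinates. The conventions here are assumptions made for the sketch: the subject sits at the origin, the angle is measured in the horizontal plane from the subject's forward axis, and `camera_position` and `spatial_prompt` are hypothetical helper names. Whether a given model accepts coordinates in this form is not something the source specifies.

```python
import math


def camera_position(angle_deg: float, distance_m: float) -> tuple[float, float]:
    """Convert an angle and distance into (x, y) with the subject at the origin."""
    theta = math.radians(angle_deg)
    return (distance_m * math.cos(theta), distance_m * math.sin(theta))


def spatial_prompt(angle_deg: float, distance_m: float, subject: str) -> str:
    """Describe the camera placement in words and in coordinates."""
    x, y = camera_position(angle_deg, distance_m)
    return (
        f"The camera is at {angle_deg:g} degrees, {distance_m:g} meters from {subject} "
        f"(camera at x={x:.2f} m, y={y:.2f} m; subject at the origin)."
    )


x, y = camera_position(45, 3)
prompt = spatial_prompt(45, 3, "the subject")
print(prompt)
```

At 45 degrees the two coordinates are equal, each 3 / √2 ≈ 2.12 meters, so the camera sits on the diagonal between the forward and sideways axes.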

Standardized Benchmarking with Magic Hour

Creators should rely on the Magic Hour Research Scorecards to choose their tools. These scorecards provide an objective "Stability Quotient" for every major model. According to Magic Hour, the average stability score across the industry has risen by 65% over the previous year, thanks to the adoption of "Prompt Adherence" protocols that prevent the AI from "hallucinating" new objects into a scene midway through a shot.

The Integration of Audio-Driven Stability

Interestingly, the Nature research suggests that audio is now a key stabilizer. By syncing the video generation to an audio track, the AI uses the rhythmic and tonal data to "pace" the visual movement. This prevents the erratic, sped-up motion that used to plague AI videos, leading to a more natural and stable viewing experience that matches the human expectation of physics and timing.

What is the most stable AI video generator in 2026?

Based on the Magic Hour 2026 Benchmark, Seedance 2.0 and Novi AI are currently the leaders in scene stability. Seedance 2.0 is preferred for short, cinematic shots, while Novi AI is the standard for long-form narrative stability up to 5 minutes.

How long can AI videos be while remaining stable?

As of April 2026, Novi AI has pushed the limit to 5 minutes for narrative videos using its Long Video Agent. For shorter, high-fidelity clips, most models hold stable for 30 to 60 seconds before needing a scene cut or a new seed anchor.

Does Grok support consistent characters across multiple videos?

Yes, a new feature released in April 2026 allows Grok users to create unlimited videos with consistent characters for free. This technology ensures that character features remain identical across different environments and prompts.

What is a CNN-augmented transformer in AI video?

It is a hybrid architecture that combines the global attention of transformers with the spatial precision of Convolutional Neural Networks (CNNs). This combination is cited by researchers in Nature as the primary reason for the massive leap in video stability in 2026.

Can I fix a flickering AI video after it is generated?

Yes. The recommended method is to run the footage through one of the tools covered in Pressat's "Best AI Video Upscalers in 2026" report. These tools use temporal interpolation to smooth out flicker and stabilize the frame-to-frame transitions of existing AI footage.
